27. Biological Monitoring

Chapter Editor: Robert Lauwerys

Table of Contents

Tables and Figures

General Principles

Vito Foà and Lorenzo Alessio

Quality Assurance

D. Gompertz

Metals and Organometallic Compounds

P. Hoet and Robert Lauwerys

Organic Solvents

Masayuki Ikeda

Genotoxic Chemicals

Marja Sorsa

Pesticides

Marco Maroni and Adalberto Ferioli

Tables


1. ACGIH, DFG & other limit values for metals

2. Examples of chemicals & biological monitoring

3. Biological monitoring for organic solvents

4. Genotoxicity of chemicals evaluated by IARC

5. Biomarkers & some cell/tissue samples & genotoxicity

6. Human carcinogens, occupational exposure & cytogenetic end points

8. Exposure from production & use of pesticides

9. Acute OP toxicity at different levels of ACHE inhibition

10. Variations of ACHE & PCHE & selected health conditions

11. Cholinesterase activities of unexposed healthy people

12. Urinary alkyl phosphates & OP pesticides

13. Urinary alkyl phosphates measurements & OP

14. Urinary carbamate metabolites

15. Urinary dithiocarbamate metabolites

16. Proposed indices for biological monitoring of pesticides

17. Recommended biological limit values (as of 1996)


General Principles

Basic Concepts and Definitions

At the worksite, industrial hygiene methodologies can measure and control only airborne chemicals, while other aspects of the problem of possible harmful agents in the environment of workers, such as skin absorption, ingestion, and non-work-related exposure, remain undetected and therefore uncontrolled. Biological monitoring helps fill this gap.

Biological monitoring was defined at a 1980 seminar in Luxembourg, jointly sponsored by the European Economic Community (EEC), the National Institute for Occupational Safety and Health (NIOSH) and the Occupational Safety and Health Administration (OSHA) (Berlin, Yodaiken and Henman 1984), as “the measurement and assessment of agents or their metabolites either in tissues, secreta, excreta, expired air or any combination of these to evaluate exposure and health risk compared to an appropriate reference”. Monitoring is a repetitive, regular and preventive activity designed to lead, if necessary, to corrective actions; it should not be confused with diagnostic procedures.

Biological monitoring is one of the three important tools in the prevention of diseases due to toxic agents in the general or occupational environment, the other two being environmental monitoring and health surveillance.

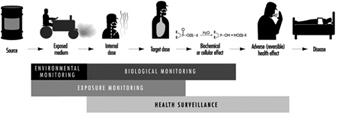

The sequence in the possible development of such disease may be schematically represented as follows: source → exposure to the chemical agent → internal dose → biochemical or cellular effect (reversible) → health effects → disease. The relationships among environmental, biological, and exposure monitoring, and health surveillance, are shown in figure 1.

Figure 1. The relationship between environmental, biological and exposure monitoring, and health surveillance

When a toxic substance (an industrial chemical, for example) is present in the environment, it contaminates air, water, food, or surfaces in contact with the skin; the amount of toxic agent in these media is evaluated via environmental monitoring.

As a result of absorption, distribution, metabolism, and excretion, a certain internal dose of the toxic agent (the net amount of a pollutant absorbed in or passed through the organism over a specific time interval) is effectively delivered to the body, and becomes detectable in body fluids. As a result of its interaction with a receptor in the critical organ (the organ which, under specific conditions of exposure, exhibits the first or the most important adverse effect), biochemical and cellular events occur. Both the internal dose and the elicited biochemical and cellular effects may be measured through biological monitoring.

Health surveillance was defined at the above-mentioned 1980 EEC/NIOSH/OSHA seminar as “the periodic medico-physiological examination of exposed workers with the objective of protecting health and preventing disease”.

Biological monitoring and health surveillance are parts of a continuum that can range from the measurement of agents or their metabolites in the body via evaluation of biochemical and cellular effects, to the detection of signs of early reversible impairment of the critical organ. The detection of established disease is outside the scope of these evaluations.

Goals of Biological Monitoring

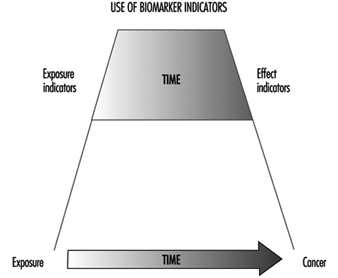

Biological monitoring can be divided into (a) monitoring of exposure, and (b) monitoring of effect, for which indicators of internal dose and of effect are used respectively.

The purpose of biological monitoring of exposure is to assess health risk through the evaluation of internal dose, achieving an estimate of the biologically active body burden of the chemical in question. Its rationale is to ensure that worker exposure does not reach levels capable of eliciting adverse effects. An effect is termed “adverse” if there is an impairment of functional capacity, a decreased ability to compensate for additional stress, a decreased ability to maintain homeostasis (a stable state of equilibrium), or an enhanced susceptibility to other environmental influences.

Depending on the chemical and the analysed biological parameter, the term internal dose may have different meanings (Bernard and Lauwerys 1987). First, it may mean the amount of a chemical recently absorbed, for example, during a single workshift. A determination of the pollutant’s concentration in alveolar air or in the blood may be made during the workshift itself, or as late as the next day (samples of blood or alveolar air may be taken up to 16 hours after the end of the exposure period). Second, in the case that the chemical has a long biological half-life—for example, metals in the bloodstream—the internal dose could reflect the amount absorbed over a period of a few months.

Third, the term may also mean the amount of chemical stored. In this case it represents an indicator of accumulation which can provide an estimate of the concentration of the chemical in organs and/or tissues from which, once deposited, it is only slowly released. For example, measurements of DDT or PCB in blood could provide such an estimate.

Finally, an internal dose value may indicate the quantity of the chemical at the site where it exerts its effects, thus providing information about the biologically effective dose. One of the most promising and important uses of this capability, for example, is the determination of adducts formed by toxic chemicals with protein in haemoglobin or with DNA.

Biological monitoring of effects is aimed at identifying early and reversible alterations which develop in the critical organ, and which, at the same time, can identify individuals with signs of adverse health effects. In this sense, biological monitoring of effects represents the principal tool for the health surveillance of workers.

Principal Monitoring Methods

Biological monitoring of exposure is based on the determination of indicators of internal dose by measuring:

- the amount of the chemical, to which the worker is exposed, in blood or urine (rarely in milk, saliva, or fat)

- the amount of one or more metabolites of the chemical involved in the same body fluids

- the concentration of volatile organic compounds (solvents) in alveolar air

- the biologically effective dose of compounds which have formed adducts to DNA or other large molecules and which thus have a potential genotoxic effect.

Factors affecting the concentration of the chemical and its metabolites in blood or urine will be discussed below.

As far as the concentration in alveolar air is concerned, besides the level of environmental exposure, the most important factors involved are solubility and metabolism of the inhaled substance, alveolar ventilation, cardiac output, and length of exposure (Brugnone et al. 1980).

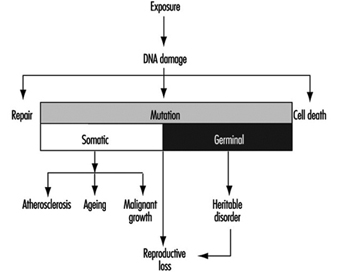

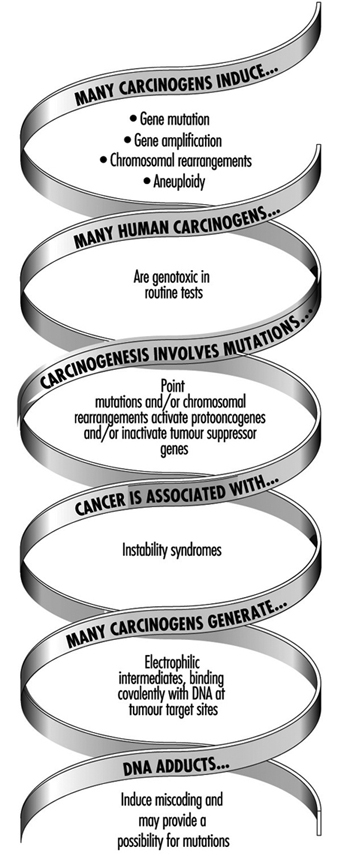

The use of DNA and haemoglobin adducts in monitoring human exposure to substances with carcinogenic potential is a very promising technique for measurement of low level exposures. (It should be noted, however, that not all chemicals that bind to macromolecules in the human organism are genotoxic, i.e., potentially carcinogenic.) Adduct formation is only one step in the complex process of carcinogenesis. Other cellular events, such as DNA repair, promotion and progression, undoubtedly modify the risk of developing a disease such as cancer. Thus, at the present time, the measurement of adducts should be seen as being confined only to monitoring exposure to chemicals. This is discussed more fully in the article “Genotoxic chemicals” later in this chapter.

Biological monitoring of effects is performed through the determination of indicators of effect, that is, those that can identify early and reversible alterations. This approach may provide an indirect estimate of the amount of chemical bound to the sites of action and offers the possibility of assessing functional alterations in the critical organ in an early phase.

Unfortunately, we can list only a few examples of the application of this approach, namely, (1) the inhibition of pseudocholinesterase by organophosphate insecticides, (2) the inhibition of δ-aminolaevulinic acid dehydratase (ALA-D) by inorganic lead, and (3) the increased urinary excretion of D-glucaric acid and porphyrins in subjects exposed to chemicals inducing microsomal enzymes and/or to porphyrogenic agents (e.g., chlorinated hydrocarbons).

Advantages and Limitations of Biological Monitoring

For substances that exert their toxicity after entering the human organism, biological monitoring provides a more focused and targeted assessment of health risk than does environmental monitoring. A biological parameter reflecting the internal dose brings us one step closer to understanding systemic adverse effects than does any environmental measurement.

Biological monitoring offers numerous advantages over environmental monitoring and in particular permits assessment of:

- exposure over an extended time period

- exposure as a result of worker mobility in the working environment

- absorption of a substance via various routes, including the skin

- overall exposure as a result of different sources of pollution, both occupational and non-occupational

- the quantity of a substance absorbed by the subject depending on factors other than the degree of exposure, such as the physical effort required by the job, ventilation, or climate

- the quantity of a substance absorbed by a subject depending on individual factors that can influence the toxicokinetics of the toxic agent in the organism; for example, age, sex, genetic features, or functional state of the organs where the toxic substance undergoes biotransformation and elimination.

In spite of these advantages, biological monitoring still suffers today from considerable limitations, the most significant of which are the following:

- The number of possible substances which can be monitored biologically is at present still rather small.

- In the case of acute exposure, biological monitoring supplies useful information only for exposure to substances that are rapidly metabolized, for example, aromatic solvents.

- The significance of biological indicators has not been clearly defined; for example, it is not always known whether the levels of a substance measured in biological material reflect current or cumulative exposure (e.g., urinary cadmium and mercury).

- Generally, biological indicators of internal dose allow assessment of the degree of exposure, but do not furnish data that will measure the actual amount present in the critical organ.

- Often there is no knowledge of possible interference in the metabolism of the substances being monitored by other exogenous substances to which the organism is simultaneously exposed in the working and general environment.

- There is not always sufficient knowledge on the relationships existing between the levels of environmental exposure and the levels of the biological indicators on the one hand, and between the levels of the biological indicators and possible health effects on the other.

- The number of biological indicators for which biological exposure indices (BEIs) exist at present is rather limited. Follow-up information is needed to determine whether a substance, presently identified as not capable of causing an adverse effect, may at a later time be shown to be harmful.

- A BEI usually represents a level of an agent that is most likely to be observed in a specimen collected from a healthy worker who has been exposed to the chemical to the same extent as a worker with an inhalation exposure to the TLV (threshold limit value) time-weighted average (TWA).

Information Required for the Development of Methods and Criteria for Selecting Biological Tests

Programming biological monitoring requires the following basic conditions:

- knowledge of the metabolism of an exogenous substance in the human organism (toxicokinetics)

- knowledge of the alterations that occur in the critical organ (toxicodynamics)

- existence of indicators

- existence of sufficiently accurate analytical methods

- possibility of using readily obtainable biological samples on which the indicators can be measured

- existence of dose-effect and dose-response relationships and knowledge of these relationships

- predictive validity of the indicators.

In this context, the validity of a test is the degree to which the parameter under consideration predicts the situation as it really is (i.e., as more accurate measuring instruments would show it to be). Validity is determined by the combination of two properties: sensitivity and specificity. If a test possesses a high sensitivity, this means that it will give few false negatives; if it possesses high specificity, it will give few false positives (CEC 1985-1989).
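As a minimal sketch of how these two properties are calculated, the following Python fragment derives sensitivity and specificity from the four possible outcomes of comparing a test result with the true state of the subject. The function name and the counts are hypothetical and serve only as an illustration; they are not data from any study.

```python
def sensitivity_specificity(true_pos, false_neg, true_neg, false_pos):
    """Return (sensitivity, specificity) from the counts of test outcomes.

    sensitivity = fraction of truly affected subjects the test detects
    specificity = fraction of truly unaffected subjects the test clears
    """
    affected = true_pos + false_neg
    unaffected = true_neg + false_pos
    if affected == 0 or unaffected == 0:
        raise ValueError("need at least one affected and one unaffected subject")
    return true_pos / affected, true_neg / unaffected


# Hypothetical counts, for illustration only.
sens, spec = sensitivity_specificity(true_pos=45, false_neg=5,
                                     true_neg=180, false_pos=20)
print(f"sensitivity = {sens:.2f}, specificity = {spec:.2f}")
```

A test with few false negatives drives the first ratio towards 1, and a test with few false positives drives the second ratio towards 1.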

Relationship between exposure, internal dose and effects

The study of the concentration of a substance in the working environment and the simultaneous determination of the indicators of dose and effect in exposed subjects allows information to be obtained on the relationship between occupational exposure and the concentration of the substance in biological samples, and between the latter and the early effects of exposure.

Knowledge of the relationships between the dose of a substance and the effect it produces is an essential requirement if a programme of biological monitoring is to be put into effect. The evaluation of this dose-effect relationship is based on the analysis of the degree of association existing between the indicator of dose and the indicator of effect and on the study of the quantitative variations of the indicator of effect with every variation of the indicator of dose. (See also the chapter Toxicology for further discussion of dose-related relationships.)

With the study of the dose-effect relationship it is possible to identify the concentration of the toxic substance at which the indicator of effect exceeds the values currently considered not harmful. Furthermore, in this way it may also be possible to examine what the no-effect level might be.

Since not all the individuals of a group react in the same manner, it is necessary to examine the dose-response relationship, in other words, to study how the group responds to exposure by evaluating the appearance of the effect compared to the internal dose. The term response denotes the percentage of subjects in the group who show a specific quantitative variation of an effect indicator at each dose level.
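The definition of response given above can be illustrated with a short Python sketch. It groups subjects by internal dose and reports, for each dose group, the percentage whose effect indicator exceeds a chosen value. The dose groups, the threshold and the data are hypothetical and are assumptions made purely for illustration.

```python
def response_by_dose(subjects, dose_bins, effect_threshold):
    """Percentage of subjects in each dose group whose effect indicator
    exceeds effect_threshold.

    subjects  : list of (internal_dose, effect_indicator) pairs
    dose_bins : list of (low, high) intervals, low inclusive, high exclusive
    Returns a dict mapping each interval to a percentage, or None when the
    interval contains no subjects.
    """
    result = {}
    for low, high in dose_bins:
        effects = [effect for dose, effect in subjects if low <= dose < high]
        if not effects:
            result[(low, high)] = None
            continue
        responders = sum(1 for effect in effects if effect > effect_threshold)
        result[(low, high)] = 100.0 * responders / len(effects)
    return result


# Hypothetical (internal dose, effect indicator) pairs in arbitrary units.
subjects = [(12, 0.8), (18, 1.1), (25, 1.4), (28, 0.9),
            (35, 1.6), (38, 1.9), (15, 0.7), (33, 1.2)]
bins = [(10, 20), (20, 30), (30, 40)]
rates = response_by_dose(subjects, bins, effect_threshold=1.0)
for interval, percent in rates.items():
    print(interval, percent)
```

The dose-effect relationship looks at how the effect indicator itself changes with dose, whereas this calculation describes how the proportion of affected subjects in the group changes with dose.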

Practical Applications of Biological Monitoring

The practical application of a biological monitoring programme requires information on (1) the behaviour of the indicators used in relation to exposure, especially those relating to degree, continuity and duration of exposure, (2) the time interval between end of exposure and measurement of the indicators, and (3) all physiological and pathological factors other than exposure that can alter the indicator levels.

In the following articles the behaviour of a number of biological indicators of dose and effect that are used for monitoring occupational exposure to substances widely used in industry will be presented. The practical usefulness and limits will be assessed for each substance, with particular emphasis on time of sampling and interfering factors. Such considerations will be helpful in establishing criteria for selecting a biological test.

Time of sampling

In selecting the time of sampling, the different kinetic aspects of the chemical must be kept in mind; in particular it is essential to know how the substance is absorbed via the lung, the gastrointestinal tract and the skin, subsequently distributed to the different compartments of the body, biotransformed, and finally eliminated. It is also important to know whether the chemical may accumulate in the body.

With respect to exposure to organic substances, the collection time of biological samples becomes all the more important in view of the different velocity of the metabolic processes involved and consequently the more or less rapid excretion of the absorbed dose.
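The influence of elimination rate on the choice of sampling time can be sketched numerically. The Python fragment below assumes simple first-order elimination from a single compartment, which is a simplification not stated in the text, and the half-lives shown are hypothetical. It estimates the fraction of an indicator that remains at a given time after the end of exposure.

```python
def fraction_remaining(hours_since_exposure, half_life_hours):
    """Fraction of the indicator still present, assuming first-order
    (single-compartment) elimination. This model is an assumption made
    for illustration; real substances often show several elimination phases.
    """
    if half_life_hours <= 0:
        raise ValueError("half-life must be positive")
    return 0.5 ** (hours_since_exposure / half_life_hours)


# Hypothetical half-lives: a rapidly excreted compound, an intermediate
# one, and one that persists for about a month.
for half_life in (4, 16, 24 * 30):
    remaining = fraction_remaining(16, half_life)
    print(f"half-life {half_life:>4} h: {remaining:.1%} left 16 h after exposure")
```

Under these assumptions, a sample taken 16 hours after exposure retains only a small fraction of a rapidly excreted compound, while for a slowly eliminated one the exact time of sampling matters far less.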

Interfering Factors

Correct use of biological indicators requires a thorough knowledge of those factors which, although independent of exposure, may nevertheless affect the biological indicator levels. The following are the most important types of interfering factors (Alessio, Berlin and Foà 1987).

Physiological factors including diet, sex and age, for example, can affect results. Consumption of fish and crustaceans may increase the levels of urinary arsenic and blood mercury. In female subjects with the same lead blood levels as males, the erythrocyte protoporphyrin values are significantly higher compared to those of male subjects. The levels of urinary cadmium increase with age.

Among the personal habits that can distort indicator levels, smoking and alcohol consumption are particularly important. Smoking may cause direct absorption of substances naturally present in tobacco leaves (e.g., cadmium), or of pollutants present in the working environment that have been deposited on the cigarettes (e.g., lead), or of combustion products (e.g., carbon monoxide).

Alcohol consumption may influence biological indicator levels, since substances such as lead are naturally present in alcoholic beverages. Heavy drinkers, for example, show higher blood lead levels than control subjects. Ingestion of alcohol can interfere with the biotransformation and elimination of toxic industrial compounds: with a single dose, alcohol can inhibit the metabolism of many solvents, for example, trichloroethylene, xylene, styrene and toluene, because they compete with ethyl alcohol for the enzymes that are essential for the breakdown of both ethanol and solvents. Regular alcohol ingestion can also affect the metabolism of solvents in a totally different manner by accelerating solvent metabolism, presumably due to induction of the microsomal oxidizing system. Since ethanol is the most important substance capable of inducing metabolic interference, it is advisable to determine indicators of exposure for solvents only on days when alcohol has not been consumed.

Less information is available on the possible effects of drugs on the levels of biological indicators. It has been demonstrated that aspirin can interfere with the biological transformation of xylene to methylhippuric acid, and phenylsalicylate, a drug widely used as an analgesic, can significantly increase the levels of urinary phenols. The consumption of aluminium-based antacid preparations can give rise to increased levels of aluminium in plasma and urine.

Marked differences have been observed in different ethnic groups in the metabolism of widely used solvents such as toluene, xylene, trichloroethylene, tetrachloroethylene, and methylchloroform.

Acquired pathological states can influence the levels of biological indicators. The critical organ can behave anomalously with respect to biological monitoring tests because of the specific action of the toxic agent as well as for other reasons. An example of situations of the first type is the behaviour of urinary cadmium levels: when tubular disease due to cadmium sets in, urinary excretion increases markedly and the levels of the test no longer reflect the degree of exposure. An example of the second type of situation is the increase in erythrocyte protoporphyrin levels observed in iron-deficient subjects who show no abnormal lead absorption.

Physiological changes in the biological media—urine, for example—on which determinations of the biological indicators are based, can influence the test values. For practical purposes, only spot urinary samples can be obtained from individuals during work, and the varying density of these samples means that the levels of the indicator can fluctuate widely in the course of a single day.

In order to overcome this difficulty, it is advisable to eliminate over-diluted or over-concentrated samples according to selected specific gravity or creatinine values. In particular, urine with a specific gravity below 1.010 or higher than 1.030 or with a creatinine concentration lower than 0.5 g/l or greater than 3.0 g/l should be discarded. Several authors also suggest adjusting the values of the indicators according to specific gravity or expressing the values according to urinary creatinine content.
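The acceptance criteria and the creatinine adjustment described above can be expressed as a short Python sketch. Specific gravity is written here as a dimensionless ratio close to 1, the function names are hypothetical, and the sample values are invented for illustration.

```python
# Acceptance limits taken from the text; specific gravity is expressed
# as a dimensionless ratio and creatinine in g/l.
SG_MIN, SG_MAX = 1.010, 1.030
CREATININE_MIN, CREATININE_MAX = 0.5, 3.0


def is_acceptable(specific_gravity, creatinine_g_per_l):
    """True if a spot urine sample is neither over-diluted nor
    over-concentrated by both criteria."""
    return (SG_MIN <= specific_gravity <= SG_MAX
            and CREATININE_MIN <= creatinine_g_per_l <= CREATININE_MAX)


def creatinine_adjusted(indicator_ug_per_l, creatinine_g_per_l):
    """Express an indicator as micrograms per gram of creatinine."""
    return indicator_ug_per_l / creatinine_g_per_l


# Hypothetical samples: (id, specific gravity, creatinine g/l, indicator ug/l)
samples = [
    ("A", 1.005, 0.4, 10.0),   # over-diluted
    ("B", 1.020, 2.0, 8.0),    # acceptable
    ("C", 1.032, 3.4, 20.0),   # over-concentrated
]

adjusted = {
    sample_id: creatinine_adjusted(indicator, creatinine)
    for sample_id, sg, creatinine, indicator in samples
    if is_acceptable(sg, creatinine)
}
print(adjusted)
```

In this sketch a sample is kept only if it passes both criteria; a laboratory that measures only one of the two quantities would apply the corresponding check alone.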

Pathological changes in the biological media can also considerably influence the values of the biological indicators. For example, in anaemic subjects exposed to metals (mercury, cadmium, lead, etc.) the blood levels of the metal may be lower than would be expected on the basis of exposure; this is due to the low level of red blood cells that transport the toxic metal in the blood circulation.

Therefore, when determinations of toxic substances or metabolites bound to red blood cells are made on whole blood, it is always advisable to determine the haematocrit, which gives a measure of the percentage of blood cells in whole blood.

Multiple exposure to toxic substances present in the workplace

In the case of combined exposure to more than one toxic substance present at the workplace, metabolic interferences may occur that can alter the behaviour of the biological indicators and thus create serious problems in interpretation. In human studies, interferences have been demonstrated, for example, in combined exposure to toluene and xylene, xylene and ethylbenzene, toluene and benzene, hexane and methyl ethyl ketone, tetrachloroethylene and trichloroethylene.

In particular, it should be noted that when biotransformation of a solvent is inhibited, the urinary excretion of its metabolite is reduced (possible underestimation of risk) whereas the levels of the solvent in blood and expired air increase (possible overestimation of risk).

Thus, in situations in which it is possible to measure simultaneously the substances and their metabolites in order to interpret the degree of inhibitory interference, it would be useful to check whether the levels of the urinary metabolites are lower than expected and at the same time whether the concentration of the solvents in blood and/or expired air is higher.
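The check described in this paragraph amounts to a simple two-sided comparison, sketched below in Python. The "expected" values are hypothetical: they stand for the levels that would be anticipated from exposure to the single substance alone, and how they are derived is outside the scope of this illustration.

```python
def suggests_inhibition(metabolite_observed, metabolite_expected,
                        solvent_observed, solvent_expected):
    """True when the pattern is consistent with inhibited biotransformation:
    the urinary metabolite is lower than expected AND the parent solvent in
    blood or expired air is higher than expected. Expected values are
    assumptions supplied by the user.
    """
    return (metabolite_observed < metabolite_expected
            and solvent_observed > solvent_expected)


# Hypothetical figures in arbitrary units.
flag = suggests_inhibition(metabolite_observed=0.6, metabolite_expected=1.0,
                           solvent_observed=1.5, solvent_expected=1.0)
print("pattern consistent with inhibition:", flag)
```

Requiring both conditions together reflects the point made above that either measurement taken alone could lead to an under- or overestimate of risk.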

Metabolic interferences have been described for exposures where the single substances are present in levels close to and sometimes below the currently accepted limit values. Interferences, however, do not usually occur when exposure to each substance present in the workplace is low.

Practical Use of Biological Indicators

Biological indicators can be used for various purposes in occupational health practice, in particular for (1) periodic control of individual workers, (2) analysis of the exposure of a group of workers, and (3) epidemiological assessments. The tests used should possess the features of precision, accuracy, good sensitivity, and specificity in order to minimize the possible number of false classifications.

Reference values and reference groups

A reference value is the level of a biological indicator in the general population not occupationally exposed to the toxic substance under study. It is necessary to refer to these values in order to compare the data obtained through biological monitoring programmes in a population which is presumed to be exposed. Reference values should not be confused with limit values, which generally are the legal limits or guidelines for occupational and environmental exposure (Alessio et al. 1992).

When it is necessary to compare the results of group analyses, the distribution of the values in the reference group and in the group under study must be known because only then can a statistical comparison be made. In these cases, it is essential to attempt to match the general population (reference group) with the exposed group for characteristics such as sex, age, lifestyle and eating habits.

To obtain reliable reference values one must make sure that the subjects making up the reference group have never been exposed to the toxic substances, either occupationally or due to particular conditions of environmental pollution.

In assessing exposure to toxic substances one must be careful not to include subjects who, although not directly exposed to the toxic substance in question, work in the same workplace, since if these subjects are, in fact, indirectly exposed, the exposure of the group may consequently be underestimated.

Another practice to avoid, although it is still widespread, is the use for reference purposes of values reported in the literature that are derived from case lists from other countries and may often have been collected in regions where different environmental pollution situations exist.

Periodic monitoring of individual workers

Periodic monitoring of individual workers is mandatory when the levels of the toxic substance in the atmosphere of the working environment approach the limit value. Where possible, it is advisable to simultaneously check an indicator of exposure and an indicator of effect. The data thus obtained should be compared with the reference values and the limit values suggested for the substance under study (ACGIH 1993).

Analysis of a group of workers

Analysis of a group becomes mandatory when the results of the biological indicators used can be markedly influenced by factors independent of exposure (diet, concentration or dilution of urine, etc.) and for which a wide range of “normal” values exists.

In order to ensure that the group study will furnish useful results, the group must be sufficiently numerous and homogeneous as regards exposure, sex, and, in the case of some toxic agents, work seniority. The more the exposure levels are constant over time, the more reliable the data will be. An investigation carried out in a workplace where the workers frequently change department or job will have little value. For a correct assessment of a group study it is not sufficient to express the data only as mean values and range. The frequency distribution of the values of the biological indicator in question must also be taken into account.
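The reason why mean and range alone are insufficient can be shown with a small Python sketch. The values are hypothetical and the bin width is an arbitrary choice for illustration; the function name is likewise an assumption.

```python
import statistics
from collections import Counter


def summarize(values, bin_width):
    """Return the mean, range, median and a simple frequency distribution
    (count of values per bin, keyed by the lower edge of each bin)."""
    ordered = sorted(values)
    histogram = Counter(int(v // bin_width) * bin_width for v in ordered)
    return {
        "mean": statistics.mean(ordered),
        "min": ordered[0],
        "max": ordered[-1],
        "median": statistics.median(ordered),
        "histogram": dict(sorted(histogram.items())),
    }


# Hypothetical indicator levels for a group of ten workers.
levels = [12, 14, 15, 15, 16, 18, 19, 21, 22, 48]
summary = summarize(levels, bin_width=10)
print(summary)
```

With these invented data the mean and range suggest a widely spread group, whereas the frequency distribution shows that most values cluster in the lowest bin and a single subject accounts for the upper end of the range.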

Epidemiological assessments

Data obtained from biological monitoring of groups of workers can also be used in cross-sectional or prospective epidemiological studies.

Cross-sectional studies can be used to compare the situations existing in different departments of the factory or in different industries in order to set up risk maps for manufacturing processes. A difficulty that may be encountered in this type of application depends on the fact that inter-laboratory quality controls are not yet sufficiently widespread; thus it cannot be guaranteed that different laboratories will produce comparable results.

Prospective studies serve to assess the behaviour over time of the exposure levels so as to check, for example, the efficacy of environmental improvements or to correlate the behaviour of biological indicators over the years with the health status of the subjects being monitored. The results of such long-term studies are very useful in solving problems involving changes over time. At present, biological monitoring is mainly used as a suitable procedure for assessing whether current exposure is judged to be “safe,” but it is as yet not valid for assessing situations over time. A given level of exposure considered safe today may no longer be regarded as such at some point in the future.

Ethical Aspects

Some ethical considerations arise in connection with the use of biological monitoring as a tool to assess potential toxicity. One goal of such monitoring is to assemble enough information to decide what level of any given effect constitutes an undesirable effect; in the absence of sufficient data, any perturbation will be considered undesirable. The regulatory and legal implications of this type of information need to be evaluated. Therefore, we should seek societal discussion and consensus as to the ways in which biological indicators should best be used. In other words, education is required of workers, employers, communities and regulatory authorities as to the meaning of the results obtained by biological monitoring so that no one is either unduly alarmed or complacent.

There must be appropriate communication with the individual upon whom the test has been performed concerning the results and their interpretation. Further, whether or not the use of some indicators is experimental should be clearly conveyed to all participants.

The International Code of Ethics for Occupational Health Professionals, issued by the International Commission on Occupational Health in 1992, stated that “biological tests and other investigations must be chosen from the point of view of their validity for protection of the health of the worker concerned, with due regard to their sensitivity, their specificity and their predictive value”. Use must not be made of tests “which are not reliable or which do not have a sufficient predictive value in relation to the requirements of the work assignment”. (See the chapter Ethical Issues for further discussion and the text of the Code.)

Trends in Regulation and Application

Biological monitoring can be carried out for only a limited number of environmental pollutants on account of the limited availability of appropriate reference data. This imposes important limitations on the use of biological monitoring in evaluating exposure.

The World Health Organization (WHO), for example, has proposed health-based reference values for lead, mercury, and cadmium only. These values are defined as levels in blood and urine not linked to any detectable adverse effect. The American Conference of Governmental Industrial Hygienists (ACGIH) has established biological exposure indices (BEIs) for about 26 compounds; BEIs are defined as “values for determinants which are indicators of the degree of integrated exposure to industrial chemicals” (ACGIH 1995).

Quality Assurance

Decisions affecting the health, well-being, and employability of individual workers or an employer’s approach to health and safety issues must be based on data of good quality. This is especially so in the case of biological monitoring data and it is therefore the responsibility of any laboratory undertaking analytical work on biological specimens from working populations to ensure the reliability, accuracy and precision of its results. This responsibility extends from providing suitable methods and guidance for specimen collection to ensuring that the results are returned to the health professional responsible for the care of the individual worker in a suitable form. All these activities are covered by the expression “quality assurance”.

The central activity in a quality assurance programme is the control and maintenance of analytical accuracy and precision. Biological monitoring laboratories have often developed in a clinical environment and have taken quality assurance techniques and philosophies from the discipline of clinical chemistry. Indeed, measurements of toxic chemicals and biological effect indicators in blood and urine are essentially no different from those made in clinical chemistry and in clinical pharmacology service laboratories found in any major hospital.

A quality assurance programme for an individual analyst starts with the selection and establishment of a suitable method. The next stage is the development of an internal quality control procedure to maintain precision; the laboratory then needs to satisfy itself of the accuracy of the analysis, and this may well involve external quality assessment (see below). It is important to recognize, however, that quality assurance includes more than these aspects of analytical quality control.

Method Selection

There are several texts presenting analytical methods in biological monitoring. Although these give useful guidance, much needs to be done by the individual analyst before data of suitable quality can be produced. Central to any quality assurance programme is the production of a laboratory protocol that must specify in detail those parts of the method which have the most bearing on its reliability, accuracy, and precision. Indeed, national accreditation of laboratories in clinical chemistry, toxicology, and forensic science is usually dependent on the quality of the laboratory’s protocols. Development of a suitable protocol is usually a time-consuming process. If a laboratory wishes to establish a new method, it is often most cost-effective to obtain from an existing laboratory a protocol that has proved its performance, for example, through validation in an established international quality assurance programme. Should the new laboratory be committed to a specific analytical technique, for example gas chromatography rather than high-performance liquid chromatography, it is often possible to identify a laboratory that has a good performance record and that uses the same analytical approach. Laboratories can often be identified through journal articles or through organizers of various national quality assessment schemes.

Internal Quality Control

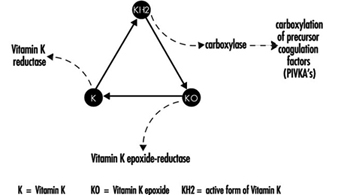

The quality of analytical results depends on the precision of the method achieved in practice, and this in turn depends on close adherence to a defined protocol. Precision is best assessed by the inclusion of “quality control samples” at regular intervals during an analytical run. For example, for control of blood lead analyses, quality control samples are introduced into the run after every six or eight actual worker samples. More stable analytical methods can be monitored with fewer quality control samples per run. The quality control samples for blood lead analysis are prepared from 500 ml of blood (human or bovine) to which inorganic lead is added; individual aliquots are stored at low temperature (Bullock, Smith and Whitehead 1986). Before each new batch is put into use, 20 aliquots are analysed in separate runs on different occasions to establish the mean result for this batch of quality control samples, as well as its standard deviation (Whitehead 1977). These two figures are used to set up a Shewhart control chart (figure 27.2). The results from the analysis of the quality control samples included in subsequent runs are plotted on the chart. The analyst then uses rules for acceptance or rejection of an analytical run depending on whether the results of these samples fall within two or three standard deviations (SD) of the mean. A sequence of rules, validated by computer modelling, has been suggested by Westgard et al. (1981) for application to control samples. This approach to quality control is described in textbooks of clinical chemistry and a simple approach to the introduction of quality assurance is set forth in Whitehead (1977). It must be emphasized that these techniques of quality control depend on the preparation and analysis of quality control samples separately from the calibration samples that are used on each analytical occasion.

Figure 27.2 Shewhart control chart for quality control samples
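The control chart procedure described above can be sketched in a few lines of Python. This is only an illustrative sketch: the function names are hypothetical, and the simple decision rule used here (warning beyond 2 SD, rejection beyond 3 SD) is an assumed simplification, not the full rule sequence of Westgard et al. (1981).

```python
from statistics import mean, stdev


def control_limits(baseline_results):
    """Return (mean, SD) for a new batch of quality control samples.

    baseline_results: results of the aliquots analysed in separate runs
    before the batch is put into use (the text describes 20 aliquots).
    """
    if len(baseline_results) < 2:
        raise ValueError("at least two baseline results are needed")
    return mean(baseline_results), stdev(baseline_results)


def classify_qc_result(value, centre, sd):
    """Classify one quality control result against the chart limits.

    Assumed simplified rule: within 2 SD -> accept,
    between 2 and 3 SD -> warning, beyond 3 SD -> reject.
    """
    deviation = abs(value - centre)
    if deviation > 3 * sd:
        return "reject"
    if deviation > 2 * sd:
        return "warning"
    return "accept"
```

In use, the analyst would call `control_limits` once per batch of quality control material and then pass each subsequent quality control result to `classify_qc_result`; a laboratory applying multi-rule schemes would replace the second function with its own validated rules.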

This approach can be adapted to a range of biological monitoring or biological effect monitoring assays. Batches of blood or urine samples can be prepared by addition of either the toxic material or the metabolite that is to be measured. Similarly, blood, serum, plasma, or urine can be aliquotted and stored deep-frozen or freeze-dried for measurement of enzymes or proteins. However, care has to be taken to avoid infective risk to the analyst from samples based on human blood.

Careful adherence to a well-defined protocol and to rules for acceptability is an essential first stage in a quality assurance programme. Any laboratory must be prepared to discuss its quality control and quality assessment performance with the health professionals using it and to investigate surprising or unusual findings.

External Quality Assessment

Once a laboratory has established that it can produce results with adequate precision, the next stage is to confirm the accuracy (“trueness”) of the measured values, that is, the relationship of the measurements made to the actual amount present. This is a difficult exercise for a laboratory to do on its own but can be achieved by taking part in a regular external quality assessment scheme. These have been an essential part of clinical chemistry practice for some time but have not been widely available for biological monitoring. The exception is blood lead analysis, where schemes have been available since the 1970s (e.g., Bullock, Smith and Whitehead 1986). Comparison of analytical results with those reported from other laboratories analysing samples from the same batch allows assessment of a laboratory’s performance compared with others, as well as a measure of its accuracy. Several national and international quality assessment schemes are available. Many of these schemes welcome new laboratories, as the validity of the mean of the results of an analyte from all the participating laboratories (taken as a measure of the actual concentration) increases with the number of participants. Schemes with many participants are also more able to analyse laboratory performance according to analytical method and thus advise on alternatives to methods with poor performance characteristics. In some countries, participation in such a scheme is an essential part of laboratory accreditation. Guidelines for external quality assessment scheme design and operation have been published by the WHO (1981).

In the absence of established external quality assessment schemes, accuracy may be checked using certified reference materials, which are available on a commercial basis for a limited range of analytes. The advantages of samples circulated by external quality assessment schemes are that (1) the analyst does not have foreknowledge of the result, (2) a range of concentrations is presented, and (3) as definitive analytical methods do not have to be employed, the materials involved are cheaper.

Pre-analytical Quality Control

Effort spent in attaining good laboratory accuracy and precision is wasted if the samples presented to the laboratory have not been taken at the correct time, if they have suffered contamination, have deteriorated during transport, or have been inadequately or incorrectly labelled. It is also bad professional practice to submit individuals to invasive sampling without taking adequate care of the sampled materials. Although sampling is often not under the direct control of the laboratory analyst, a full quality programme of biological monitoring must take these factors into account and the laboratory should ensure that syringes and sample containers provided are free from contamination, with clear instructions about sampling technique and sample storage and transport. The importance of the correct sampling time within the shift or working week and its dependence on the toxicokinetics of the sampled material are now recognized (ACGIH 1993; HSE 1992), and this information should be made available to the health professionals responsible for collecting the samples.

Post-analytical Quality Control

High-quality analytical results may be of little use to the individual or health professional if they are not communicated to the professional in an interpretable form and at the right time. Each biological monitoring laboratory should develop reporting procedures for alerting the health care professional submitting the samples to abnormal, unexpected, or puzzling results in time to allow appropriate action to be taken. Interpretation of laboratory results, especially changes in concentration between successive samples, often depends on knowledge of the precision of the assay. As part of total quality management from sample collection to return of results, health professionals should be given information concerning the biological monitoring laboratory’s precision and accuracy, as well as reference ranges and advisory and statutory limits, in order to help them in interpreting the results.
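One way to see why the interpretation of a change between successive samples depends on assay precision is the following sketch. It assumes that the two results carry independent, normally distributed analytical errors with the same standard deviation, so that their difference has a standard deviation of √2 times the assay SD. It deliberately ignores biological variation within the individual, and the function names are hypothetical.

```python
import math


def analytical_critical_difference(assay_sd, z=1.96):
    """Smallest difference between two results unlikely to be due to
    analytical imprecision alone (about 95% level for z = 1.96).

    Assumes independent errors with the same SD for both measurements.
    """
    return z * math.sqrt(2) * assay_sd


def change_exceeds_imprecision(first, second, assay_sd, z=1.96):
    """True if the change between two successive results is larger than
    the critical difference attributable to analytical imprecision."""
    return abs(second - first) > analytical_critical_difference(assay_sd, z)
```

With an assay SD of 1 unit, for example, a change of 2 units between successive samples would not be distinguishable from analytical noise under these assumptions, whereas a change of 3 units would be.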

Metals and Organometallic Compounds

Toxic metals and organometallic compounds such as aluminium, antimony, inorganic arsenic, beryllium, cadmium, chromium, cobalt, lead, alkyl lead, metallic mercury and its salts, organic mercury compounds, nickel, selenium and vanadium have all been recognized for some time as posing potential health risks to exposed persons. In some cases, epidemiological studies of occupationally exposed workers have examined the relationship between internal dose and resulting effect/response, thus permitting the proposal of health-based biological limit values (see table 1).

Table 1. Metals: Reference values and biological limit values proposed by the American Conference of Governmental Industrial Hygienists (ACGIH), Deutsche Forschungsgemeinschaft (DFG), and Lauwerys and Hoet (L and H)

| Metal | Sample | Reference values¹* | ACGIH (BEI) limit² | DFG (BAT) limit³ | L and H limit (TMPC)⁴ |
|---|---|---|---|---|---|
| Aluminium | Serum/plasma | <1 μg/100 ml | | | |
| | Urine | <30 μg/g | | 200 μg/l (end of shift) | 150 μg/g (end of shift) |
| Antimony | Urine | <1 μg/g | | | 35 μg/g (end of shift) |
| Arsenic | Urine (sum of inorganic arsenic and methylated metabolites) | <10 μg/g | 50 μg/g (end of workweek) | | 50 μg/g (if TWA: 0.05 mg/m3); 30 μg/g (if TWA: 0.01 mg/m3) (end of shift) |
| Beryllium | Urine | <2 μg/g | | | |
| Cadmium | Blood | <0.5 μg/100 ml | 0.5 μg/100 ml | 1.5 μg/100 ml | 0.5 μg/100 ml |
| | Urine | <2 μg/g | 5 μg/g | 15 μg/l | 5 μg/g |
| Chromium (soluble compounds) | Serum/plasma | <0.05 μg/100 ml | | | |
| | Urine | <5 μg/g | 30 μg/g (end of shift, end of workweek); 10 μg/g (increase during shift) | | 30 μg/g (end of shift) |
| Cobalt | Serum/plasma | <0.05 μg/100 ml | | | |
| | Blood | <0.2 μg/100 ml | 0.1 μg/100 ml (end of shift, end of workweek) | 0.5 μg/100 ml (EKA)** | |
| | Urine | <2 μg/g | 15 μg/l (end of shift, end of workweek) | 60 μg/l (EKA)** | 30 μg/g (end of shift, end of workweek) |
| Lead | Blood (lead) | <25 μg/100 ml | 30 μg/100 ml (not critical) | female <45 years: 30 μg/100 ml; male: 70 μg/100 ml | 40 μg/100 ml |
| | ZPP in blood | <40 μg/100 ml blood or <2.5 μg/g Hb | | | 40 μg/100 ml blood or 3 μg/g Hb |
| | Urine (lead) | <50 μg/g | | | 50 μg/g |
| | ALA urine | <4.5 mg/g | | female <45 years: 6 mg/l; male: 15 mg/l | 5 mg/g |
| Manganese | Blood | <1 μg/100 ml | | | |
| | Urine | <3 μg/g | | | |
| Mercury inorganic | Blood | <1 μg/100 ml | 1.5 μg/100 ml (end of shift, end of workweek) | 5 μg/100 ml | 2 μg/100 ml (end of shift) |
| | Urine | <5 μg/g | 35 μg/g (preshift) | 200 μg/l | 50 μg/g (end of shift) |
| Nickel (soluble compounds) | Serum/plasma | <0.05 μg/100 ml | | | |
| | Urine | <2 μg/g | | 45 μg/l (EKA)** | 30 μg/g |
| Selenium | Serum/plasma | <15 μg/100 ml | | | |
| | Urine | <25 μg/g | | | |
| Vanadium | Serum/plasma | <0.2 μg/100 ml | | | |
| | Blood | <0.1 μg/100 ml | | | |
| | Urine | <1 μg/g | | 70 μg/g creatinine | 50 μg/g |

* Urine values are per gram of creatinine.

** EKA = Exposure equivalents for carcinogenic materials.

1 Taken with some modifications from Lauwerys and Hoet 1993.

2 From ACGIH 1996-97.

3 From DFG 1996.

4 Tentative maximum permissible concentrations (TMPCs) taken from Lauwerys and Hoet 1993.

One problem in seeking precise and accurate measurements of metals in biological materials is that the metallic substances of interest are often present in the media at very low levels. When biological monitoring consists of sampling and analysing urine, as is often the case, it is usually performed on “spot” samples; correction of the results for the dilution of urine is thus usually advisable. Expression of the results per gram of creatinine is the method of standardization most frequently used. Analyses performed on urine samples that are too dilute or too concentrated are not reliable and should be repeated.
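The creatinine correction described above amounts to a simple division, as the following sketch shows. The function name is hypothetical, and the acceptance window for urinary creatinine (0.3 to 3.0 g/l) is an assumption chosen for illustration; the text does not specify limits, and a laboratory would substitute its own criteria for samples that are too dilute or too concentrated.

```python
def creatinine_corrected(analyte_ug_per_l, creatinine_g_per_l,
                         min_creatinine=0.3, max_creatinine=3.0):
    """Express a spot-urine result in micrograms per gram of creatinine.

    Returns None when the creatinine concentration falls outside the
    assumed acceptance window, signalling that the sample is too dilute
    or too concentrated and the analysis should be repeated.
    """
    if creatinine_g_per_l <= 0:
        raise ValueError("creatinine concentration must be positive")
    if not (min_creatinine <= creatinine_g_per_l <= max_creatinine):
        return None  # unreliable sample: repeat on a new specimen
    return analyte_ug_per_l / creatinine_g_per_l
```

For instance, a urinary metal concentration of 45 μg/l in a sample containing 1.5 g/l creatinine corresponds to 30 μg/g creatinine.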

Aluminium

In industry, workers may be exposed to inorganic aluminium compounds by inhalation and possibly also by ingestion of dust containing aluminium. Aluminium is poorly absorbed by the oral route, but its absorption is increased by simultaneous intake of citrates. The rate of absorption of aluminium deposited in the lung is unknown; the bioavailability is probably dependent on the physicochemical characteristics of the particle. Urine is the main route of excretion of the absorbed aluminium. The concentration of aluminium in serum and in urine is determined by both the intensity of a recent exposure and the aluminium body burden. In persons non-occupationally exposed, aluminium concentration in serum is usually below 1 μg/100 ml and in urine rarely exceeds 30 μg/g creatinine. In subjects with normal renal function, urinary excretion of aluminium is a more sensitive indicator of aluminium exposure than its concentration in serum/plasma.

Data on welders suggest that the kinetics of aluminium excretion in urine involves a two-step mechanism, the first step having a biological half-life of about eight hours. In workers who have been exposed for several years, some accumulation of the metal in the body does occur, and aluminium concentrations in serum and in urine are also influenced by the aluminium body burden. Aluminium is stored in several compartments of the body and excreted from these compartments at different rates over many years. High accumulation of aluminium in the body (bone, liver, brain) has also been found in patients suffering from renal insufficiency. Patients undergoing dialysis are at risk of bone toxicity and/or encephalopathy when their serum aluminium concentration chronically exceeds 20 μg/100 ml, but it is possible to detect signs of toxicity at even lower concentrations. The Commission of the European Communities has recommended that, in order to prevent aluminium toxicity, the concentration of aluminium in plasma should never exceed 20 μg/100 ml; a level above 10 μg/100 ml should lead to an increased monitoring frequency and health surveillance, and a concentration exceeding 6 μg/100 ml should be considered as evidence of an excessive build-up of the aluminium body burden.
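The three plasma levels quoted in the recommendation above can be read as a tiered scheme. The sketch below encodes that reading; the function name and the wording of the returned categories are illustrative assumptions, and only the three thresholds (6, 10 and 20 μg/100 ml) come from the text.

```python
def aluminium_plasma_category(conc_ug_per_100ml):
    """Map a plasma aluminium concentration (in micrograms per 100 ml)
    to the tier it falls into, using the thresholds quoted in the text."""
    if conc_ug_per_100ml < 0:
        raise ValueError("concentration cannot be negative")
    if conc_ug_per_100ml > 20:
        return "above the level that should never be exceeded"
    if conc_ug_per_100ml > 10:
        return "increase monitoring frequency and health surveillance"
    if conc_ug_per_100ml > 6:
        return "evidence of excessive build-up of the body burden"
    return "below the levels named in the recommendation"
```

A result of 8 μg/100 ml, for example, falls in the tier indicating an excessive build-up of the body burden, while 12 μg/100 ml falls in the tier calling for more frequent monitoring.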

Antimony

Inorganic antimony can enter the organism by ingestion or inhalation, but the rate of absorption is unknown. Absorbed pentavalent compounds are primarily excreted with urine and trivalent compounds via faeces. Retention of some antimony compounds is possible after long-term exposure. Normal concentrations of antimony in serum and urine are probably below 0.1 μg/100 ml and 1 μg/g creatinine, respectively.

A preliminary study on workers exposed to pentavalent antimony indicates that a time-weighted average exposure to 0.5 mg/m3 would lead to an increase in urinary antimony concentration of 35 μg/g creatinine during the shift.

Inorganic Arsenic

Inorganic arsenic can enter the organism via the gastrointestinal and respiratory tracts. The absorbed arsenic is mainly eliminated through the kidney either unchanged or after methylation. Inorganic arsenic is also excreted in the bile as a glutathione complex.

Following a single oral exposure to a low dose of arsenate, 25 and 45% of the administered dose is excreted in urine within one and four days, respectively.

Following exposure to inorganic trivalent or pentavalent arsenic, the urinary excretion consists of 10 to 20% inorganic arsenic, 10 to 20% monomethylarsonic acid, and 60 to 80% cacodylic acid. Following occupational exposure to inorganic arsenic, the proportion of the arsenical species in urine depends on the time of sampling.

The organoarsenicals present in marine organisms are also easily absorbed by the gastrointestinal tract but are excreted for the most part unchanged.

Long-term toxic effects of arsenic (including the toxic effects on genes) result mainly from exposure to inorganic arsenic. Therefore, biological monitoring aims at assessing exposure to inorganic arsenic compounds. For this purpose, the specific determination of inorganic arsenic (Asi), monomethylarsonic acid (MMA), and cacodylic acid (DMA) in urine is the method of choice. However, since seafood consumption might still influence the excretion rate of DMA, the workers being tested should refrain from eating seafood during the 48 hours prior to urine collection.

In persons non-occupationally exposed to inorganic arsenic and who have not recently consumed a marine organism, the sum of these three arsenical species does not usually exceed 10 μg/g urinary creatinine. Higher values can be found in geographical areas where the drinking water contains significant amounts of arsenic.

It has been estimated that in the absence of seafood consumption, a time-weighted average exposure to 50 and 200 μg/m3 inorganic arsenic leads to mean urinary concentrations of the sum of the metabolites (Asi, MMA, DMA) in post-shift urine samples of 54 and 88 μg/g creatinine, respectively.

In the case of exposure to less soluble inorganic arsenic compounds (e.g., gallium arsenide), the determination of arsenic in urine will reflect the amount absorbed but not the total dose delivered to the body (lung, gastrointestinal tract).

Arsenic in hair is a good indicator of the amount of inorganic arsenic absorbed during the growth period of the hair. Organic arsenic of marine origin does not appear to be taken up in hair to the same degree as inorganic arsenic. Determination of arsenic concentration along the length of the hair may provide valuable information concerning the time of exposure and the length of the exposure period. However, the determination of arsenic in hair is not recommended when the ambient air is contaminated by arsenic, as it will not be possible to distinguish between endogenous arsenic and arsenic externally deposited on the hair. Arsenic levels in hair are usually below 1 mg/kg. Arsenic in nails has the same significance as arsenic in hair.

As with urine levels, blood arsenic levels may reflect the amount of arsenic recently absorbed, but the relation between the intensity of arsenic exposure and its concentration in blood has not yet been assessed.

Beryllium

Inhalation is the primary route of beryllium uptake for occupationally exposed persons. Long-term exposure can result in the storage of appreciable amounts of beryllium in lung tissues and in the skeleton, the ultimate site of storage. Elimination of absorbed beryllium occurs mainly via urine and only to a minor degree in the faeces.

Beryllium levels can be determined in blood and urine, but at present these analyses can be used only as qualitative tests to confirm exposure to the metal, since it is not known to what extent the concentrations of beryllium in blood and urine may be influenced by recent exposure and by the amount already stored in the body. Furthermore, it is difficult to interpret the limited published data on the excretion of beryllium in exposed workers, because usually the external exposure has not been adequately characterized and the analytical methods have different sensitivities and precision. Normal urinary and serum levels of beryllium are probably below 2 μg/g creatinine and 0.03 μg/100 ml, respectively.

However, the finding of a normal concentration of beryllium in urine is not sufficient evidence to exclude the possibility of past exposure to beryllium. Indeed, an increased urinary excretion of beryllium has not always been found in workers even though they have been exposed to beryllium in the past and have consequently developed pulmonary granulomatosis, a disease characterized by multiple granulomas, that is, nodules of inflammatory tissue, found in the lungs.

Cadmium

In the occupational setting, absorption of cadmium occurs chiefly through inhalation. However, gastrointestinal absorption may significantly contribute to the internal dose of cadmium. One important characteristic of cadmium is its long biological half-life in the body, exceeding 10 years. In tissues, cadmium is mainly bound to metallothionein. In blood, it is mainly bound to red blood cells. In view of the property of cadmium to accumulate, any biological monitoring programme of population groups chronically exposed to cadmium should attempt to evaluate both the current and the integrated exposure.

By means of neutron activation, it is currently possible to carry out in vivo measurements of the amounts of cadmium accumulated in the main sites of storage, the kidneys and the liver. However, these techniques are not used routinely. So far, in the health surveillance of workers in industry or in large-scale studies on the general population, exposure to cadmium has usually been evaluated indirectly by measuring the metal in urine and blood.

The detailed kinetics of the action of cadmium in humans is not yet fully elucidated, but for practical purposes the following conclusions can be formulated regarding the significance of cadmium in blood and urine. In newly exposed workers, the levels of cadmium in blood increase progressively and after four to six months reach a concentration corresponding to the intensity of exposure. In persons with ongoing exposure to cadmium over a long period, the concentration of cadmium in the blood reflects mainly the average intake during recent months. The relative influence of the cadmium body burden on the cadmium level in the blood may be more important in persons who have accumulated a large amount of cadmium and have been removed from exposure. After cessation of exposure, the cadmium level in blood decreases relatively fast, with an initial half-time of two to three months. Depending on the body burden, the level may, however, remain higher than in control subjects. Several studies in humans and animals have indicated that the level of cadmium in urine can be interpreted as follows: in the absence of acute overexposure to cadmium, and as long as the storage capability of the kidney cortex is not exceeded or cadmium-induced nephropathy has not yet occurred, the level of cadmium in urine increases progressively with the amount of cadmium stored in the kidneys. Under such conditions, which prevail mainly in the general population and in workers moderately exposed to cadmium, there is a significant correlation between urinary cadmium and cadmium in the kidneys. If exposure to cadmium has been excessive, the cadmium-binding sites in the organism become progressively saturated and, despite continuous exposure, the cadmium concentration in the renal cortex levels off.

From this stage on, the absorbed cadmium cannot be further retained in that organ and it is rapidly excreted in the urine. The concentration of urinary cadmium is then influenced by both the body burden and the recent intake. If exposure is continued, some subjects may develop renal damage, which gives rise to a further increase of urinary cadmium as a result of the release of cadmium stored in the kidney and depressed reabsorption of circulating cadmium. However, after an episode of acute exposure, cadmium levels in urine may rapidly and briefly increase without reflecting an increase in the body burden.

Recent studies indicate that metallothionein in urine has the same biological significance as cadmium in urine. Good correlations have been observed between the urinary concentration of metallothionein and that of cadmium, independently of the intensity of exposure and the status of renal function.

The normal levels of cadmium in blood and in urine are usually below 0.5 μg/100 ml and 2 μg/g creatinine, respectively. They are higher in smokers than in nonsmokers. In workers chronically exposed to cadmium, the risk of renal impairment is negligible when urinary cadmium levels never exceed 10 μg/g creatinine. An accumulation of cadmium in the body which would lead to a urinary excretion exceeding this level should be prevented. However, some data suggest that certain renal markers (whose health significance is still unknown) may become abnormal for urinary cadmium values between 3 and 5 μg/g creatinine, so it seems reasonable to propose a lower biological limit value of 5 μg/g creatinine. For blood, a biological limit of 0.5 μg/100 ml has been proposed for long-term exposure. It is possible, however, that in the case of the general population exposed to cadmium via food or tobacco or in the elderly, who normally suffer a decline of renal function, the critical level in the renal cortex may be lower.

Chromium

The toxicity of chromium is attributable chiefly to its hexavalent compounds. The absorption of hexavalent compounds is relatively higher than the absorption of trivalent compounds. Elimination occurs mainly via urine.

In persons non-occupationally exposed to chromium, the concentration of chromium in serum and in urine usually does not exceed 0.05 μg/100 ml and 2 μg/g creatinine, respectively. Recent exposure to soluble hexavalent chromium salts (e.g., in electroplaters and stainless steel welders) can be assessed by monitoring chromium level in urine at the end of the workshift. Studies carried out by several authors suggest the following relation: a TWA exposure of 0.025 or 0.05 mg/m3 hexavalent chromium is associated with an average concentration at the end of the exposure period of 15 or 30 μg/g creatinine, respectively. This relation is valid only on a group basis. Following exposure to 0.025 mg/m3 hexavalent chromium, the lower 95% confidence limit value is approximately 5 μg/g creatinine. Another study among stainless steel welders has found that a urinary chromium concentration on the order of 40 μg/l corresponds to an average exposure to 0.1 mg/m3 chromium trioxide.
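The group-level relation quoted above (0.025 mg/m3 with 15 μg/g creatinine, 0.05 mg/m3 with 30 μg/g creatinine) can be illustrated with a small interpolation sketch. Linear interpolation between the two quoted points is an assumption made here for illustration, the function name is hypothetical, and the result is meaningful only as a group average, never as a prediction for an individual worker. Values outside the quoted range are refused rather than extrapolated.

```python
# Pairs quoted in the text: (TWA exposure to hexavalent chromium in mg/m3,
# average end-of-exposure urinary chromium in micrograms per g creatinine).
QUOTED_POINTS = ((0.025, 15.0), (0.05, 30.0))


def group_mean_urinary_chromium(twa_mg_per_m3):
    """Interpolate the average urinary chromium level for a group of
    workers between the two exposure levels quoted in the text."""
    (x0, y0), (x1, y1) = QUOTED_POINTS
    if not (x0 <= twa_mg_per_m3 <= x1):
        raise ValueError("exposure outside the range quoted in the text")
    fraction = (twa_mg_per_m3 - x0) / (x1 - x0)
    return y0 + (y1 - y0) * fraction
```

The wide spread around the group mean noted in the text (a lower 95% confidence limit of about 5 μg/g creatinine at 0.025 mg/m3) is the reason such a figure should not be applied to single results.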

Hexavalent chromium readily crosses cell membranes, but once inside the cell, it is reduced to trivalent chromium. The concentration of chromium in erythrocytes might be an indicator of the exposure intensity to hexavalent chromium during the lifetime of the red blood cells, but this does not apply to trivalent chromium.

To what extent monitoring chromium in urine is useful for health risk estimation remains to be assessed.

Cobalt

Once absorbed, by inhalation and to some extent via the oral route, cobalt (with a biological half-life of a few days) is eliminated mainly with urine. Exposure to soluble cobalt compounds leads to an increase of cobalt concentration in blood and urine.

The concentrations of cobalt in blood and in urine are influenced chiefly by recent exposure. In non-occupationally exposed subjects, urinary cobalt is usually below 2 μg/g creatinine and serum/plasma cobalt below 0.05 μg/100 ml.

For TWA exposures of 0.1 mg/m3 and 0.05 mg/m3, mean urinary levels ranging from about 30 to 75 μg/l and 30 to 40 μg/l, respectively, have been reported (using end-of-shift samples). Sampling time is important as there is a progressive increase in the urinary levels of cobalt during the workweek.

In workers exposed to cobalt oxides, cobalt salts, or cobalt metal powder in a refinery, a TWA of 0.05 mg/m3 has been found to lead to an average cobalt concentration of 33 and 46 μg/g creatinine in the urine collected at the end of the shift on Monday and Friday, respectively.

Lead

Inorganic lead, a cumulative toxin absorbed by the lungs and the gastrointestinal tract, is clearly the metal that has been most extensively studied; thus, of all the metal contaminants, the reliability of methods for assessing recent exposure or body burden by biological methods is greatest for lead.

In a steady-state exposure situation, lead in whole blood is considered to be the best indicator of the concentration of lead in soft tissues and hence of recent exposure. However, the increase of blood lead levels (Pb-B) becomes progressively smaller with increasing levels of lead exposure. When occupational exposure has been prolonged, cessation of exposure is not necessarily associated with a return of Pb-B to a pre-exposure (background) value because of the continuous release of lead from tissue depots. The normal blood and urinary lead levels are generally below 20 μg/100 ml and 50 μg/g creatinine, respectively. These levels may be influenced by the dietary habits and the place of residence of the subjects. The WHO has proposed 40 μg/100 ml as the maximal tolerable individual blood lead concentration for adult male workers, and 30 μg/100 ml for women of child-bearing age. In children, lower blood lead concentrations have been associated with adverse effects on the central nervous system. Lead level in urine increases exponentially with increasing Pb-B and under a steady-state situation is mainly a reflection of recent exposure.

The amount of lead excreted in urine after administration of a chelating agent (e.g., CaEDTA) reflects the mobilizable pool of lead. In control subjects, the amount of lead excreted in urine within 24 hours after intravenous administration of one gram of EDTA usually does not exceed 600 μg. It seems that under constant exposure, chelatable lead values reflect mainly the lead pool of blood and soft tissues, with only a small fraction derived from bones.

An x-ray fluorescence technique has been developed for measuring lead concentration in bones (phalanges, tibia, calcaneus, vertebrae), but presently the limit of detection of the technique restricts its use to occupationally exposed persons.

Determination of lead in hair has been proposed as a method of evaluating the mobilizable pool of lead. However, in occupational settings, it is difficult to distinguish between lead incorporated endogenously into hair and that simply adsorbed on its surface.

The determination of lead concentration in the circumpulpal dentine of deciduous teeth (baby teeth) has been used to estimate exposure to lead during early childhood.

Parameters reflecting the interference of lead with biological processes can also be used for assessing the intensity of exposure to lead. The biological parameters which are currently used are coproporphyrin in urine (COPRO-U), delta-aminolaevulinic acid in urine (ALA-U), erythrocyte protoporphyrin (EP, or zinc protoporphyrin), delta-aminolaevulinic acid dehydratase (ALA-D), and pyrimidine-5’-nucleotidase (P5N) in red blood cells. In steady-state situations, the changes in these parameters are positively (COPRO-U, ALA-U, EP) or negatively (ALA-D, P5N) correlated with lead blood levels. The urinary excretion of COPRO (mostly the III isomer) and ALA starts to increase when the concentration of lead in blood reaches a value of about 40 μg/100 ml. Erythrocyte protoporphyrin starts to increase significantly at levels of lead in blood of about 35 μg/100 ml in males and 25 μg/100 ml in females. After the termination of occupational exposure to lead, the erythrocyte protoporphyrin remains elevated out of proportion to current levels of lead in blood. In this case, the EP level is better correlated with the amount of chelatable lead excreted in urine than with lead in blood.

Slight iron deficiency will also cause an elevated protoporphyrin concentration in red blood cells. The red blood cell enzymes, ALA-D and P5N, are very sensitive to the inhibitory action of lead. Within the range of blood lead levels of 10 to 40 μg/100 ml, there is a close negative correlation between the activity of both enzymes and blood lead.

Alkyl Lead

In some countries, tetraethyllead and tetramethyllead are used as antiknock agents in automobile fuels. Lead in blood is not a good indicator of exposure to tetraalkyllead, whereas lead in urine seems to be useful for evaluating the risk of overexposure.

Manganese

In the occupational setting, manganese enters the body mainly through the lungs; absorption via the gastrointestinal tract is low and probably depends on a homeostatic mechanism. Manganese elimination occurs through the bile, with only small amounts excreted with urine.

The normal concentrations of manganese in urine, blood, and serum or plasma are usually less than 3 μg/g creatinine, 1 μg/100 ml, and 0.1 μg/100 ml, respectively.

It seems that, on an individual basis, neither manganese in blood nor manganese in urine is correlated with external exposure parameters.

There is apparently no direct relation between manganese concentration in biological material and the severity of chronic manganese poisoning. It is possible that, following occupational exposure to manganese, early adverse central nervous system effects might already be detected at biological levels close to normal values.

Metallic Mercury and its Inorganic Salts

Inhalation represents the main route of uptake of metallic mercury. The gastrointestinal absorption of metallic mercury is negligible. Inorganic mercury salts can be absorbed through the lungs (inhalation of inorganic mercury aerosol) as well as the gastrointestinal tract. The cutaneous absorption of metallic mercury and its inorganic salts is possible.

The biological half-life of mercury is of the order of two months in the kidney but is much longer in the central nervous system.

Inorganic mercury is excreted mainly with the faeces and urine. Small quantities are excreted through salivary, lacrimal and sweat glands. Mercury can also be detected in expired air during the few hours following exposure to mercury vapour. Under chronic exposure conditions there is, at least on a group basis, a relationship between the intensity of recent exposure to mercury vapour and the concentration of mercury in blood or urine. The early investigations, during which static samples were used for monitoring general workroom air, showed that an average mercury concentration in air (Hg–air) of 100 μg/m3 corresponds to average mercury levels in blood (Hg–B) and in urine (Hg–U) of 6 μg Hg/100 ml and 200 to 260 μg/l, respectively. More recent observations, particularly those assessing the contribution of the external micro-environment close to the respiratory tract of the workers, indicate that the relationship between mercury in air (μg/m3), urine (μg/g creatinine) and blood (μg/100 ml) is approximately 1/1.2/0.045. Several epidemiological studies on workers exposed to mercury vapour have demonstrated that for long-term exposure, the critical effect levels of Hg–U and Hg–B are approximately 50 μg/g creatinine and 2 μg/100 ml, respectively.

However, some recent studies seem to indicate that signs of adverse effects on the central nervous system or the kidney can already be observed at a urinary mercury level below 50 μg/g creatinine.

Normal urinary and blood levels are generally below 5 μg/g creatinine and 1 μg/100 ml, respectively. These values can be influenced by fish consumption and the number of mercury amalgam fillings in the teeth.
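The approximate air/urine/blood relationship of 1/1.2/0.045 quoted above can be turned into a small worked calculation. The following Python lines are only a sketch: the function and constant names are hypothetical, the ratio is treated as a simple proportionality, and, as the text stresses, such a relationship holds at best on a group basis under chronic exposure, not for an individual worker.

```python
# Approximate group-level relationship quoted in the text:
# air (ug/m3) : urine (ug/g creatinine) : blood (ug/100 ml) = 1 : 1.2 : 0.045
# Treating it as a strict proportionality is an assumption made for illustration.
URINE_PER_AIR = 1.2
BLOOD_PER_AIR = 0.045


def estimate_mercury_levels(air_ug_m3: float) -> tuple:
    """Return (Hg-U in ug/g creatinine, Hg-B in ug/100 ml) for a mean air level."""
    if air_ug_m3 < 0:
        raise ValueError("air concentration cannot be negative")
    return air_ug_m3 * URINE_PER_AIR, air_ug_m3 * BLOOD_PER_AIR


def air_level_for_urine(urine_ug_g_creatinine: float) -> float:
    """Invert the ratio: mean air level (ug/m3) matching a given Hg-U value."""
    if urine_ug_g_creatinine < 0:
        raise ValueError("urinary concentration cannot be negative")
    return urine_ug_g_creatinine / URINE_PER_AIR


if __name__ == "__main__":
    hg_u, hg_b = estimate_mercury_levels(40.0)
    print(f"Hg-U ~ {hg_u:.1f} ug/g creatinine, Hg-B ~ {hg_b:.2f} ug/100 ml")
    print(f"Air level matching Hg-U of 50: {air_level_for_urine(50.0):.1f} ug/m3")
```

Under these assumptions, the critical effect level of 50 μg/g creatinine for Hg–U would correspond to a mean air concentration of roughly 42 μg/m3.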

Organic Mercury Compounds

The organic mercury compounds are easily absorbed by all routes. In blood, they are found mainly in red blood cells (around 90%). A distinction must be made, however, between the short chain alkyl compounds (mainly methylmercury), which are very stable and are resistant to biotransformation, and the aryl or alkoxyalkyl derivatives, which liberate inorganic mercury in vivo. For the latter compounds, the concentration of mercury in blood, as well as in urine, is probably indicative of the exposure intensity.

Under steady-state conditions, mercury in whole blood and in hair correlates with methylmercury body burden and with the risk of signs of methylmercury poisoning. In persons chronically exposed to alkyl mercury, the earliest signs of intoxication (paresthesia, sensory disturbances) may occur when the level of mercury in blood and in hair exceeds 20 μg/100 ml and 50 μg/g, respectively.

Nickel

Nickel is not a cumulative toxin, and almost all of the absorbed amount is excreted, mainly via the urine, with a biological half-life of 17 to 39 hours. In non-occupationally exposed subjects, the urine and plasma concentrations of nickel are usually below 2 μg/g creatinine and 0.05 μg/100 ml, respectively.

The concentrations of nickel in plasma and in urine are good indicators of recent exposure to metallic nickel and its soluble compounds (e.g., during nickel electroplating or nickel battery production). Values within normal ranges usually indicate nonsignificant exposure and increased values are indicative of overexposure.

For workers exposed to soluble nickel compounds, a biological limit value of 30 μg/g creatinine (end of shift) has been tentatively proposed for nickel in urine.

In workers exposed to slightly soluble or insoluble nickel compounds, increased levels in body fluids generally indicate significant absorption or progressive release from the amount stored in the lungs; however, significant amounts of nickel may be deposited in the respiratory tract (nasal cavities, lungs) without any significant elevation of its plasma or urine concentration. Therefore, “normal” values have to be interpreted cautiously and do not necessarily indicate absence of health risk.

Selenium

Selenium is an essential trace element. Soluble selenium compounds seem to be easily absorbed through the lungs and the gastrointestinal tract. Selenium is mainly excreted in urine, but when exposure is very high it can also be excreted in exhaled air as dimethylselenide vapour. Normal selenium concentrations in serum and urine depend on daily intake, which may vary considerably in different parts of the world, but they are usually below 15 μg/100 ml and 25 μg/g creatinine, respectively. The concentration of selenium in urine is mainly a reflection of recent exposure. The relationship between the intensity of exposure and selenium concentration in urine has not yet been established.

It seems that the concentration in plasma (or serum) and urine mainly reflects short-term exposure, whereas the selenium content of erythrocytes reflects more long-term exposure.

Measuring selenium in blood or urine gives some information on selenium status. Currently it is used more often to detect a deficiency than an overexposure. Since the available data concerning the health risk of long-term exposure to selenium and the relationship between potential health risk and levels in biological media are too limited, no biological threshold value can be proposed.

Vanadium

In industry, vanadium is absorbed mainly via the pulmonary route. Oral absorption seems low (less than 1%). Vanadium is excreted in urine with a biological half-life of about 20 to 40 hours, and to a minor degree in faeces. Urinary vanadium seems to be a good indicator of recent exposure, but the relationship between uptake and vanadium levels in urine has not yet been sufficiently established. It has been suggested that the difference between post-shift and pre-shift urinary concentrations of vanadium permits the assessment of exposure during the workday, whereas urinary vanadium two days after cessation of exposure (Monday morning) would reflect accumulation of the metal in the body. In non-occupationally exposed persons, vanadium concentration in urine is usually below 1 μg/g creatinine. A tentative biological limit value of 50 μg/g creatinine (end of shift) has been proposed for vanadium in urine.
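The interpretation scheme suggested above for urinary vanadium (cross-shift difference for exposure during the workday, Monday-morning value for accumulation, end-of-shift value against the tentative limit) can be sketched as follows. The class, field and function names are hypothetical, and the code only restates the figures given in the text; it is not a validated decision rule.

```python
from dataclasses import dataclass
from typing import Optional

# Figures taken from the text (ug vanadium per g creatinine).
TENTATIVE_LIMIT_END_OF_SHIFT = 50.0   # tentative biological limit value
USUAL_UPPER_NON_EXPOSED = 1.0         # usual upper level in non-exposed persons


@dataclass
class VanadiumUrineSamples:
    """Urinary vanadium results for one worker, in ug/g creatinine."""
    pre_shift: float
    post_shift: float
    monday_morning: Optional[float] = None  # two days after last exposure


def summarize(samples: VanadiumUrineSamples) -> dict:
    """Restate the suggested reading of the three sampling times."""
    return {
        # Suggested indicator of exposure during the workday.
        "cross_shift_increase": samples.post_shift - samples.pre_shift,
        # Comparison with the tentative end-of-shift limit value.
        "exceeds_tentative_limit": samples.post_shift > TENTATIVE_LIMIT_END_OF_SHIFT,
        # Suggested indicator of accumulation (None if not sampled).
        "monday_morning_level": samples.monday_morning,
    }


if __name__ == "__main__":
    example = VanadiumUrineSamples(pre_shift=4.0, post_shift=22.0, monday_morning=3.0)
    print(summarize(example))
```

The example values are invented for illustration. Because the relationship between uptake and urinary vanadium is not yet sufficiently established, the output should be read as a descriptive summary rather than a quantitative estimate of uptake.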

Organic Solvents

Introduction

Organic solvents are volatile and generally soluble in body fat (lipophilic), although some of them, e.g., methanol and acetone, are water soluble (hydrophilic) as well. They have been extensively employed not only in industry but also in consumer products, such as paints, inks, thinners, degreasers, dry-cleaning agents, spot removers, repellents, and so on. Biological monitoring can be applied to detect health effects, for example, effects on the liver and the kidney, for the purpose of health surveillance of workers who are occupationally exposed to organic solvents. It is best used, however, for “exposure” monitoring, because this approach is sensitive enough to give warnings well before any health effects may occur and thus protects workers from the toxicity of these solvents. Screening workers for high sensitivity to solvent toxicity may also contribute to the protection of their health.

Summary of Toxicokinetics

Organic solvents are generally volatile under standard conditions, although the volatility varies from solvent to solvent. Thus, the leading route of exposure in industrial settings is through inhalation. The rate of absorption through the alveolar wall of the lungs is much higher than that through the digestive tract wall, and a lung absorption rate of about 50% is considered typical for many common solvents such as toluene. Some solvents, for example, carbon disulphide and N,N-dimethylformamide in the liquid state, can penetrate intact human skin in amounts large enough to be toxic.

When these solvents are absorbed, a portion is exhaled in the breath without any biotransformation, but the greater part is distributed in organs and tissues rich in lipids as a result of their lipophilicity. Biotransformation takes place primarily in the liver (and also in other organs to a minor extent), and the solvent molecule becomes more hydrophilic, typically by a process of oxidation followed by conjugation, to be excreted via the kidney into the urine as metabolite(s). A small portion may be eliminated unchanged in the urine.

Thus, three biological materials, urine, blood and exhaled breath, are available for exposure monitoring for solvents from a practical viewpoint. Another important factor in selecting biological materials for exposure monitoring is the speed of disappearance of the absorbed substance, for which the biological half-life, or the time needed for a substance to diminish to one-half its original concentration, is a quantitative parameter. For example, solvents will disappear from exhaled breath much more rapidly than corresponding metabolites from urine, meaning they have a much shorter half-life. Among urinary metabolites, the biological half-life varies depending on how quickly the parent compound is metabolised, so that sampling time in relation to exposure is often of critical importance (see below). A third consideration in choosing a biological material is the specificity of the target chemical to be analysed in relation to the exposure. For example, hippuric acid is a long-used marker of exposure to toluene, but it is formed naturally by the body and can also be derived from non-occupational sources such as some food additives; it is therefore no longer considered a reliable marker when toluene exposure is low (less than 50 cm3/m3). Generally speaking, urinary metabolites have been most widely used as indicators of exposure to various organic solvents. Solvent in blood is analysed as a qualitative measure of exposure because it usually remains in the blood a shorter time and is more reflective of acute exposure, whereas solvent in exhaled breath is difficult to use for estimation of average exposure because the concentration in breath declines so rapidly after cessation of exposure. Solvent in urine is a promising candidate as a measure of exposure, but it needs further validation.
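The definition of biological half-life given above implies that, after exposure ends, the fraction of a substance remaining is one-half raised to the number of half-lives elapsed. The short Python sketch below illustrates why a short half-life makes sampling time so critical. It assumes simple single-phase (first-order) elimination, which is a simplification, and the two half-lives used are hypothetical values chosen only for illustration, not data for any particular solvent or metabolite.

```python
def fraction_remaining(elapsed_hours: float, half_life_hours: float) -> float:
    """Fraction of the initial concentration left after `elapsed_hours`,
    assuming single-phase first-order elimination (an assumption)."""
    if half_life_hours <= 0:
        raise ValueError("half-life must be positive")
    if elapsed_hours < 0:
        raise ValueError("elapsed time cannot be negative")
    return 0.5 ** (elapsed_hours / half_life_hours)


if __name__ == "__main__":
    # Hypothetical half-lives: a rapidly cleared marker and a slowly cleared one.
    hours_since_exposure = 16.0  # e.g. sampling the morning after a workshift
    for label, half_life in [("short half-life (1 h)", 1.0),
                             ("long half-life (8 h)", 8.0)]:
        left = fraction_remaining(hours_since_exposure, half_life)
        print(f"{label}: {left:.4%} of the end-of-exposure level remains")
```

With these illustrative values, a marker with an 8-hour half-life still shows one quarter of its end-of-exposure level after 16 hours, whereas a marker with a 1-hour half-life has practically disappeared, so it would have to be sampled at or very near the end of exposure.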

Biological Exposure Tests for Organic Solvents

In applying biological monitoring for solvent exposure, sampling time is important, as indicated above. Table 1 shows recommended sampling times for common solvents in the monitoring of everyday occupational exposure. When the solvent itself is to be analysed, attention should be paid to preventing possible loss (e.g., evaporation into room air) as well as contamination (e.g., dissolving from room air into the sample) during the sample handling process. If the samples need to be transported to a distant laboratory or to be stored before analysis, care should be exercised to prevent loss. Freezing is recommended for metabolites, whereas refrigeration (but not freezing) in an airtight container without an air space (or more preferably, in a headspace vial) is recommended for analysis of the solvent itself. In chemical analysis, quality control is essential for reliable results (for details, see the article “Quality assurance” in this chapter). In reporting the results, ethics should be respected (see chapter Ethical Issues elsewhere in the Encyclopaedia).

Table 1. Some examples of target chemicals for biological monitoring and sampling time

|

Solvent |

Target chemical |

Urine/blood |

Sampling time1 |

|

Carbon disulphide |

2-Thiothiazolidine-4-carboxylicacid |

Urine |

Th F |

|

N,N-Dimethyl-formamide |

N-Methylformamide |

Urine |

M Tu W Th F |

|

2-Ethoxyethanol and its acetate |

Ethoxyacetic acid |

Urine |

Th F (end of last workshift) |

|

Hexane |

2,4-Hexanedione Hexane |

Urine Blood |

M Tu W Th F confirmation of exposure |

|

Methanol |

Methanol |

Urine |

M Tu W Th F |

|

Styrene |

Mandelic acid Phenylglyoxylic acid Styrene |

Urine Urine Blood |

Th F Th F confirmation of exposure |

|

Toluene |

Hippuric acid o-Cresol Toluene Toluene |

Urine Urine Blood Urine |

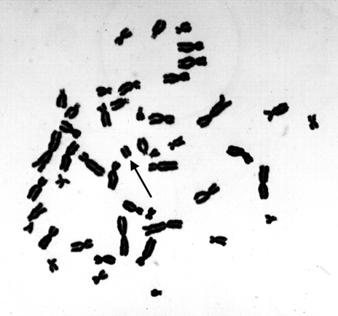

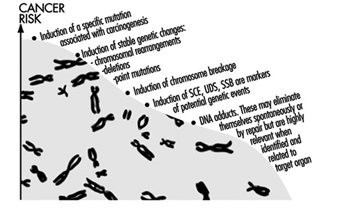

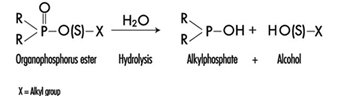

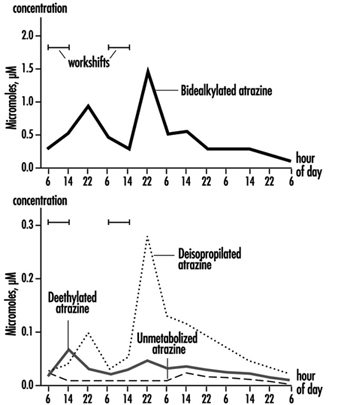

Tu W Th F Tu W Th F confirmation of exposure Tu W Th F |