33. Toxicology

Chapter Editor: Ellen K. Silbergeld

Table of Contents

Tables and Figures

Introduction

Ellen K. Silbergeld, Chapter Editor

General Principles of Toxicology

Definitions and Concepts

Bo Holmberg, Johan Hogberg and Gunnar Johanson

Toxicokinetics

Dušan Djuríc

Target Organ And Critical Effects

Marek Jakubowski

Effects Of Age, Sex And Other Factors

Spomenka Telišman

Genetic Determinants Of Toxic Response

Daniel W. Nebert and Ross A. McKinnon

Mechanisms of Toxicity

Introduction And Concepts

Philip G. Watanabe

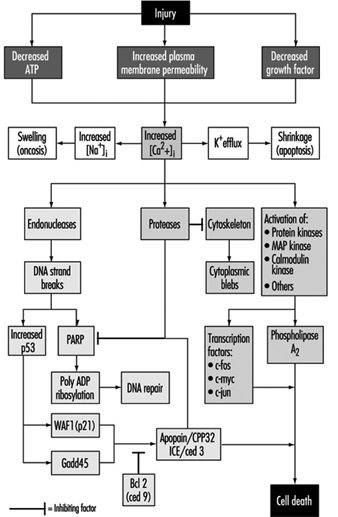

Cellular Injury And Cellular Death

Benjamin F. Trump and Irene K. Berezesky

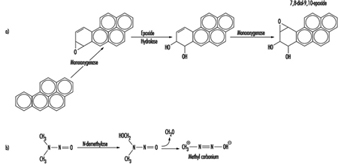

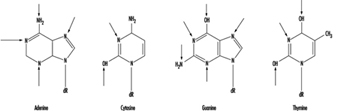

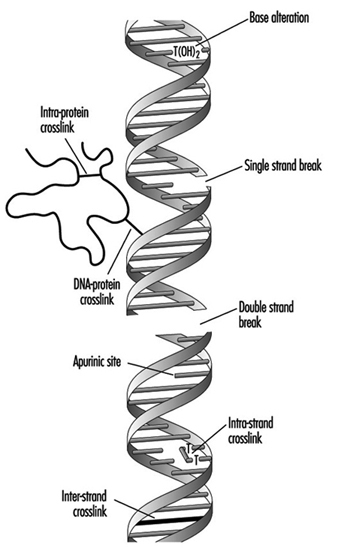

Genetic Toxicology

R. Rita Misra and Michael P. Waalkes

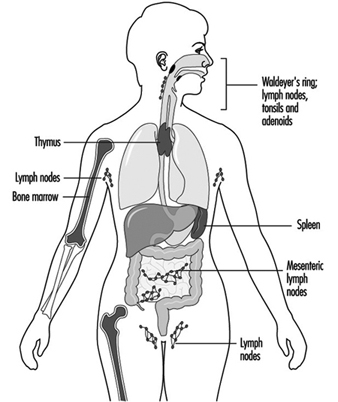

Immunotoxicology

Joseph G. Vos and Henk van Loveren

Target Organ Toxicology

Ellen K. Silbergeld

Toxicology Test Methods

Biomarkers

Philippe Grandjean

Genetic Toxicity Assessment

David M. DeMarini and James Huff

In Vitro Toxicity Testing

Joanne Zurlo

Structure Activity Relationships

Ellen K. Silbergeld

Regulatory Toxicology

Toxicology In Health And Safety Regulation

Ellen K. Silbergeld

Principles Of Hazard Identification - The Japanese Approach

Masayuki Ikeda

The United States Approach to Risk Assessment Of Reproductive Toxicants and Neurotoxic Agents

Ellen K. Silbergeld

Approaches To Hazard Identification - IARC

Harri Vainio and Julian Wilbourn

Appendix - Overall Evaluations of Carcinogenicity to Humans: IARC Monographs Volumes 1-69 (836)

Carcinogen Risk Assessment: Other Approaches

Cees A. van der Heijden

Tables


- Examples of critical organs & critical effects

- Basic effects of possible multiple interactions of metals

- Haemoglobin adducts in workers exposed to aniline & acetanilide

- Hereditary, cancer-prone disorders & defects in DNA repair

- Examples of chemicals that exhibit genotoxicity in human cells

- Classification of tests for immune markers

- Examples of biomarkers of exposure

- Pros & cons of methods for identifying human cancer risks

- Comparison of in vitro systems for hepatotoxicity studies

- Comparison of SAR & test data: OECD/NTP analyses

- Regulation of chemical substances by laws, Japan

- Test items under the Chemical Substance Control Law, Japan

- Chemical substances & the Chemical Substances Control Law

- Selected major neurotoxicity incidents

- Examples of specialized tests to measure neurotoxicity

- Endpoints in reproductive toxicology

- Comparison of low-dose extrapolations procedures

- Frequently cited models in carcinogen risk characterization


Definitions and Concepts

Exposure, Dose and Response

Toxicity is the intrinsic capacity of a chemical agent to affect an organism adversely.

Xenobiotics is a term for “foreign substances”, that is, foreign to the organism. Its opposite is endogenous compounds. Xenobiotics include drugs, industrial chemicals, naturally occurring poisons and environmental pollutants.

Hazard is the potential for the toxicity to be realized in a specific setting or situation.

Risk is the probability that a specific adverse effect will occur. It is often expressed as the percentage of cases in a given population and during a specific time period. A risk estimate can be based upon actual cases or a projection of future cases, based upon extrapolations.

Toxicity rating and toxicity classification can be used for regulatory purposes. Toxicity rating is an arbitrary grading of doses or exposure levels causing toxic effects. The grading can be “supertoxic,” “highly toxic,” “moderately toxic” and so on. The most common ratings concern acute toxicity. Toxicity classification concerns the grouping of chemicals into general categories according to their most important toxic effect. Such categories can include allergenic, neurotoxic, carcinogenic and so on. This classification can be of administrative value as a warning and as information.

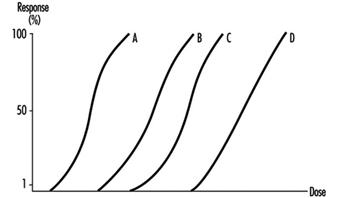

The dose-effect relationship is the relationship between dose and effect on the individual level. An increase in dose may increase the intensity of an effect, or a more severe effect may result. A dose-effect curve may be obtained at the level of the whole organism, the cell or the target molecule. Some toxic effects, such as death or cancer, are not graded but are “all or none” effects.

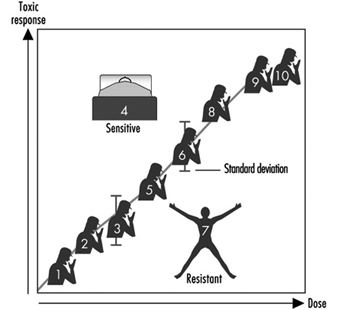

The dose-response relationship is the relationship between dose and the percentage of individuals showing a specific effect. With increasing dose a greater number of individuals in the exposed population will usually be affected.

It is essential to toxicology to establish dose-effect and dose-response relationships. In medical (epidemiological) studies a criterion often used for accepting a causal relationship between an agent and a disease is that effect or response is proportional to dose.

Several dose-response curves can be drawn for a chemical—one for each type of effect. The dose-response curve for most toxic effects (when studied in large populations) has a sigmoid shape. There is usually a low-dose range where there is no response detected; as dose increases, the response follows an ascending curve that will usually reach a plateau at a 100% response. The dose-response curve reflects the variations among individuals in a population. The slope of the curve varies from chemical to chemical and between different types of effects. For some chemicals with specific effects (carcinogens, initiators, mutagens) the dose-response curve might be linear from dose zero within a certain dose range. This means that no threshold exists and that even small doses represent a risk. Above that dose range, the risk may increase at greater than a linear rate.
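The sigmoid shape described above can be sketched numerically. The following is only an illustration, assuming a log-logistic (Hill-type) curve, which is one common mathematical choice and not a form prescribed by the text. The function name and the parameters `ed50` and `slope` are hypothetical. Note that this curve approaches zero at low doses without having a true threshold.

```python
def fraction_responding(dose, ed50, slope):
    """Fraction of a population showing the effect at a given dose.

    Assumed log-logistic form: response = 1 / (1 + (ed50 / dose) ** slope).
    ed50  -- dose at which half of the population responds (hypothetical value)
    slope -- steepness of the curve; reflects variation among individuals
    """
    if dose <= 0:
        return 0.0
    return 1.0 / (1.0 + (ed50 / dose) ** slope)


# Print the response over a range of doses (same units as ed50).
for dose in (1, 5, 10, 20, 100):
    print(dose, round(fraction_responding(dose, ed50=10.0, slope=3.0), 3))
```

A larger `slope` gives a steeper curve, which corresponds to a population whose individuals differ little in sensitivity.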

Variation in exposure during the day and the total length of exposure during one’s lifetime may be as important for the outcome (response) as mean or average or even integrated dose level. High peak exposures may be more harmful than a more even exposure level. This is the case for some organic solvents. On the other hand, for some carcinogens, it has been experimentally shown that the fractionation of a single dose into several exposures with the same total dose may be more effective in producing tumours.

A dose is often expressed as the amount of a xenobiotic entering an organism (in units such as mg/kg body weight). The dose may be expressed in different (more or less informative) ways: exposure dose, which is the air concentration of pollutant inhaled during a certain time period (in work hygiene usually eight hours), or the retained or absorbed dose (in industrial hygiene also called the body burden), which is the amount present in the body at a certain time during or after exposure. The tissue dose is the amount of substance in a specific tissue and the target dose is the amount of substance (usually a metabolite) bound to the critical molecule. The target dose can be expressed as mg chemical bound per mg of a specific macromolecule in the tissue. To apply this concept, information on the mechanism of toxic action on the molecular level is needed. The target dose is more exactly associated with the toxic effect. The exposure dose or body burden may be more easily available, but these are less precisely related to the effect.

In the dose concept a time aspect is often included, even if it is not always expressed. The theoretical dose according to Haber’s law is D = ct, where D is dose, c is concentration of the xenobiotic in the air and t the duration of exposure to the chemical. If this concept is used at the target organ or molecular level, the amount per mg tissue or molecule over a certain time may be used. The time aspect is usually more important for understanding repeated exposures and chronic effects than for single exposures and acute effects.
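As a small worked example of Haber's law, the sketch below computes D = ct for two exposure patterns. The function name, the units and the numerical values are illustrative assumptions, not data from the text.

```python
def haber_dose(concentration_mg_m3, duration_h):
    """Theoretical dose D = c * t (here in mg*h/m3)."""
    return concentration_mg_m3 * duration_h


# Two hypothetical exposures with the same theoretical dose:
full_shift = haber_dose(concentration_mg_m3=50.0, duration_h=8.0)
half_shift = haber_dose(concentration_mg_m3=100.0, duration_h=4.0)
print(full_shift, half_shift)
```

Both exposures give the same D, although, as noted earlier in this article, a high peak exposure may be more harmful than a more even exposure level for some substances.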

Additive effects occur as a result of exposure to a combination of chemicals, where the individual toxicities are simply added to each other (1+1 = 2). When chemicals act via the same mechanism, additivity of their effects is assumed, although this is not always the case in reality. Interaction between chemicals may result in an inhibition (antagonism), with a smaller effect than that expected from addition of the effects of the individual chemicals (1+1 < 2). Alternatively, a combination of chemicals may produce a more pronounced effect than would be expected by addition (increased response among individuals or an increase in frequency of response in a population); this is called synergism (1+1 > 2).
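The comparison between an observed combined effect and the sum of the individual effects can be written as a short sketch. The function name and the `tolerance` parameter (an allowance for measurement error) are hypothetical, and effects are assumed to be measured on a common numerical scale.

```python
def classify_interaction(effect_a, effect_b, combined, tolerance=0.05):
    """Compare a combined effect with the sum expected from simple addition."""
    expected = effect_a + effect_b
    # Within the tolerance band, treat the combined effect as additive.
    if abs(combined - expected) <= tolerance * expected:
        return "additive"
    return "synergism" if combined > expected else "antagonism"


print(classify_interaction(1.0, 1.0, 2.0))
print(classify_interaction(1.0, 1.0, 3.0))
print(classify_interaction(1.0, 1.0, 1.2))
```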

Latency time is the time between first exposure and the appearance of a detectable effect or response. The term is often used for carcinogenic effects, where tumours may appear a long time after the start of exposure and sometimes long after the cessation of exposure.

A dose threshold is a dose level below which no observable effect occurs. Thresholds are thought to exist for certain effects, like acute toxic effects; but not for others, like carcinogenic effects (by DNA-adduct-forming initiators). The mere absence of a response in a given population should not, however, be taken as evidence for the existence of a threshold. Absence of response could be due to simple statistical phenomena: an adverse effect occurring at low frequency may not be detectable in a small population.
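The statistical point can be illustrated with a simple calculation. The sketch assumes that each individual independently has the same probability of showing the effect; the function name and the numbers are illustrative only.

```python
def probability_of_no_cases(effect_frequency, population_size):
    """Chance of observing zero affected individuals in a study group.

    Assumes independent individuals with the same probability of response.
    """
    return (1.0 - effect_frequency) ** population_size


# An effect occurring in 1 of 1,000 exposed individuals:
for n in (100, 1000, 3000):
    print(n, round(probability_of_no_cases(0.001, n), 3))
```

Under these assumptions a study of 100 individuals would most often show no cases at all, even though the effect is real, which is why absence of a response in a small group is weak evidence for a threshold.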

LD50 (lethal dose) is the dose causing 50% lethality in an animal population. The LD50 is often given in older literature as a measure of acute toxicity of chemicals. The higher the LD50, the lower the acute toxicity. A highly toxic chemical (with a low LD50) is said to be potent. There is no necessary correlation between acute and chronic toxicity. ED50 (effective dose) is the dose causing a specific effect other than lethality in 50% of the animals.

NOEL (NOAEL) means the no observed (adverse) effect level, or the highest dose that does not cause a toxic effect. To establish a NOEL requires multiple doses, a large population and additional information to make sure that absence of a response is not merely a statistical phenomenon. LOEL is the lowest observed effective dose on a dose-response curve, or the lowest dose that causes an effect.

A safety factor is a formal, arbitrary number with which one divides the NOEL or LOEL derived from animal experiments to obtain a tentative permissible dose for humans. This is often used in the area of food toxicology, but may be used also in occupational toxicology. A safety factor may also be used for extrapolation of data from small populations to larger populations. Safety factors range from 10⁰ to 10³. A safety factor of two may typically be sufficient to protect from a less serious effect (such as irritation) and a factor as large as 1,000 may be used for very serious effects (such as cancer). The term safety factor could be better replaced by the term protection factor or, even, uncertainty factor. The use of the latter term reflects scientific uncertainties, such as whether exact dose-response data can be translated from animals to humans for the particular chemical, toxic effect or exposure situation.
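The arithmetic behind a safety factor is a single division, sketched below. The function name and the NOEL value are hypothetical and chosen only to show the calculation.

```python
def tentative_permissible_dose(noel_mg_per_kg, safety_factor):
    """Divide an animal NOEL (or LOEL) by a safety factor."""
    if safety_factor < 1:
        raise ValueError("a safety factor is not expected to be below 1")
    return noel_mg_per_kg / safety_factor


# Hypothetical NOEL of 50 mg/kg body weight from an animal experiment:
for factor in (2, 100, 1000):
    print(factor, tentative_permissible_dose(50.0, factor))
```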

Extrapolations are theoretical qualitative or quantitative estimates of toxicity (risk extrapolations) derived from translation of data from one species to another or from one set of dose-response data (typically in the high dose range) to regions of dose-response where no data exist. Extrapolations usually must be made to predict toxic responses outside the observation range. Mathematical modelling is used for extrapolations based upon an understanding of the behaviour of the chemical in the organism (toxicokinetic modelling) or based upon the understanding of statistical probabilities that specific biological events will occur (biologically or mechanistically based models). Some national agencies have developed sophisticated extrapolation models as a formalized method to predict risks for regulatory purposes. (See discussion of risk assessment later in the chapter.)

Systemic effects are toxic effects in tissues distant from the route of absorption.

Target organ is the primary or most sensitive organ affected after exposure. The same chemical may affect different target organs depending on the route of exposure, dose, dose rate, sex and species. Interaction between chemicals, or between chemicals and other factors, may affect different target organs as well.

Acute effects occur after limited exposure and shortly (hours, days) after exposure and may be reversible or irreversible.

Chronic effects occur after prolonged exposure (months, years, decades) and/or persist after exposure has ceased.

Acute exposure is an exposure of short duration, while chronic exposure is long-term (sometimes life-long) exposure.

Tolerance to a chemical may occur when repeat exposures result in a lower response than what would have been expected without pretreatment.

Uptake and Disposition

Transport processes

Diffusion. In order to enter the organism and reach a site where damage is produced, a foreign substance has to pass several barriers, including cells and their membranes. Most toxic substances pass through membranes passively by diffusion. This may occur for small water-soluble molecules by passage through aqueous channels or, for fat-soluble ones, by dissolution into and diffusion through the lipid part of the membrane. Ethanol, a small molecule that is both water and fat soluble, diffuses rapidly through cell membranes.

Diffusion of weak acids and bases. Weak acids and bases may readily pass membranes in their non-ionized, fat-soluble form while ionized forms are too polar to pass. The degree of ionization of these substances depends on pH. If a pH gradient exists across a membrane they will therefore accumulate on one side. The urinary excretion of weak acids and bases is highly dependent on urinary pH. Foetal or embryonic pH is somewhat higher than maternal pH, causing a slight accumulation of weak acids in the foetus or embryo.
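The pH dependence can be made concrete with the Henderson-Hasselbalch relationship, which the text does not name but which describes the degree of ionization of weak acids and bases. The sketch below assumes that only the non-ionized form crosses the membrane and that equilibrium is reached; the function names and the example pKa and pH values are hypothetical.

```python
def total_to_nonionized(ph, pka, kind):
    """Ratio of total (ionized + non-ionized) to non-ionized concentration."""
    if kind == "acid":
        return 1.0 + 10.0 ** (ph - pka)   # acids ionize more at high pH
    if kind == "base":
        return 1.0 + 10.0 ** (pka - ph)   # bases ionize more at low pH
    raise ValueError("kind must be 'acid' or 'base'")


def accumulation_ratio(ph_side_a, ph_side_b, pka, kind):
    """Equilibrium total concentration on side B relative to side A.

    Assumes the non-ionized concentration is equal on both sides.
    """
    return (total_to_nonionized(ph_side_b, pka, kind)
            / total_to_nonionized(ph_side_a, pka, kind))


# Hypothetical weak acid (pKa 4.0): blood at pH 7.4 versus acidic or alkaline urine.
print(accumulation_ratio(7.4, 5.5, 4.0, "acid"))
print(accumulation_ratio(7.4, 8.0, 4.0, "acid"))
```

A ratio above one means that the substance accumulates on side B. Under these assumptions a weak acid accumulates on the side with the higher pH and a weak base on the side with the lower pH.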

Facilitated diffusion. The passage of a substance may be facilitated by carriers in the membrane. Facilitated diffusion is similar to enzyme processes in that it is protein mediated, highly selective, and saturable. Other substances may inhibit the facilitated transport of xenobiotics.

Active transport. Some substances are actively transported across cell membranes. This transport is mediated by carrier proteins in a process analogous to that of enzymes. Active transport is similar to facilitated diffusion, but it may occur against a concentration gradient. It requires energy input and a metabolic inhibitor can block the process. Most environmental pollutants are not transported actively. One exception is the active tubular secretion and reabsorption of acid metabolites in the kidneys.

Phagocytosis is a process where specialized cells such as macrophages engulf particles for subsequent digestion. This transport process is important, for example, for the removal of particles in the alveoli.

Bulk flow. Substances are also transported in the body along with the movement of air in the respiratory system during breathing, and the movements of blood, lymph or urine.

Filtration. Due to hydrostatic or osmotic pressure water flows in bulk through pores in the endothelium. Any solute that is small enough will be filtered together with the water. Filtration occurs to some extent in the capillary bed in all tissues but is particularly important in the formation of primary urine in the kidney glomeruli.

Absorption

Absorption is the uptake of a substance from the environment into the organism. The term usually includes not only the entrance into the barrier tissue but also the further transport into circulating blood.

Pulmonary absorption. The lungs are the primary route of deposition and absorption of small airborne particles, gases, vapours and aerosols. For highly water-soluble gases and vapours a significant part of the uptake occurs in the nose and the respiratory tree, but for less soluble substances it primarily takes place in the lung alveoli. The alveoli have a very large surface area (about 100 m² in humans). In addition, the diffusion barrier is extremely small, with only two thin cell layers and a distance in the order of micrometres from alveolar air to systemic blood circulation. This makes the lungs very efficient not only in the exchange of oxygen and carbon dioxide but also of other gases and vapours. In general, the diffusion across the alveolar wall is so rapid that it does not limit the uptake. The absorption rate is instead dependent on flow (pulmonary ventilation, cardiac output) and solubility (blood:air partition coefficient). Another important factor is metabolic elimination. The relative importance of these factors for pulmonary absorption varies greatly for different substances. Physical activity results in increased pulmonary ventilation and cardiac output, and decreased liver blood flow (and, hence, biotransformation rate). For many inhaled substances this leads to a marked increase in pulmonary absorption.

Percutaneous absorption. The skin is a very efficient barrier. Apart from its thermoregulatory role, it is designed to protect the organism from micro-organisms, ultraviolet radiation and other deleterious agents, and also against excessive water loss. The diffusion distance in the dermis is on the order of tenths of millimetres. In addition, the keratin layer has a very high resistance to diffusion for most substances. Nevertheless, significant dermal absorption resulting in toxicity may occur for some substances, for example highly toxic, fat-soluble substances such as organophosphorus insecticides and organic solvents. Significant absorption is likely to occur after exposure to liquid substances. Percutaneous absorption of vapour may be important for solvents with very low vapour pressure and high affinity to water and skin.

Gastrointestinal absorption occurs after accidental or intentional ingestion. Larger particles originally inhaled and deposited in the respiratory tract may be swallowed after mucociliary transport to the pharynx. Practically all soluble substances are efficiently absorbed in the gastrointestinal tract. The low pH of the gut may facilitate absorption, for instance, of metals.

Other routes. In toxicity testing and other experiments, special routes of administration are often used for convenience, although these are rare and usually not relevant in the occupational setting. These routes include intravenous (iv), subcutaneous (sc), intraperitoneal (ip) and intramuscular (im) injections. In general, substances are absorbed at a higher rate and more completely by these routes, especially after iv injection. This leads to short-lasting but high concentration peaks that may increase the toxicity of a dose.

Distribution

The distribution of a substance within the organism is a dynamic process which depends on uptake and elimination rates, as well as the blood flow to the different tissues and their affinities for the substance. Water-soluble, small, uncharged molecules, univalent cations, and most anions diffuse easily and will eventually reach a relatively even distribution in the body.

Volume of distribution is the amount of a substance in the body at a given time, divided by the concentration in blood, plasma or serum at that time. The value has no meaning as a physical volume, as many substances are not uniformly distributed in the organism. A volume of distribution of less than 1 l/kg body weight indicates preferential distribution in the blood (or serum or plasma), whereas a value above 1 l/kg indicates a preference for peripheral tissues, such as adipose tissue for fat-soluble substances.
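The definition translates directly into a calculation, sketched below with hypothetical function names and illustrative numbers.

```python
def apparent_volume_of_distribution(amount_mg, concentration_mg_per_l):
    """Amount in the body divided by the blood (plasma, serum) concentration, in litres."""
    if concentration_mg_per_l <= 0:
        raise ValueError("concentration must be positive")
    return amount_mg / concentration_mg_per_l


def preferential_distribution(vd_l, body_weight_kg):
    """Interpret the volume of distribution per kg body weight."""
    vd_per_kg = vd_l / body_weight_kg
    if vd_per_kg < 1.0:
        return "blood (or serum or plasma)"
    if vd_per_kg > 1.0:
        return "peripheral tissues"
    return "no clear preference"


# Hypothetical case: 500 mg in the body, 2 mg/l in plasma, 70 kg body weight.
vd = apparent_volume_of_distribution(500.0, 2.0)
print(vd, preferential_distribution(vd, 70.0))
```

The resulting volume can far exceed the volume of the body, which is why it has no meaning as a physical volume.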

Accumulation is the build-up of a substance in a tissue or organ to higher levels than in blood or plasma. It may also refer to a gradual build-up over time in the organism. Many xenobiotics are highly fat soluble and tend to accumulate in adipose tissue, while others have a special affinity for bone. For example, calcium in bone may be exchanged for cations of lead, strontium, barium and radium, and hydroxyl groups in bone may be exchanged for fluoride.

Barriers. The blood vessels in the brain, testes and placenta have special anatomical features that inhibit passage of large molecules like proteins. These features, often referred to as blood-brain, blood-testes, and blood-placenta barriers, may give the false impression that they prevent passage of any substance. These barriers are of little or no importance for xenobiotics that can diffuse through cell membranes.

Blood binding. Substances may be bound to red blood cells or plasma components, or occur unbound in blood. Carbon monoxide, arsenic, organic mercury and hexavalent chromium have a high affinity for red blood cells, while inorganic mercury and trivalent chromium show a preference for plasma proteins. A number of other substances also bind to plasma proteins. Only the unbound fraction is available for filtration or diffusion into eliminating organs. Blood binding may therefore increase the residence time in the organism but decrease uptake by target organs.

Elimination

Elimination is the disappearance of a substance from the body. Elimination may involve excretion from the body or transformation to other substances not captured by a specific method of measurement. The rate of disappearance may be expressed by the elimination rate constant, biological half-time or clearance.

Concentration-time curve. The curve of concentration in blood (or plasma) versus time is a convenient way of describing uptake and disposition of a xenobiotic.

Area under the curve (AUC) is the integral of concentration in blood (plasma) over time. When metabolic saturation and other non-linear processes are absent, AUC is proportional to the absorbed amount of substance.
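In practice the integral is usually approximated from a limited number of blood samples. The sketch below uses the linear trapezoidal rule, a common numerical choice that the text itself does not specify; the function name and sample values are hypothetical.

```python
def auc_trapezoid(times, concentrations):
    """Approximate the area under a concentration-time curve.

    times          -- sampling times in ascending order (for example hours)
    concentrations -- measured concentrations at those times (for example mg/l)
    """
    if len(times) != len(concentrations) or len(times) < 2:
        raise ValueError("need two or more paired observations")
    total = 0.0
    for i in range(1, len(times)):
        width = times[i] - times[i - 1]
        mean_height = (concentrations[i] + concentrations[i - 1]) / 2.0
        total += width * mean_height
    return total


# Hypothetical samples taken during and after an exposure:
print(auc_trapezoid([0, 1, 2, 4, 8], [0.0, 2.0, 1.6, 1.0, 0.4]))
```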

Biological half-time (or half-life) is the time needed after the end of exposure to reduce the amount in the organism to one-half. As it is often difficult to assess the total amount of a substance, measurements such as the concentration in blood (plasma) are used. The half-time should be used with caution, as it may change, for example, with dose and length of exposure. In addition, many substances have complex decay curves with several half-times.
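For the simplest case of a single half-time, the sketch below relates the half-time to a first-order elimination rate constant (half-time = ln 2 / k) and computes the fraction remaining. First-order elimination is an assumption of this example; as noted above, many substances have more complex decay curves. Function names and values are hypothetical.

```python
import math


def half_time(elimination_rate_constant):
    """Half-time for first-order elimination: ln(2) / k."""
    return math.log(2.0) / elimination_rate_constant


def fraction_remaining(time_since_exposure, half_time_value):
    """Fraction of the amount left after a given time (same time units)."""
    return 0.5 ** (time_since_exposure / half_time_value)


t_half = half_time(0.1)  # hypothetical rate constant of 0.1 per hour
print(t_half, fraction_remaining(2 * t_half, t_half))
```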

Bioavailability is the fraction of an administered dose entering the systemic circulation. In the absence of presystemic clearance, or first-pass metabolism, the fraction is one. In oral exposure presystemic clearance may be due to metabolism within the gastrointestinal content, gut wall or liver. First-pass metabolism will reduce the systemic absorption of the substance and instead increase the absorption of metabolites. This may lead to a different toxicity pattern.

Clearance is the volume of blood (plasma) per unit time completely cleared of a substance. To distinguish from renal clearance, for example, the prefix total, metabolic or blood (plasma) is often added.

Intrinsic clearance is the capacity of endogenous enzymes to transform a substance, and is also expressed in volume per unit time. If the intrinsic clearance in an organ is much lower than the blood flow, the metabolism is said to be capacity limited. Conversely, if the intrinsic clearance is much higher than the blood flow, the metabolism is flow limited.
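The two limiting cases can be illustrated with the so-called well-stirred model of organ clearance, CL = Q x CLint / (Q + CLint). This model is an assumption introduced here for illustration; the text does not name a specific model, and binding in blood is ignored. The function name and the numerical values are hypothetical.

```python
def organ_clearance(blood_flow, intrinsic_clearance):
    """Organ clearance under the assumed well-stirred model.

    Both arguments and the result are in volume per unit time (for example l/h).
    """
    return blood_flow * intrinsic_clearance / (blood_flow + intrinsic_clearance)


liver_blood_flow = 90.0  # l/h, illustrative value only

# Capacity limited: intrinsic clearance much lower than blood flow.
print(organ_clearance(liver_blood_flow, 1.0))
# Flow limited: intrinsic clearance much higher than blood flow.
print(organ_clearance(liver_blood_flow, 9000.0))
```

In the first case the clearance is close to the intrinsic clearance, so changes in enzyme capacity matter most. In the second case it approaches the blood flow, so changes in blood flow (for example during physical activity) matter most.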

Excretion

Excretion is the exit of a substance and its biotransformation products from the organism.

Excretion in urine and bile. The kidneys are the most important excretory organs. Some substances, especially acids with high molecular weights, are excreted with bile. A fraction of biliary excreted substances may be reabsorbed in the intestines. This process, enterohepatic circulation, is common for conjugated substances following intestinal hydrolysis of the conjugate.

Other routes of excretion. Some substances, such as organic solvents and breakdown products such as acetone, are volatile enough so that a considerable fraction may be excreted by exhalation after inhalation. Small water-soluble molecules as well as fat-soluble ones are readily secreted to the foetus via the placenta, and into milk in mammals. For the mother, lactation can be a quantitatively important excretory pathway for persistent fat-soluble chemicals. The offspring may be secondarily exposed via the mother during pregnancy as well as during lactation. Water-soluble compounds may to some extent be excreted in sweat and saliva. These routes are generally of minor importance. However, as a large volume of saliva is produced and swallowed, saliva excretion may contribute to reabsorption of the compound. Some metals such as mercury are excreted by binding permanently to the sulphydryl groups of the keratin in the hair.

Toxicokinetic models

Mathematical models are important tools to understand and describe the uptake and disposition of foreign substances. Most models are compartmental, that is, the organism is represented by one or more compartments. A compartment is a chemically and physically theoretical volume in which the substance is assumed to distribute homogeneously and instantaneously. Simple models may be expressed as a sum of exponential terms, while more complicated ones require numerical procedures on a computer for their solution. Models may be subdivided in two categories, descriptive and physiological.
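A minimal example of a simple compartmental model expressed as a sum of exponential terms is sketched below: one compartment with first-order absorption and first-order elimination. This particular model, the assumption of complete absorption, the function name and all parameter values are illustrative choices, not taken from the text.

```python
import math


def one_compartment_concentration(t, dose, volume, k_abs, k_elim):
    """Concentration at time t in a one-compartment model.

    dose   -- amount absorbed in total (assumed complete absorption)
    volume -- volume of the compartment
    k_abs  -- first-order absorption rate constant
    k_elim -- first-order elimination rate constant
    """
    if k_abs == k_elim:
        raise ValueError("this closed form requires k_abs != k_elim")
    scale = dose * k_abs / (volume * (k_abs - k_elim))
    # Difference of two exponential terms: elimination minus absorption.
    return scale * (math.exp(-k_elim * t) - math.exp(-k_abs * t))


for hour in (0, 1, 2, 4, 8, 24):
    c = one_compartment_concentration(hour, dose=100.0, volume=10.0,
                                      k_abs=1.0, k_elim=0.1)
    print(hour, round(c, 3))
```

The concentration starts at zero, rises while absorption dominates and then declines at a rate governed by the elimination rate constant.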

In descriptive models, fitting to measured data is performed by changing the numerical values of the model parameters or even the model structure itself. The model structure normally has little to do with the structure of the organism. Advantages of the descriptive approach are that few assumptions are made and that there is no need for additional data. A disadvantage of descriptive models is their limited usefulness for extrapolations.

Physiological models are constructed from physiological, anatomical and other independent data. The model is then refined and validated by comparison with experimental data. An advantage of physiological models is that they can be used for extrapolation purposes. For example, the influence of physical activity on the uptake and disposition of inhaled substances may be predicted from known physiological adjustments in ventilation and cardiac output. A disadvantage of physiological models is that they require a large amount of independent data.

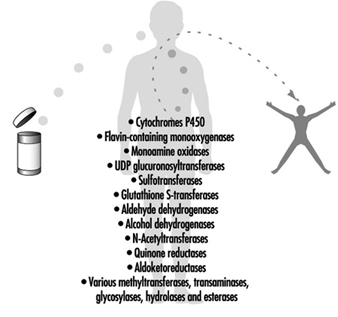

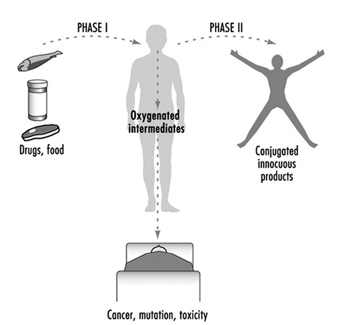

Biotransformation

Biotransformation is a process which leads to a metabolic conversion of foreign compounds (xenobiotics) in the body. The process is often referred to as metabolism of xenobiotics. As a general rule, metabolism converts lipid-soluble xenobiotics to large, water-soluble metabolites that can be effectively excreted.

The liver is the main site of biotransformation. All xenobiotics taken up from the intestine are transported to the liver by a single blood vessel (vena porta). If taken up in small quantities a foreign substance may be completely metabolized in the liver before reaching the general circulation and other organs (first pass effect). Inhaled xenobiotics are distributed via the general circulation to the liver. In that case only a fraction of the dose is metabolized in the liver before reaching other organs.

Liver cells contain several enzymes that oxidize xenobiotics. This oxidation generally activates the compound—it becomes more reactive than the parent molecule. In most cases the oxidized metabolite is further metabolized by other enzymes in a second phase. These enzymes conjugate the metabolite with an endogenous substrate, so that the molecule becomes larger and more polar. This facilitates excretion.

Enzymes that metabolize xenobiotics are also present in other organs such as the lungs and kidneys. In these organs they may play specific and qualitatively important roles in the metabolism of certain xenobiotics. Metabolites formed in one organ may be further metabolized in a second organ. Bacteria in the intestine may also participate in biotransformation.

Metabolites of xenobiotics can be excreted by the kidneys or via the bile. They can also be exhaled via the lungs, or bound to endogenous molecules in the body.

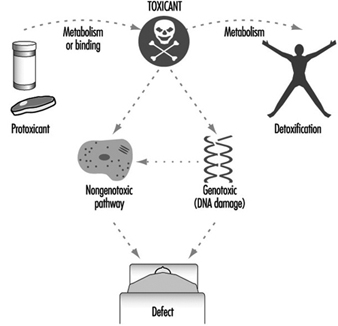

The relationship between biotransformation and toxicity is complex. Biotransformation can be seen as a necessary process for survival. It protects the organism against toxicity by preventing accumulation of harmful substances in the body. However, reactive intermediary metabolites may be formed in biotransformation, and these are potentially harmful. This is called metabolic activation. Thus, biotransformation may also induce toxicity. Oxidized, intermediary metabolites that are not conjugated can bind to and damage cellular structures. If, for example, a xenobiotic metabolite binds to DNA, a mutation can be induced (see “Genetic toxicology”). If the biotransformation system is overloaded, a massive destruction of essential proteins or lipid membranes may occur. This can result in cell death (see “Cellular injury and cellular death”).

Metabolism is a word often used interchangeably with biotransformation. It denotes chemical breakdown or synthesis reactions catalyzed by enzymes in the body. Nutrients from food, endogenous compounds, and xenobiotics are all metabolized in the body.

Metabolic activation means that a less reactive compound is converted to a more reactive molecule. This usually occurs during Phase 1 reactions.

Metabolic inactivation means that an active or toxic molecule is converted to a less active metabolite. This usually occurs during Phase 2 reactions. In certain cases an inactivated metabolite might be reactivated, for example by enzymatic cleavage.

Phase 1 reaction refers to the first step in xenobiotic metabolism. It usually means that the compound is oxidized. Oxidation usually makes the compound more water soluble and facilitates further reactions.

Cytochrome P450 enzymes are a group of enzymes that preferentially oxidize xenobiotics in Phase 1 reactions. The different enzymes are specialized for handling specific groups of xenobiotics with certain characteristics. Endogenous molecules are also substrates. Cytochrome P450 enzymes are induced by xenobiotics in a specific fashion. Obtaining induction data on cytochrome P450 can be informative about the nature of previous exposures (see “Genetic determinants of toxic response”).

Phase 2 reaction refers to the second step in xenobiotic metabolism. It usually means that the oxidized compound is conjugated with (coupled to) an endogenous molecule. This reaction increases the water solubility further. Many conjugated metabolites are actively excreted via the kidneys.

Transferases are a group of enzymes that catalyze Phase 2 reactions. They conjugate xenobiotics with endogenous compounds such as glutathione, amino acids, glucuronic acid or sulphate.

Glutathione is an endogenous molecule, a tripeptide, that is conjugated with xenobiotics in Phase 2 reactions. It is present in all cells (and in liver cells in high concentrations), and usually protects from activated xenobiotics. When glutathione is depleted, toxic reactions between activated xenobiotic metabolites and proteins, lipids or DNA may occur.

Induction means that enzymes involved in biotransformation are increased (in activity or amount) as a response to xenobiotic exposure. In some cases within a few days enzyme activity can be increased several fold. Induction is often balanced so that both Phase 1 and Phase 2 reactions are increased simultaneously. This may lead to a more rapid biotransformation and can explain tolerance. In contrast, unbalanced induction may increase toxicity.

Inhibition of biotransformation can occur if two xenobiotics are metabolized by the same enzyme. The two substrates have to compete, and usually one of the substrates is preferred. In that case the second substrate is not metabolized, or only slowly metabolized. As with induction, inhibition may increase as well as decrease toxicity.

Oxygen activation can be triggered by metabolites of certain xenobiotics. They may auto-oxidize under the production of activated oxygen species. These oxygen-derived species, which include superoxide, hydrogen peroxide and the hydroxyl radical, may damage DNA, lipids and proteins in cells. Oxygen activation is also involved in inflammatory processes.

Genetic variability between individuals is seen in many genes coding for Phase 1 and Phase 2 enzymes. Genetic variability may explain why certain individuals are more susceptible to toxic effects of xenobiotics than others.

Toxicokinetics

The human organism represents a complex biological system on various levels of organization, from the molecular-cellular level to the tissues and organs. The organism is an open system, exchanging matter and energy with the environment through numerous biochemical reactions in a dynamic equilibrium. The environment can be polluted, or contaminated with various toxicants.

Penetration of molecules or ions of toxicants from the work or living environment into such a strongly coordinated biological system can reversibly or irreversibly disturb normal cellular biochemical processes, or even injure and destroy the cell (see “Cellular injury and cellular death”).

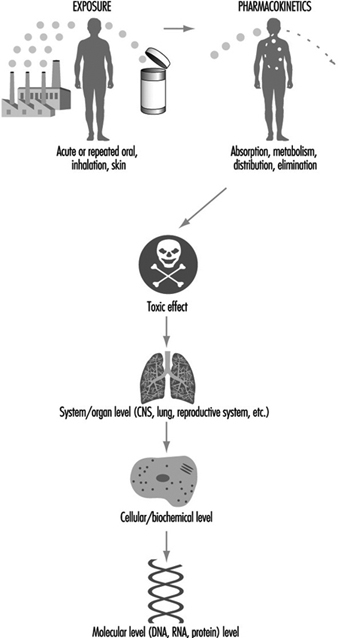

Penetration of a toxicant from the environment to the sites of its toxic effect inside the organism can be divided into three phases:

- The exposure phase encompasses all processes occurring between various toxicants and/or the influence on them of environmental factors (light, temperature, humidity, etc.). Chemical transformations, degradation, biodegradation (by micro-organisms) as well as disintegration of toxicants can occur.

- The toxicokinetic phase encompasses absorption of toxicants into the organism and all the processes which follow: transport by body fluids, distribution and accumulation in tissues and organs, biotransformation to metabolites, and elimination (excretion) of toxicants and/or metabolites from the organism.

- The toxicodynamic phase refers to the interaction of toxicants (molecules, ions, colloids) with specific sites of action on or inside the cells (receptors), ultimately producing a toxic effect.

Here we will focus our attention exclusively on the toxicokinetic processes inside the human organism following exposure to toxicants in the environment.

The molecules or ions of toxicants present in the environment will penetrate into the organism through the skin and mucosa, or the epithelial cells of the respiratory and gastrointestinal tracts, depending on the point of entry. That means molecules and ions of toxicants must penetrate through cellular membranes of these biological systems, as well as through an intricate system of endomembranes inside the cell.

All toxicokinetic and toxicodynamic processes occur on the molecular-cellular level. Numerous factors influence these processes and these can be divided into two basic groups:

- chemical constitution and physicochemical properties of toxicants

- structure of the cell, especially the properties and function of the membranes around the cell and its interior organelles.

Physico-Chemical Properties of Toxicants

In 1854 the Russian toxicologist E.V. Pelikan started studies on the relation between the chemical structure of a substance and its biological activity—the structure activity relationship (SAR). Chemical structure directly determines physico-chemical properties, some of which are responsible for biological activity.

To define the chemical structure numerous parameters can be selected as descriptors, which can be divided into various groups:

1. Physico-chemical:

- general: melting point, boiling point, vapour pressure, dissociation constant (pKa)

- electric: ionization potential, dielectric constant, dipole moment, mass:charge ratio, etc.

- quantum chemical: atomic charge, bond energy, resonance energy, electron density, molecular reactivity, etc.

2. Steric: molecular volume, shape and surface area, substructure shape, molecular reactivity, etc.

3. Structural: number of bonds, number of rings (in polycyclic compounds), extent of branching, etc.

For each toxicant it is necessary to select a set of descriptors related to a particular mechanism of activity. However, from the toxicokinetic point of view two parameters are of general importance for all toxicants:

- The Nernst partition coefficient (P) establishes the solubility of toxicant molecules in the two-phase octanol (oil)-water system, correlating to their lipo- or hydrosolubility. This parameter will greatly influence the distribution and accumulation of toxicant molecules in the organism.

- The dissociation constant (pKa) defines the degree of ionization (electrolytic dissociation) of molecules of a toxicant into charged cations and anions at a particular pH. This constant represents the pH at which 50% ionization is achieved. Molecules can be lipophilic or hydrophilic, but ions are soluble exclusively in the water of body fluids and tissues. Knowing the pKa, it is possible to calculate the degree of ionization of a substance for each pH using the Henderson-Hasselbalch equation.
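The calculation of the degree of ionization from pKa and pH can be sketched as follows. This is a minimal illustration that assumes a simple monoprotic weak acid or base; the function name `ionized_fraction` and the example pKa of 4.0 are hypothetical and are not taken from the text.

```python
def ionized_fraction(pka: float, ph: float, acid: bool = True) -> float:
    """Fraction of a monoprotic weak acid or base that is ionized at a given pH.

    Henderson-Hasselbalch: pH = pKa + log10([base form] / [acid form]).
    For an acid the ionized species is the base form (A-);
    for a base the ionized species is the acid form (BH+).
    """
    ratio = 10.0 ** (ph - pka)  # [base form] / [acid form]
    if acid:
        return ratio / (1.0 + ratio)
    return 1.0 / (1.0 + ratio)


# Hypothetical weak acid with pKa 4.0 (illustrative values only)
for ph in (2.0, 4.0, 7.4):
    print(f"pH {ph}: {ionized_fraction(4.0, ph):.4f} ionized")
```

When the pH equals the pKa the function returns 0.5, in line with the definition of pKa as the pH of 50% ionization. At a pH well below its pKa a weak acid is predominantly non-ionized.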

For inhaled dusts and aerosols, the particle size, shape, surface area and density also influence their toxicokinetics and toxicodynamics.

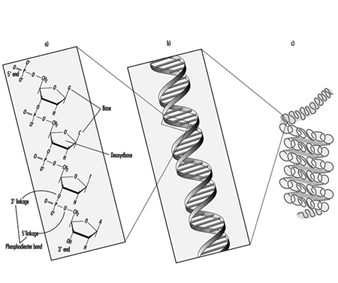

Structure and Properties of Membranes

The eukaryotic cell of human and animal organisms is encircled by a cytoplasmic membrane regulating the transport of substances and maintaining cell homeostasis. The cell organelles (nucleus, mitochondria) possess membranes too. The cell cytoplasm is compartmentalized by intricate membranous structures, the endoplasmic reticulum and Golgi complex (endomembranes). All these membranes are structurally alike, but vary in the content of lipids and proteins.

The structural framework of membranes is a bilayer of lipid molecules (phospholipids, sphingolipids, cholesterol). The backbone of a phospholipid molecule is glycerol with two of its -OH groups esterified by aliphatic fatty acids with 16 to 18 carbon atoms, and the third group esterified by a phosphate group and a nitrogenous compound (choline, ethanolamine, serine). In sphingolipids, sphingosine is the base.

The lipid molecule is amphipathic because it consists of a polar hydrophilic “head” (amino alcohol, phosphate, glycerol) and a non-polar twin “tail” (fatty acids). The lipid bilayer is arranged so that the hydrophilic heads constitute the outer and inner surfaces of the membrane and the lipophilic tails are stretched toward the membrane interior, which contains water, various ions and molecules.

Proteins and glycoproteins are inserted into the lipid bilayer (intrinsic proteins) or attached to the membrane surface (extrinsic proteins). These proteins contribute to the structural integrity of the membrane, but they may also perform as enzymes, carriers, pore walls or receptors.

The membrane represents a dynamic structure which can be disintegrated and rebuilt with a different proportion of lipids and proteins, according to functional needs.

Regulation of transport of substances into and out of the cell represents one of the basic functions of outer and inner membranes.

Some lipophilic molecules pass directly through the lipid bilayer. Hydrophilic molecules and ions are transported via pores. Membranes respond to changing conditions by opening or sealing certain pores of various sizes.

The following processes and mechanisms are involved in the transport of substances, including toxicants, through membranes:

- diffusion through lipid bilayer

- diffusion through pores

- transport by a carrier (facilitated diffusion).

Active processes:

- active transport by a carrier

- endocytosis (pinocytosis).

Diffusion

This represents the movement of molecules and ions through the lipid bilayer or pores from a region of high concentration, or high electric potential, to a region of low concentration or potential (“downhill”). The difference in concentration or electric charge is the driving force influencing the intensity of the flux in both directions. In the equilibrium state, influx will be equal to efflux. The rate of diffusion follows Fick’s law, which states that it is directly proportional to the available surface of the membrane, the difference in concentration (charge) gradient and the characteristic diffusion coefficient, and inversely proportional to the membrane thickness.
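The proportionalities stated by this law can be sketched in a few lines of Python. This is only an illustration for an idealized flat membrane; the function name `fick_diffusion_rate` and all numerical values are hypothetical and chosen solely to show how the rate scales.

```python
def fick_diffusion_rate(diff_coeff: float, area: float,
                        delta_conc: float, thickness: float) -> float:
    """Rate of diffusion across a flat membrane (Fick's first law).

    rate = diff_coeff * area * delta_conc / thickness
    A positive result means net flux from the high- to the
    low-concentration side.
    """
    if thickness <= 0:
        raise ValueError("membrane thickness must be positive")
    return diff_coeff * area * delta_conc / thickness


# Hypothetical values, chosen only to show the proportionalities
thin = fick_diffusion_rate(1e-9, 1e-4, 2.0, 1e-6)
thick = fick_diffusion_rate(1e-9, 1e-4, 2.0, 2e-6)
print(thin / thick)  # doubling the thickness halves the rate
```

With a zero concentration difference the function returns zero net flux, which corresponds to the equilibrium state in which influx equals efflux.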

Small lipophilic molecules pass easily through the lipid layer of membrane, according to the Nernst partition coefficient.

Large lipophilic molecules, water soluble molecules and ions will use aqueous pore channels for their passage. Size and stereoconfiguration will influence passage of molecules. For ions, besides size, the type of charge will be decisive. The protein molecules of pore walls can gain positive or negative charge. Narrow pores tend to be selective: negatively charged ligands will allow passage only for cations, and positively charged ligands will allow passage only for anions. With the increase of pore diameter hydrodynamic flow is dominant, allowing free passage of ions and molecules, according to Poiseuille’s law. This filtration is a consequence of the osmotic gradient. In some cases ions can penetrate through specific complex molecules (ionophores), which can be produced by micro-organisms with antibiotic effects (nonactin, valinomycin, gramicidin, etc.).
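The strong dependence of hydrodynamic flow on pore diameter can be illustrated with Poiseuille’s law for laminar flow through a cylindrical tube. Treating a membrane pore as an ideal cylinder is a simplifying assumption made here, and the function name and numerical values below are hypothetical.

```python
import math


def poiseuille_flow(radius: float, length: float,
                    delta_p: float, viscosity: float) -> float:
    """Volumetric flow through a cylindrical tube (Poiseuille's law).

    Q = pi * r**4 * delta_p / (8 * viscosity * length)
    """
    if length <= 0 or viscosity <= 0:
        raise ValueError("length and viscosity must be positive")
    return math.pi * radius ** 4 * delta_p / (8.0 * viscosity * length)


# Hypothetical pore dimensions and pressure difference (SI units)
narrow = poiseuille_flow(1e-9, 5e-9, 1e3, 1e-3)
wide = poiseuille_flow(2e-9, 5e-9, 1e3, 1e-3)
print(wide / narrow)  # flow scales with the fourth power of the radius
```

Because the radius enters to the fourth power, a modest widening of a pore produces a disproportionately large increase in flow.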

Facilitated or catalyzed diffusion

This requires the presence of a carrier in the membrane, usually a protein molecule (permease). The carrier selectively binds substances, resembling a substrate-enzyme complex. Similar molecules (including toxicants) can compete for the specific carrier until its saturation point is reached. Toxicants can compete for the carrier and when they are irreversibly bound to it the transport is blocked. The rate of transport is characteristic for each type of carrier. If transport is performed in both directions, it is called exchange diffusion.
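Saturation of a carrier and competition between similar molecules can be sketched with a Michaelis-Menten type expression, by analogy with the substrate-enzyme complex mentioned above. The text does not name an equation, so the functional form, the function name `carrier_flux` and the parameters `v_max`, `k_m` and `k_i` are assumptions introduced for illustration. The sketch covers reversible competition only, not irreversible blocking of the carrier.

```python
def carrier_flux(conc: float, v_max: float, k_m: float,
                 competitor: float = 0.0, k_i: float = 1.0) -> float:
    """Carrier-mediated transport rate with an optional competing substance.

    Assumed form (Michaelis-Menten with competitive inhibition):
        rate = v_max * C / (k_m * (1 + I / k_i) + C)
    """
    apparent_km = k_m * (1.0 + competitor / k_i)
    return v_max * conc / (apparent_km + conc)


# Hypothetical parameters in arbitrary units
print(carrier_flux(1.0, 10.0, 1.0))                  # half-maximal rate
print(carrier_flux(1e6, 10.0, 1.0))                  # close to saturation
print(carrier_flux(1.0, 10.0, 1.0, competitor=1.0))  # reduced by competition
```

The rate levels off near `v_max` at high concentrations, which corresponds to the saturation point of the carrier, and a competing molecule lowers the rate at any given concentration.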

Active transport

For transport of some substances vital for the cell, a special type of carrier is used, transporting against the concentration gradient or electric potential (“uphill”). The carrier is very stereospecific and can be saturated.

For uphill transport, energy is required. The necessary energy is obtained by catalytic cleavage of ATP molecules to ADP by the enzyme adenosine triphosphatase (ATP-ase).

Toxicants can interfere with this transport by competitive or non-competitive inhibition of the carrier or by inhibition of ATP-ase activity.

Endocytosis

Endocytosis is defined as a transport mechanism in which the cell membrane encircles material by infolding to form a vesicle that transports it through the cell. When the material is liquid, the process is termed pinocytosis. In some cases the material is bound to a receptor and this complex is transported by a membrane vesicle. This type of transport is especially used by epithelial cells of the gastrointestinal tract, and cells of the liver and kidneys.

Absorption of Toxicants

People are exposed to numerous toxicants present in the work and living environment, which can penetrate into the human organism by three main portals of entry:

- via the respiratory tract by inhalation of polluted air

- via the gastrointestinal tract by ingestion of contaminated food, water and drinks

- through the skin by dermal, cutaneous penetration.

In the case of exposure in industry, inhalation represents the dominant way of entry of toxicants, followed by dermal penetration. In agriculture, pesticide exposure via dermal absorption is almost equal to cases of combined inhalation and dermal penetration. The general population is mostly exposed by ingestion of contaminated food, water and beverages, then by inhalation and less often by dermal penetration.

Absorption via the respiratory tract

Absorption in the lungs represents the main route of uptake for numerous airborne toxicants (gases, vapours, fumes, mists, smokes, dusts, aerosols, etc.).

The respiratory tract (RT) represents an ideal gas-exchange system possessing a membrane with a surface of 30 m² (expiration) to 100 m² (deep inspiration), behind which a network of about 2,000 km of capillaries is located. The system, developed through evolution, is accommodated into a relatively small space (chest cavity) protected by ribs.

Anatomically and physiologically the RT can be divided into three compartments:

- the upper part of the RT, or nasopharyngeal (NP) compartment, starting at the nares and extending to the pharynx and larynx; this part serves as an air-conditioning system

- the tracheo-bronchial tree (TB), encompassing numerous tubes of various sizes, which bring air to the lungs

- the pulmonary compartment (P), which consists of millions of alveoli (air-sacs) arranged in grapelike clusters.

Hydrophilic toxicants are easily absorbed by the epithelium of the nasopharyngeal region. The whole epithelium of the NP and TB regions is covered by a film of water. Lipophilic toxicants are partially absorbed in the NP and TB, but mostly in the alveoli by diffusion through alveolo-capillary membranes. The absorption rate depends on lung ventilation, cardiac output (blood flow through lungs), solubility of toxicant in blood and its metabolic rate.

In the alveoli, gas exchange is carried out. The alveolar wall is made up of an epithelium, an interstitial framework of basement membrane, connective tissue and the capillary endothelium. The diffusion of toxicants is very rapid through these layers, which have a thickness of about 0.8 μm. In alveoli, toxicant is transferred from the air phase into the liquid phase (blood). The rate of absorption (air to blood distribution) of a toxicant depends on its concentration in alveolar air and the Nernst partition coefficient for blood (solubility coefficient).

In the blood the toxicant can be dissolved in the liquid phase by simple physical processes or bound to the blood cells and/or plasma constituents according to chemical affinity or by adsorption. The water content of blood is 75% and, therefore, hydrophilic gases and vapours show a high solubility in plasma (e.g., alcohols). Lipophilic toxicants (e.g., benzene) are usually bound to cells or macromolecules such as albumin.

From the very beginning of exposure in the lungs, two opposite processes are occurring: absorption and desorption. The equilibrium between these processes depends on the concentration of toxicant in alveolar air and blood. At the onset of exposure the toxicant concentration in the blood is 0 and retention in blood is almost 100%. With continuation of exposure, an equilibrium between absorption and desorption is attained. Hydrophilic toxicants will rapidly attain equilibrium, and the rate of absorption depends on pulmonary ventilation rather than on blood flow. Lipophilic toxicants need a longer time to achieve equilibrium, and here the flow of unsaturated blood governs the rate of absorption.
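The approach to equilibrium described above can be sketched with a deliberately simplified single-compartment model. The assumptions are loud ones: constant concentration in alveolar air, first-order uptake, and no distribution to tissues or biotransformation. The rate constant `k`, the function names and the numerical values are hypothetical and serve only to show the shape of the curves.

```python
import math


def blood_concentration(t: float, c_air: float,
                        partition_coeff: float, k: float) -> float:
    """Blood concentration at time t under constant alveolar concentration.

    Assumed first-order approach to the equilibrium value
    c_eq = partition_coeff * c_air (blood:air partition coefficient).
    """
    c_eq = partition_coeff * c_air
    return c_eq * (1.0 - math.exp(-k * t))


def retention(t: float, k: float) -> float:
    """Relative net uptake at time t (1.0 = 100% at the onset of exposure)."""
    return math.exp(-k * t)


# Hypothetical toxicants: one reaching equilibrium quickly, one slowly
for label, k in (("fast equilibrium", 1.0), ("slow equilibrium", 0.05)):
    print(label, [round(retention(t, k), 3) for t in (0, 1, 5, 20)])
```

In this sketch retention starts at 100% when the blood concentration is zero and falls as the blood approaches equilibrium with alveolar air; a smaller `k` stands for a toxicant that needs longer to reach equilibrium.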

Deposition of particles and aerosols in the RT depends on physical and physiological factors, as well as particle size. In short, the smaller the particle the deeper it will penetrate into the RT.

Relatively constant low retention of dust particles in the lungs of persons who are highly exposed (e.g., miners) suggests the existence of a very efficient system for the clearance of particles. In the upper part of the RT (tracheo-bronchial) a mucociliary blanket performs the clearance. In the pulmonary part, three different mechanisms are at work: (1) mucociliary blanket, (2) phagocytosis and (3) direct penetration of particles through the alveolar wall.

The first 17 of the 23 branchings of the tracheo-bronchial tree possess ciliated epithelial cells. By their strokes these cilia constantly move a mucous blanket toward the mouth. Particles deposited on this mucociliary blanket will be swallowed in the mouth (ingestion). A mucous blanket also covers the surface of the alveolar epithelium, moving toward the mucociliary blanket. Additionally the specialized moving cells—phagocytes—engulf particles and micro-organisms in the alveoli and migrate in two possible directions:

- toward the mucociliary blanket, which transports them to the mouth

- through the intercellular spaces of the alveolar wall to the lymphatic system of the lungs; also particles can directly penetrate by this route.

Absorption via gastrointestinal tract

Toxicants can be ingested in the case of accidental swallowing, intake of contaminated food and drinks, or swallowing of particles cleared from the RT.

The entire alimentary canal, from oesophagus to anus, is basically built in the same way. A mucous layer (epithelium) is supported by connective tissue and then by a network of capillaries and smooth muscle. The surface epithelium of the stomach is very wrinkled to increase the absorption/secretion surface area. The intestinal area contains numerous small projections (villi), which are able to absorb material by “pumping in”. The active area for absorption in the intestines is about 100 m².

In the gastrointestinal tract (GIT) all absorption processes are very active:

- transcellular transport by diffusion through the lipid layer and/or pores of cell membranes, as well as pore filtration

- paracellular diffusion through junctions between cells

- facilitated diffusion and active transport

- endocytosis and the pumping mechanism of the villi.

Some toxic metal ions use specialized transport systems for essential elements: thallium, cobalt and manganese use the iron system, while lead appears to use the calcium system.

Many factors influence the rate of absorption of toxicants in various parts of the GIT:

- physico-chemical properties of toxicants, especially the Nernst partition coefficient and the dissociation constant; for particles, particle size is important—the smaller the size, the higher the solubility

- quantity of food present in the GIT (diluting effect)

- residence time in each part of the GIT (from a few minutes in the mouth to one hour in the stomach to many hours in the intestines)

- the absorption area and absorption capacity of the epithelium

- local pH, which governs absorption of dissociated toxicants; in the acid pH of the stomach, non-dissociated acidic compounds will be more quickly absorbed

- peristalsis (movement of intestines by muscles) and local blood flow

- gastric and intestinal secretions, which transform toxicants into more or less soluble products; bile is an emulsifying agent producing more soluble complexes (hydrotropy)

- combined exposure to other toxicants, which can produce synergistic or antagonistic effects in absorption processes

- presence of complexing/chelating agents

- the action of the microflora of the GIT (about 1.5 kg), about 60 different bacterial species which can perform biotransformation of toxicants.

It is also necessary to mention the enterohepatic circulation. Polar toxicants and/or metabolites (glucuronides and other conjugates) are excreted with the bile into the duodenum. Here the enzymes of the microflora perform hydrolysis and liberated products can be reabsorbed and transported by the portal vein into the liver. This mechanism is very dangerous in the case of hepatotoxic substances, enabling their temporary accumulation in the liver.

In the case of toxicants biotransformed in the liver to less toxic or non-toxic metabolites, ingestion may represent a less dangerous portal of entry. After absorption in the GIT these toxicants will be transported by the portal vein to the liver, and there they can be partially detoxified by biotransformation.

Absorption through the skin (dermal, percutaneous)

The skin (1.8 m² of surface in a human adult), together with the mucous membranes of the body orifices, covers the surface of the body. It represents a barrier against physical, chemical and biological agents, maintaining the body integrity and homeostasis and performing many other physiological tasks.

Basically the skin consists of three layers: epidermis, true skin (dermis) and subcutaneous tissue (hypodermis). From the toxicological point of view the epidermis is of most interest here. It is built of many layers of cells. A horny surface of flattened, dead cells (stratum corneum) is the top layer, under which a continuous layer of living cells (stratum corneum compactum) is located, followed by a typical lipid membrane, and then by stratum lucidum, stratum granulosum and stratum mucosum. The lipid membrane represents a protective barrier, but in hairy parts of the skin, both hair follicles and sweat gland channels penetrate through it. Therefore, dermal absorption can occur by the following mechanisms:

- transepidermal absorption by diffusion through the lipid membrane (barrier), mostly by lipophilic substances (organic solvents, pesticides, etc.) and to a small extent by some hydrophilic substances through pores

- transfollicular absorption around the hair stalk into the hair follicle, bypassing the membrane barrier; this absorption occurs only in hairy areas of skin

- absorption via the ducts of sweat glands, which have a cross-sectional area of about 0.1 to 1% of the total skin area (relative absorption is in this proportion)

- absorption through skin when injured mechanically, thermally, chemically or by skin diseases; here the skin layers, including lipid barrier, are disrupted and the way is open for toxicants and harmful agents to enter.

The rate of absorption through the skin will depend on many factors:

- concentration of toxicant, type of vehicle (medium), presence of other substances

- water content of skin, pH, temperature, local blood flow, perspiration, surface area of contaminated skin, thickness of skin

- anatomical and physiological characteristics of the skin due to sex, age, individual variations, differences occurring in various ethnic groups and races, etc.

Transport of Toxicants by Blood and Lymph

After absorption by any of these portals of entry, toxicants will reach the blood, lymph or other body fluids. The blood represents the major vehicle for transport of toxicants and their metabolites.

Blood is a fluid circulating organ, transporting necessary oxygen and vital substances to the cells and removing waste products of metabolism. Blood also contains cellular components, hormones, and other molecules involved in many physiological functions. Blood flows inside a relatively well closed, high-pressure circulatory system of blood vessels, pushed by the activity of the heart. Due to high pressure, leakage of fluid occurs. The lymphatic system represents the drainage system, in the form of a fine mesh of small, thin-walled lymph capillaries branching through the soft tissues and organs.

Blood is a mixture of a liquid phase (plasma, 55%) and solid blood cells (45%). Plasma contains proteins (albumins, globulins, fibrinogen), organic acids (lactic, glutamic, citric) and many other substances (lipids, lipoproteins, glycoproteins, enzymes, salts, xenobiotics, etc.). Blood cell elements include erythrocytes (Er), leukocytes, reticulocytes, monocytes, and platelets.

Toxicants are absorbed as molecules and ions. Some toxicants at blood pH form colloid particles as a third form in this liquid. Molecules, ions and colloids of toxicants have various possibilities for transport in blood:

- to be physically or chemically bound to the blood elements, mostly Er

- to be physically dissolved in plasma in a free state

- to be bound to one or more types of plasma proteins, complexed with the organic acids or attached to other fractions of plasma.

Most of the toxicants in blood exist partially in a free state in plasma and partially bound to erythrocytes and plasma constituents. The distribution depends on the affinity of toxicants to these constituents. All fractions are in a dynamic equilibrium.

Some toxicants are transported by the blood elements—mostly by erythrocytes, very rarely by leukocytes. Toxicants can be adsorbed on the surface of Er, or can bind to the ligands of stroma. If they penetrate into Er they can bind to the haem (e.g. carbon monoxide and selenium) or to the globin (Sb111, Po210). Some toxicants transported by Er are arsenic, cesium, thorium, radon, lead and sodium. Hexavalent chromium is exclusively bound to the Er and trivalent chromium to the proteins of plasma. For zinc, competition between Er and plasma occurs. About 96% of lead is transported by Er. Organic mercury is mostly bound to Er and inorganic mercury is carried mostly by plasma albumin. Small fractions of beryllium, copper, tellurium and uranium are carried by Er.

The majority of toxicants are transported by plasma or plasma proteins. Many electrolytes are present as ions in an equilibrium with non-dissociated molecules free or bound to the plasma fractions. This ionic fraction of toxicants is very diffusible, penetrating through the walls of capillaries into tissues and organs. Gases and vapours can be dissolved in the plasma.

Plasma proteins possess a total surface area of about 600 to 800 km² offered for absorption of toxicants. Albumin molecules possess about 109 cationic and 120 anionic ligands at the disposal of ions. Many ions are partially carried by albumin (e.g., copper, zinc and cadmium), as are such compounds as dinitro- and ortho-cresols, nitro- and halogenated derivatives of aromatic hydrocarbons, and phenols.

Globulin molecules (alpha and beta) transport small molecules of toxicants as well as some metallic ions (copper, zinc and iron) and colloid particles. Fibrinogen shows affinity for certain small molecules. Many types of bonds can be involved in binding of toxicants to plasma proteins: Van der Waals forces, attraction of charges, association between polar and non-polar groups, hydrogen bridges, covalent bonds.

Plasma lipoproteins transport lipophilic toxicants such as PCBs. The other plasma fractions serve as a transport vehicle too. The affinity of toxicants for plasma proteins suggests their affinity for proteins in tissues and organs during distribution.

Organic acids (lactic, glutamic, citric) form complexes with some toxicants. Alkaline earths and rare earths, as well as some heavy elements in the form of cations, are complexed also with organic oxy- and amino acids. All these complexes are usually diffusible and easily distributed in tissues and organs.

Physiological chelating agents in plasma such as transferrin and metallothionein compete with organic acids and amino acids for cations to form stable chelates.

Diffusible free ions, some complexes and some free molecules are easily cleared from the blood into tissues and organs. The free fraction of ions and molecules is in a dynamic equilibrium with the bound fraction. The concentration of a toxicant in blood will govern the rate of its distribution into tissues and organs, or its mobilization from them into the blood.

Distribution of Toxicants in the Organism

The human organism can be divided into the following compartments: (1) internal organs, (2) skin and muscles, (3) adipose tissues, (4) connective tissue and bones. This classification is mostly based on the degree of vascular (blood) perfusion in a decreasing order. For example, internal organs (including the brain), which represent only 12% of the total body weight, receive about 75% of the total blood volume. On the other hand, connective tissues and bones (15% of total body weight) receive only one per cent of the total blood volume.
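The contrast in perfusion between these compartments becomes clearer when the blood share is related to the share of body weight. The short calculation below uses only the percentages quoted above; the function name `relative_perfusion` and the dictionary layout are hypothetical.

```python
def relative_perfusion(body_weight_pct: float, blood_pct: float) -> float:
    """Share of blood received per unit share of body weight."""
    return blood_pct / body_weight_pct


# Percentages as quoted in the text
compartments = {
    "internal organs": (12.0, 75.0),
    "connective tissue and bones": (15.0, 1.0),
}

for name, (weight_pct, blood_pct) in compartments.items():
    print(f"{name}: {relative_perfusion(weight_pct, blood_pct):.3f}")

ratio = relative_perfusion(12.0, 75.0) / relative_perfusion(15.0, 1.0)
print(f"internal organs are perfused about {ratio:.0f} times more intensely")
```

On these figures the internal organs receive roughly two orders of magnitude more blood per unit of weight than connective tissue and bones.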

The well-perfused internal organs generally achieve the highest concentration of toxicants in the shortest time, as well as an equilibrium between blood and this compartment. The uptake of toxicants by less perfused tissues is much slower, but retention is higher and duration of stay much longer (accumulation) due to low perfusion.

Three components are of major importance for the intracellular distribution of toxicants: content of water, lipids and proteins in the cells of various tissues and organs. The above-mentioned order of compartments also follows closely a decreasing water content in their cells. Hydrophilic toxicants will be more rapidly distributed to the body fluids and cells with high water content, and lipophilic toxicants to cells with higher lipid content (fatty tissue).

The organism possesses some barriers which impair penetration of some groups of toxicants, mostly hydrophilic, to certain organs and tissues, such as:

- the blood-brain barrier (cerebrospinal barrier), which restricts penetration of large molecules and hydrophilic toxicants to the brain and CNS; this barrier consists of a closely joined layer of endothelial cells, through which lipophilic toxicants can nevertheless penetrate

- the placental barrier, which has a similar effect on penetration of toxicants into the foetus from the blood of the mother

- the histo-haematologic barrier in the walls of capillaries, which is permeable for small- and intermediate-sized molecules, and for some larger molecules, as well as ions.

As previously noted only the free forms of toxicants in plasma (molecules, ions, colloids) are available for penetration through the capillary walls participating in distribution. This free fraction is in a dynamic equilibrium with the bound fraction. Concentration of toxicants in blood is in a dynamic equilibrium with their concentration in organs and tissues, governing retention (accumulation) or mobilization from them.

The condition of the organism, functional state of organs (especially neuro-humoral regulation), hormonal balance and other factors play a role in distribution.

Retention of toxicant in a particular compartment is generally temporary and redistribution into other tissues can occur. Retention and accumulation is based on the difference between the rates of absorption and elimination. The duration of retention in a compartment is expressed by the biological half-life. This is the time interval in which 50% of the toxicant is cleared from the tissue or organ and redistributed, translocated or eliminated from the organism.
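The meaning of the biological half-life can be illustrated numerically. The sketch below assumes first-order (exponential) clearance, which is the usual setting in which a single half-life describes a compartment; the text itself gives only the 50% definition, and the function names and values are hypothetical.

```python
import math


def remaining_fraction(elapsed: float, half_life: float) -> float:
    """Fraction of toxicant still in the compartment after `elapsed` time.

    Assumes first-order clearance: each half-life removes 50% of what is left.
    """
    if half_life <= 0:
        raise ValueError("half-life must be positive")
    return 0.5 ** (elapsed / half_life)


def elimination_rate_constant(half_life: float) -> float:
    """First-order rate constant corresponding to a half-life: k = ln 2 / t_half."""
    return math.log(2.0) / half_life


# Hypothetical half-life of 10 time units
for t in (0, 10, 20, 30):
    print(t, remaining_fraction(t, 10.0))
```

Elapsed time and half-life must be given in the same unit; after one half-life half of the toxicant remains, after two a quarter, and so on.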

Biotransformation processes occur during distribution and retention in various organs and tissues. Biotransformation produces more polar, more hydrophilic metabolites, which are more easily eliminated. A low rate of biotransformation of a lipophilic toxicant will generally cause its accumulation in a compartment.

The toxicants can be divided into four main groups according to their affinity, predominant retention and accumulation in a particular compartment:

- Toxicants soluble in the body fluids are uniformly distributed according to the water content of compartments. Many monovalent cations (e.g., lithium, sodium, potassium, rubidium) and some anions (e.g., chlorine, bromine), are distributed according to this pattern.

- Lipophilic toxicants show a high affinity for lipid-rich organs (CNS) and tissues (fatty, adipose).

- Toxicants forming colloid particles are then trapped by specialized cells of the reticuloendothelial system (RES) of organs and tissues. Tri- and quadrivalent cations (lanthanum, cerium, hafnium) are distributed in the RES of tissues and organs.

- Toxicants showing a high affinity for bones and connective tissue (osteotropic elements, bone seekers) include divalent cations (e.g., calcium, barium, strontium, radium, beryllium, aluminium, cadmium, lead).

Accumulation in lipid-rich tissues

The “standard man” of 70 kg body weight contains about 15% of body weight in the form of adipose tissue, increasing with obesity to 50%. However, this lipid fraction is not uniformly distributed. The brain (CNS) is a lipid-rich organ, and peripheral nerves are wrapped with a lipid-rich myelin sheath and Schwann cells. All these tissues offer possibilities for accumulation of lipophilic toxicants.

Numerous non-electrolytes and non-polar toxicants with a suitable Nernst partition coefficient will be distributed to this compartment, as well as numerous organic solvents (alcohols, aldehydes, ketones, etc.), chlorinated hydrocarbons (including organochlorine insecticides such as DDT), some inert gases (radon), etc.
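What a “suitable” partition coefficient means can be made concrete with a small calculation. The sketch below takes the partition coefficient as the ratio of equilibrium concentrations in the octanol and water phases and reports it on the customary logarithmic scale; the function names and concentrations are hypothetical and do not describe any particular toxicant.

```python
import math


def partition_coefficient(conc_octanol: float, conc_water: float) -> float:
    """Octanol-water partition coefficient P = C_octanol / C_water."""
    if conc_octanol <= 0 or conc_water <= 0:
        raise ValueError("concentrations must be positive")
    return conc_octanol / conc_water


def log_p(conc_octanol: float, conc_water: float) -> float:
    """log10 of the partition coefficient; positive values indicate lipophilicity."""
    return math.log10(partition_coefficient(conc_octanol, conc_water))


# Hypothetical equilibrium concentrations (same units in both phases)
print(log_p(100.0, 1.0))  # lipophilic: favours the octanol (lipid-like) phase
print(log_p(1.0, 10.0))   # hydrophilic: favours the water phase
```

A substance with a positive log P favours the lipid-like phase and is a candidate for distribution into lipid-rich tissues; one with a negative log P stays mainly in the aqueous phase.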

Adipose tissue will accumulate toxicants due to its low vascularization and lower rate of biotransformation. Here accumulation of toxicants may represent a kind of temporary “neutralization” because of lack of targets for toxic effect. However, potential danger for the organism is always present due to the possibility of mobilization of toxicants from this compartment back to the circulation.

Deposition of toxicants in the brain (CNS) or lipid-rich tissue of the myelin sheath of the peripheral nervous system is very dangerous. The neurotoxicants are deposited here directly next to their targets. Toxicants retained in lipid-rich tissue of the endocrine glands can produce hormonal disturbances. Despite the blood-brain barrier, numerous neurotoxicants of a lipophilic nature reach the brain (CNS): anaesthetics, organic solvents, pesticides, tetraethyl lead, organomercurials, etc.

Retention in the reticuloendothelial system

In each tissue and organ a certain percentage of cells is specialized for phagocytic activity, engulfing micro-organisms, particles, colloid particles, and so on. This system is called the reticuloendothelial system (RES) and comprises fixed cells as well as mobile cells (phagocytes). These cells are normally present in an inactive form. An increased load of micro-organisms and particles activates the cells up to a saturation point.

Toxicants in the form of colloids will be captured by the RES of organs and tissues. Distribution depends on the colloid particle size. For larger particles, retention in the liver will be favoured. With smaller colloid particles, more or less uniform distribution will occur between the spleen, bone marrow and liver. Clearance of colloids from the RES is very slow, although small particles are cleared relatively more quickly.

Accumulation in bones

About 60 elements can be identified as osteotropic elements, or bone seekers.

Osteotropic elements can be divided into three groups:

- Elements representing or replacing physiological constituents of the bone. Twenty such elements are present in higher quantities. The others appear in trace quantities. Under conditions of chronic exposure, toxic metals such as lead, aluminium and mercury can also enter the mineral matrix of bone.

- Alkaline earths and other elements forming cations with an ionic diameter similar to that of calcium are exchangeable with it in bone mineral. Also, some anions are exchangeable with anions (phosphate, hydroxyl) of bone mineral.

- Elements forming microcolloids (rare earths) may be adsorbed on the surface of bone mineral.

The skeleton of a standard man accounts for 10 to 15% of the total body weight, representing a large potential storage depot for osteotropic toxicants. Bone is a highly specialized tissue consisting by volume of 54% minerals and 38% organic matrix. The mineral matrix of bone is hydroxyapatite, Ca10(PO4)6(OH)2, in which the ratio of Ca to P is about 1.5 to one. The surface area of mineral available for adsorption is about 100 m² per g of bone.

Metabolic activity of the bones of the skeleton can be divided in two categories:

- active, metabolic bone, in which processes of resorption and new bone formation, or remodelling of existing bone, are very extensive

- stable bone with a low rate of remodelling or growth.

In the fetus, infant and young child metabolic bone (see “available skeleton”) represents almost 100% of the skeleton. With age this percentage of metabolic bone decreases. Incorporation of toxicants during exposure appears in the metabolic bone and in more slowly turning-over compartments.

Incorporation of toxicants into bone occurs in two ways:

- For ions, an ion exchange occurs with physiologically present calcium cations, or anions (phosphate, hydroxyl).

- For toxicants forming colloid particles, adsorption on the mineral surface occurs.

Ion-exchange reactions

The bone mineral, hydroxyapatite, represents a complex ion-exchange system. Calcium cations can be exchanged by various cations. The anions present in bone can also be exchanged by anions: phosphate with citrates and carbonates, hydroxyl with fluoride. Ions which are not exchangeable can be adsorbed on the mineral surface. When toxicant ions are incorporated in the mineral, a new layer of mineral can cover the mineral surface, burying the toxicant in the bone structure. Ion exchange is a reversible process, depending on the concentration of ions, pH and fluid volume. Thus, for example, an increase of dietary calcium may decrease the deposition of toxicant ions in the lattice of minerals. As mentioned above, the percentage of metabolic bone decreases with age, although ion exchange continues. With ageing, bone mineral resorption occurs and bone density decreases. At this point, toxicants in bone (e.g., lead) may be released.

About 30% of the ions incorporated into bone minerals are loosely bound and can be exchanged, captured by natural chelating agents and excreted, with a biological half-life of 15 days. The other 70% is more firmly bound. Mobilization and excretion of this fraction shows a biological half-life of 2.5 years and more depending on bone type (remodelling processes).
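The two fractions described above can be illustrated with a simple two-term retention calculation. This is a minimal sketch, not a validated model: it assumes that each fraction is lost by an independent first-order process, that no re-uptake occurs, and that the firmly bound fraction has a half-life of exactly 2.5 years (the text gives this only as a lower bound). The function names are chosen here for illustration.

```python
def fraction_remaining(t_days, half_life_days):
    """First-order loss: fraction of a pool still present after t_days."""
    return 0.5 ** (t_days / half_life_days)


def bone_retention(t_days,
                   loose_fraction=0.30,            # loosely bound share (from the text)
                   loose_half_life_days=15.0,      # half-life of that share (from the text)
                   firm_half_life_days=2.5 * 365.0):  # ASSUMPTION: lower bound of "2.5 years and more"
    """Fraction of the initially incorporated ions still in bone after t_days.

    Assumes two independent first-order pools and no re-uptake.
    """
    firm_fraction = 1.0 - loose_fraction
    loose = loose_fraction * fraction_remaining(t_days, loose_half_life_days)
    firm = firm_fraction * fraction_remaining(t_days, firm_half_life_days)
    return loose + firm


if __name__ == "__main__":
    for t in (0, 15, 60, 365, 5 * 365):
        print(f"day {t:5d}: {bone_retention(t):.3f} of the initial bone burden remains")
```

Under these assumptions the loosely bound pool is largely gone within a few months, after which the retention curve is governed almost entirely by the slowly remodelling fraction.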

Chelating agents (Ca-EDTA, penicillamine, BAL, etc.) can mobilize considerable quantities of some heavy metals, greatly increasing their excretion in urine.

Colloid adsorption

Colloid particles are adsorbed as a film on the mineral surface (100 m² per g) by Van der Waals forces or chemisorption. This layer of colloids on the mineral surface is then covered by the next layer of newly formed mineral, and the toxicants become buried more deeply in the bone structure. The rate of mobilization and elimination depends on remodelling processes.

Accumulation in hair and nails

The hair and nails contain keratin, with sulphydryl groups able to chelate metallic cations such as mercury and lead.

Distribution of toxicant inside the cell

Recently the distribution of toxicants, especially some heavy metals, within cells of tissues and organs has become of importance. With ultracentrifugation techniques, various fractions of the cell can be separated to determine their content of metal ions and other toxicants.

Animal studies have revealed that after penetration into the cell, some metal ions are bound to a specific protein, metallothionein. This low molecular weight protein is present in the cells of liver, kidney and other organs and tissues. Its sulphydryl groups can bind six ions per molecule. Increased presence of metal ions induces the biosynthesis of this protein. Ions of cadmium are the most potent inducer. Metallothionein serves also to maintain homeostasis of vital copper and zinc ions. Metallothionein can bind zinc, copper, cadmium, mercury, bismuth, gold, cobalt and other cations.

Biotransformation and Elimination of Toxicants

During retention in cells of various tissues and organs, toxicants are exposed to enzymes which can biotransform (metabolize) them, producing metabolites. There are many pathways for the elimination of toxicants and/or metabolites: by exhaled air via the lungs, by urine via the kidneys, by bile via the GIT, by sweat via the skin, by saliva via the mouth mucosa, by milk via the mammary glands, and by hair and nails via normal growth and cell turnover.

The elimination of an absorbed toxicant depends on the portal of entry. In the lungs the absorption/desorption process starts immediately and toxicants are partially eliminated by exhaled air. Elimination of toxicants absorbed by other paths of entry is prolonged and starts after transport by blood, eventually being completed after distribution and biotransformation. During absorption an equilibrium exists between the concentrations of a toxicant in the blood and in tissues and organs. Excretion decreases toxicant blood concentration and may induce mobilization of a toxicant from tissues into blood.

Many factors can influence the elimination rate of toxicants and their metabolites from the body:

- physico-chemical properties of toxicants, especially the Nernst partition coefficient (P), dissociation constant (pKa), polarity, molecular structure, shape and weight

- level of exposure and time of post-exposure elimination

- portal of entry

- distribution in the body compartments, which differ in exchange rate with the blood and blood perfusion

- rate of biotransformation of lipophilic toxicants to more hydrophilic metabolites

- overall health condition of organism and, especially, of excretory organs (lungs, kidneys, GIT, skin, etc.)

- presence of other toxicants which can interfere with elimination.

Here we distinguish two groups of compartments: (1) the rapid-exchange system, in which the tissue concentration of a toxicant is similar to that of the blood; and (2) the slow-exchange system, in which the tissue concentration of a toxicant is higher than in blood owing to binding and accumulation. Adipose tissue, the skeleton and the kidneys can temporarily retain some toxicants (e.g., arsenic and zinc).

A toxicant can be excreted simultaneously by two or more excretion routes. However, usually one route is dominant.

Scientists are developing mathematical models describing the excretion of a particular toxicant. These models are based on the movement from one or both compartments (exchange systems), biotransformation and so on.
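As an illustration of what such a model can look like, the following sketch follows a single dose through a rapid-exchange pool (blood and well-perfused tissues) and a slow-exchange pool (for example adipose tissue or skeleton). It is only a schematic example: the linear first-order transfers, the rate constants and the function name are assumptions made here for illustration and do not describe any particular toxicant.

```python
def simulate_two_compartment(dose, k_elim, k_to_slow, k_from_slow,
                             hours, dt=0.01):
    """Explicit Euler integration of a linear two-compartment model.

    dose         amount initially present in the rapid-exchange pool
    k_elim       first-order excretion rate from the rapid pool (1/h)
    k_to_slow    transfer rate, rapid pool -> slow pool (1/h)
    k_from_slow  transfer rate, slow pool -> rapid pool (1/h)
    Returns (rapid, slow, excreted) amounts after `hours`.
    """
    rapid, slow, excreted = float(dose), 0.0, 0.0
    for _ in range(int(round(hours / dt))):
        out = k_elim * rapid * dt          # excreted during this step
        to_slow = k_to_slow * rapid * dt   # deposited in the slow pool
        back = k_from_slow * slow * dt     # mobilized back to the blood
        rapid += back - to_slow - out
        slow += to_slow - back
        excreted += out
    return rapid, slow, excreted


if __name__ == "__main__":
    # HYPOTHETICAL rate constants, chosen only to show the qualitative behaviour.
    for t in (1, 6, 24, 48):
        rapid, slow, excreted = simulate_two_compartment(
            dose=100.0, k_elim=0.5, k_to_slow=0.2, k_from_slow=0.01, hours=t)
        print(f"{t:3d} h  rapid={rapid:7.3f}  slow={slow:7.3f}  excreted={excreted:7.3f}")
```

With a small return rate from the slow pool, most of the remaining body burden soon resides in the slow-exchange compartment, and its gradual mobilization sustains a low level in the rapid-exchange pool long after exposure has ended.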

Elimination by exhaled air via lungs

Elimination via the lungs (desorption) is typical for toxicants with high volatility (e.g., organic solvents). Gases and vapours with low solubility in blood will be quickly eliminated this way, whereas toxicants with high blood solubility will be eliminated by other routes.

Organic solvents absorbed by the GIT or skin are excreted partially by exhaled air in each passage of blood through the lungs, if they have a sufficient vapour pressure. The Breathalyser test used for suspected drunk drivers is based on this fact. The concentration of CO in exhaled air is in equilibrium with the CO-Hb blood content. The radioactive gas radon appears in exhaled air due to the decay of radium accumulated in the skeleton.

Elimination of a toxicant by exhaled air as a function of time after exposure is usually described by a three-phase curve. The first phase represents elimination of toxicant from the blood, showing a short half-life. The second, slower phase represents elimination due to exchange of blood with tissues and organs (rapid-exchange system). The third, very slow phase is due to exchange of blood with fatty tissue and skeleton. If a toxicant is not accumulated in such compartments, the curve will be two-phase. In some cases a four-phase curve is also possible.
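A multi-phase curve of this kind is commonly represented as a sum of exponential terms, one per phase. The sketch below takes that representation as an assumption and uses purely hypothetical amplitudes and half-lives; it shows only how each phase in turn comes to dominate the exhaled-air concentration as time passes.

```python
import math

# HYPOTHETICAL phases: (initial amplitude in arbitrary units, half-life in hours)
PHASES = [
    (100.0, 0.1),   # phase 1: clearance from blood
    (20.0, 2.0),    # phase 2: exchange with rapid-exchange tissues
    (2.0, 48.0),    # phase 3: exchange with fatty tissue and skeleton
]


def phase_contributions(t_hours, phases=PHASES):
    """Contribution of each exponential phase at time t_hours."""
    return [a * math.exp(-math.log(2.0) * t_hours / half_life)
            for a, half_life in phases]


def exhaled_concentration(t_hours, phases=PHASES):
    """Total exhaled-air concentration: the sum of all phases."""
    return sum(phase_contributions(t_hours, phases))


def dominant_phase(t_hours, phases=PHASES):
    """Zero-based index of the phase contributing most at t_hours."""
    contributions = phase_contributions(t_hours, phases)
    return contributions.index(max(contributions))


if __name__ == "__main__":
    for t in (0.0, 0.5, 1.0, 8.0, 24.0, 72.0):
        print(f"t={t:5.1f} h  total={exhaled_concentration(t):9.4f}  "
              f"dominant phase={dominant_phase(t) + 1}")
```

Dropping the third term from the list gives the two-phase curve expected for a toxicant that is not accumulated in slowly exchanging compartments.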

Determination of gases and vapours in exhaled air in the post-exposure period is sometimes used for evaluation of exposures in workers.

Renal excretion

The kidney is an organ specialized in the excretion of numerous water-soluble toxicants and metabolites, maintaining homeostasis of the organism. Each kidney possesses about one million nephrons able to perform excretion. Renal excretion represents a very complex event encompassing three different mechanisms:

- glomerular filtration by Bowman’s capsule

- active transport in the proximal tubule

- passive transport in the distal tubule.

Excretion of a toxicant via the kidneys to urine depends on the Nernst partition coefficient, dissociation constant and pH of urine, molecular size and shape, rate of metabolism to more hydrophilic metabolites, as well as health status of the kidneys.
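The joint role of the dissociation constant and urinary pH can be illustrated with the Henderson-Hasselbalch relationship, which gives the ionized fraction of a weak acid or weak base at a given pH. The sketch assumes a simple monoprotic compound; the pKa values in the usage example are hypothetical, and the function names are chosen here for illustration. The ionized form is generally the more water-soluble one and is less readily reabsorbed by passive diffusion in the tubule.

```python
def ionized_fraction_acid(pka, ph):
    """Ionized fraction of a monoprotic weak acid (HA <-> H+ + A-)."""
    return 1.0 / (1.0 + 10.0 ** (pka - ph))


def ionized_fraction_base(pka, ph):
    """Ionized (protonated) fraction of a monoprotic weak base.

    pka refers to the conjugate acid BH+.
    """
    return 1.0 / (1.0 + 10.0 ** (ph - pka))


if __name__ == "__main__":
    # HYPOTHETICAL compounds: a weak acid with pKa 5.0, a weak base with pKa 9.0.
    for urine_ph in (5.0, 6.0, 7.0, 8.0):
        acid = ionized_fraction_acid(5.0, urine_ph)
        base = ionized_fraction_base(9.0, urine_ph)
        print(f"urine pH {urine_ph:.1f}: acid ionized {acid:.3f}, base ionized {base:.3f}")
```

For the hypothetical weak acid, raising urinary pH from 5 to 8 shifts the compound almost entirely to its ionized form, whereas the weak base is more fully ionized in acid urine.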

The kinetics of renal excretion of a toxicant or its metabolite can be expressed by a two-, three- or four-phase excretion curve, depending on the distribution of the particular toxicant in various body compartments differing in the rate of exchange with the blood.

Saliva

Some drugs and metallic ions can be excreted through the mucosa of the mouth by saliva—for example, lead (“lead line”), mercury, arsenic, copper, as well as bromides, iodides, ethyl alcohol, alkaloids, and so on. The toxicants are then swallowed, reaching the GIT, where they can be reabsorbed or eliminated by faeces.

Sweat

Many non-electrolytes can be partially eliminated via skin by sweat: ethyl alcohol, acetone, phenols, carbon disulphide and chlorinated hydrocarbons.

Milk

Many metals, organic solvents and some organochlorine pesticides (DDT) are secreted via the mammary gland in mother’s milk. This pathway can represent a danger for nursing infants.

Hair

Analysis of hair can be used as an indicator of homeostasis of some physiological substances. Exposure to some toxicants, especially heavy metals, can also be evaluated by this kind of bioassay.

Elimination of toxicants from the body can be increased by:

- mechanical translocation via gastric lavage, blood transfusion or dialysis

- creating physiological conditions which mobilize toxicants by diet, change of hormonal balance, improving renal function by application of diuretics

- administration of complexing agents (citrates, oxalates, salicylates, phosphates), or chelating agents (Ca-EDTA, BAL, ATA, DMSA, penicillamine); this method is indicated only in persons under strict medical control. Application of chelating agents is often used for elimination of heavy metals from the body of exposed workers in the course of their medical treatment. This method is also used for evaluation of total body burden and level of past exposure.

Exposure Determinations

Determination of toxicants and metabolites in blood, exhaled air, urine, sweat, faeces and hair is increasingly used for evaluation of human exposure (exposure tests) and/or evaluation of the degree of intoxication. Biological exposure limits (Biological MAC Values; Biological Exposure Indices, BEI) have therefore been established. These bioassays show the “internal exposure” of the organism, that is, total exposure of the body in both the work and living environments by all portals of entry (see “Toxicology test methods: Biomarkers”).

Combined Effects Due to Multiple Exposure