Ergonomic Aspects of Human-Computer Interaction

Introduction

The development of effective interfaces to computer systems is the fundamental objective of research on human-computer interactions.

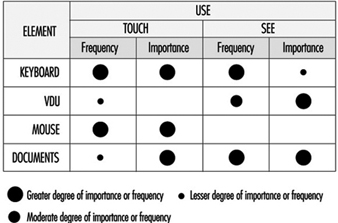

An interface can be defined as the sum of the hardware and software components through which a system is operated and users are informed of its status. The hardware components include data entry and pointing devices (e.g., keyboards, mice), information-presentation devices (e.g., screens, loudspeakers), and user manuals and documentation. The software components include menu commands, icons, windows, information feedback, navigation systems and messages. An interface’s hardware and software components may be so closely linked as to be inseparable (e.g., function keys on keyboards). The interface includes everything the user perceives, understands and manipulates while interacting with the computer (Moran 1981). It is therefore a crucial determinant of the human-machine relation.

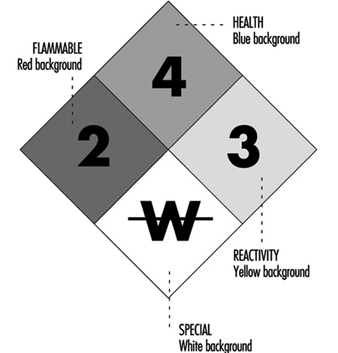

Research on interfaces aims at improving interface utility, accessibility, performance and safety, and usability. For these purposes, utility is defined with reference to the task to be performed. A useful system contains the necessary functions for the completion of tasks users are asked to perform (e.g., writing, drawing, calculations, programming). Accessibility is a measure of an interface’s ability to allow several categories of users—particularly individuals with handicaps, and those working in geographically isolated areas, in constant movement or having both hands occupied—to use the system to perform their activities. Performance, considered here from a human rather than a technical viewpoint, is a measure of the degree to which a system improves the efficiency with which users perform their work. This includes the effect of macros, menu short-cuts and intelligent software agents. The safety of a system is defined by the extent to which an interface allows users to perform their work free from the risk of human, equipment, data, or environmental accidents or losses. Finally, usability is defined as the ease with which a system is learned and used. By extension, it also includes system utility and performance, defined above.

Elements of Interface Design

Since the invention of time-sharing operating systems in 1963, and especially since the arrival of the microcomputer in 1978, the development of human-computer interfaces has been explosive (see Gaines and Shaw 1986 for a history). This development has been driven essentially by three factors acting simultaneously:

First, the very rapid evolution of computer technology, a result of advances in electrical engineering, physics and computer science, has been a major determinant of user interface development. It has resulted in the appearance of computers of ever-increasing power and speed, with high memory capacities, high-resolution graphics screens, and more natural pointing devices allowing direct manipulation (e.g., mice, trackballs). These technologies were also responsible for the emergence of microcomputing. They were the basis for the character-based interfaces of the 1960s and 1970s, graphical interfaces of the late 1970s, and multi- and hyper-media interfaces appearing since the mid-1980s based on virtual environments or using a variety of alternate-input recognition technologies (e.g., voice-, handwriting-, and movement-detection). Considerable research and development has been conducted in recent years in these areas (Waterworth and Chignel 1989; Rheingold 1991). Concomitant with these advances was the development of more advanced software tools for interface design (e.g., windowing systems, graphical object libraries, prototyping systems) that greatly reduce the time required to develop interfaces.

Second, users of computer systems play a large role in the development of effective interfaces. There are three reasons for this. First, current users are not engineers or scientists, in contrast to users of the first computers. They therefore demand systems that can be easily learned and used. Second, the age, sex, language, culture, training, experience, skill, motivation and interest of individual users vary widely. Interfaces must therefore be more flexible and better able to adapt to a range of needs and expectations. Finally, users are employed in a variety of economic sectors and perform a quite diverse spectrum of tasks. Interface developers must therefore constantly reassess the quality of their interfaces.

Lastly, intense market competition and increased safety expectations favour the development of better interfaces. These preoccupations are driven by two sets of partners: on the one hand, software producers who strive to reduce their costs while maintaining product distinctiveness that furthers their marketing goals, and on the other, users for whom the software is a means of offering competitive products and services to clients. For both groups, effective interfaces offer a number of advantages:

For software producers:

- better product image

- increased demand for products

- shorter training times

- lower after-sales service requirements

- solid base upon which to develop a product line

- reduction of the risk of errors and accidents

- reduction of documentation.

For users:

- shorter learning phase

- increased general applicability of skills

- improved use of the system

- increased autonomy using the system

- reduction of the time needed to execute a task

- reduction in the number of errors

- increased satisfaction.

Effective interfaces can significantly improve the health and productivity of users at the same time as they improve the quality and reduce the cost of their training. This, however, requires basing interface design and evaluation on ergonomic principles and practice standards, be they guidelines, corporate standards of major system manufacturers or international standards. Over the years, an impressive body of ergonomic principles and guidelines related to interface design has accumulated (Scapin 1986; Smith and Mosier 1986; Marshall, Nelson and Gardiner 1987; Brown 1988). This multidisciplinary corpus covers all aspects of character-mode and graphical interfaces, as well as interface evaluation criteria. Although its concrete application occasionally poses some problems—for example, imprecise terminology, inadequate information on usage conditions, inappropriate presentation—it remains a valuable resource for interface design and evaluation.

In addition, the major software manufacturers have developed their own guidelines and internal standards for interface design. These guidelines are available in the following documents:

- Apple Human Interface Guidelines (1987)

- Open Look (Sun 1990)

- OSF/Motif Style Guide (1990)

- IBM Common User Access guide to user interface design (1991)

- IBM Advanced Interface Design Reference (1991)

- The Windows interface: An application design guide (Microsoft 1992)

These guidelines attempt to simplify interface development by mandating a minimal level of uniformity and consistency between interfaces used on the same computer platform. They are precise, detailed, and quite comprehensive in several respects, and offer the additional advantages of being well-known, accessible and widely used. They are the de facto design standards used by developers, and are, for this reason, indispensable.

Furthermore, the standards of the International Organization for Standardization (ISO) are also very valuable sources of information about interface design and evaluation. These standards are primarily concerned with ensuring uniformity across interfaces, regardless of platforms and applications. They have been developed in collaboration with national standardization agencies, and after extensive discussion with researchers, developers and manufacturers. The main ISO interface design standard is ISO 9241, which describes ergonomic requirements for visual display units. It comprises 17 parts. For example, parts 14, 15, 16 and 17 discuss four types of human-computer dialogue: menus, command languages, direct manipulation, and forms. ISO standards should take priority over other design principles and guidelines. The following sections discuss the principles which should condition interface design.

A Design Philosophy Focused on the User

Gould and Lewis (1983) have proposed a design philosophy focused on the video display unit user. Its four principles are:

- Immediate and continuous attention to users. Direct contact with users is maintained, in order to better understand their characteristics and tasks.

- Integrated design. All aspects of usability (e.g., interface, manuals, help systems) are developed in parallel and placed under centralized control.

- Immediate and continuous evaluation by users. Users test the interfaces or prototypes early on in the design phase, under simulated work conditions. Performance and reactions are measured quantitatively and qualitatively.

- Iterative design. The system is modified on the basis of the results of the evaluation, and the evaluation cycle started again.

These principles are explained in further detail in Gould (1988). Very relevant when they were first published in 1983, they remain so fifteen years later, because the effectiveness of interfaces cannot be predicted in the absence of user testing. These principles constitute the heart of user-based development cycles proposed by several authors in recent years (Gould 1988; Mantei and Teorey 1989; Mayhew 1992; Nielsen 1992; Robert and Fiset 1992).

The rest of this article will analyse five stages in the development cycle that appear to determine the effectiveness of the final interface.

Task Analysis

Ergonomic task analysis is one of the pillars of interface design. Essentially, it is the process by which user responsibilities and activities are elucidated. This in turn allows interfaces compatible with the characteristics of users’ tasks to be designed. There are two facets to any given task:

- The nominal task, corresponding to the organization’s formal definition of the task. This includes objectives, procedures, quality control, standards and tools.

- The real task, corresponding to the users’ decisions and behaviours necessary for the execution of the nominal task.

The gap between nominal and real tasks is inevitable and results from the failure of nominal tasks to take into account variations and unforeseen circumstances in the work flow, and differences in users’ mental representations of their work. Analysis of the nominal task is insufficient for a full understanding of users’ activities.

Activity analysis examines elements such as work objectives, the type of operations performed, their temporal organization (sequential, parallel) and frequency, the operational modes relied upon, decisions, sources of difficulty, errors and recovery modes. This analysis reveals the different operations performed to accomplish the task (detection, searching, reading, comparing, evaluating, deciding, estimating, anticipating), the entities manipulated (e.g., in process control, temperature, pressure, flow-rate, volume) and the relation between operators and entities. The context in which the task is executed conditions these relations. These data are essential for the definition and organization of the future system’s features.

At its most basic, task analysis is composed of data collection, compilation and analysis. It may be performed before, during or after computerization of the task. In all cases, it provides essential guidelines for interface design and evaluation. Task analysis is always concerned with the real task, although it may also study future tasks through simulation or prototype testing. When performed prior to computerization, it studies “external tasks” (i.e., tasks external to the computer) performed with the existing work tools (Moran 1983). This type of analysis is useful even when computerization is expected to result in major modification of the task, since it elucidates the nature and logic of the task, work procedures, terminology, operators and tasks, work tools and sources of difficulty. In so doing, it provides the data necessary for task optimization and computerization.

Task analysis performed during task computerization focuses on “internal tasks”, as performed and represented by the computer system. System prototypes are used to collect data at this stage. The focus is on the same points examined in the previous stage, but from the point of view of the computerization process.

Following task computerization, task analysis also studies internal tasks, but analysis now focuses on the final computer system. This type of analysis is often performed to evaluate existing interfaces or as part of the design of new ones.

Hierarchical task analysis is a common method in cognitive ergonomics that has proven very useful in a wide variety of fields, including interface design (Shepherd 1989). It consists of the division of tasks (or main objectives) into sub-tasks, each of which can be further subdivided, until the required level of detail is attained. If data is collected directly from users (e.g., through interviews, vocalization), hierarchical division can provide a portrait of users’ mental mapping of a task. The results of the analysis can be represented by a tree diagram or table, each format having its advantages and disadvantages.
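The subdivision described above can be sketched as a small tree structure. The following Python example is only an illustration under stated assumptions: the `Task` class, the numbering scheme and the letter-writing task are hypothetical and are not taken from Shepherd (1989). It shows how one hierarchy can be rendered either as an indented tree or as a flat table.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Tuple


@dataclass
class Task:
    """One node of the hierarchy: an objective and its sub-tasks."""
    name: str
    subtasks: List["Task"] = field(default_factory=list)


def walk(task: Task, number: str = "0") -> Iterator[Tuple[str, int, str]]:
    """Yield (number, depth, name) for a task and all its sub-tasks, depth-first."""
    depth = 0 if number == "0" else number.count(".") + 1
    yield number, depth, task.name
    for i, sub in enumerate(task.subtasks, start=1):
        child_number = str(i) if number == "0" else f"{number}.{i}"
        yield from walk(sub, child_number)


def as_tree(task: Task) -> str:
    """Render the hierarchy as an indented outline (tree view)."""
    return "\n".join(f"{'  ' * depth}{number} {name}"
                     for number, depth, name in walk(task))


def as_table(task: Task) -> List[Tuple[str, str]]:
    """Render the hierarchy as flat (number, name) rows (table view)."""
    return [(number, name) for number, _, name in walk(task)]


def example_task() -> Task:
    """Hypothetical example task, invented purely for illustration."""
    return Task("Send a letter", [
        Task("Write the text", [
            Task("Open a document"),
            Task("Type the text"),
        ]),
        Task("Print the letter"),
    ])


if __name__ == "__main__":
    print(as_tree(example_task()))
```

Keeping the hierarchy in one structure and deriving both views from it means the tree diagram and the table cannot drift apart as sub-tasks are added or refined.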

User Analysis

The other pillar of interface design is the analysis of user characteristics. The characteristics of interest may relate to user age, sex, language, culture, training, technical or computer-related knowledge, skills or motivation. Variations in these individual factors are responsible for differences within and between groups of users. One of the key tenets of interface design is therefore that there is no such thing as the average user. Instead, different groups of users should be identified and their characteristics understood. Representatives of each group should be encouraged to participate in the interface design and evaluation processes.

In addition, techniques from psychology, ergonomics and cognitive engineering can be used to reveal information on user characteristics related to perception, memory, cognitive mapping, decision-making and learning (Wickens 1992). It is clear that the only way to develop interfaces that are truly compatible with users is to take into account the effect of differences in these factors on user capacities, limits and ways of operating.

Ergonomic studies of interfaces have focused almost exclusively on users’ perceptual, cognitive and motor skills, rather than on affective, social or attitudinal factors, although work in the latter fields has become more popular in recent years. (For an integrated view of humans as information-processing systems see Rasmussen 1986; for a review of user-related factors to consider when designing interfaces see Thimbleby 1990 and Mayhew 1992). The following paragraphs review the four main user-related characteristics that should be taken into account during interface design.

Mental representation

The mental models users construct of the systems they use reflect the manner in which they perceive and understand these systems. These models therefore vary as a function of users’ knowledge and experience (Hutchins 1989). In order to minimize the learning curve and facilitate system use, the conceptual model upon which a system is based should be similar to users’ mental representation of it. It should be recognized, however, that these two models are never identical. The mental model is characterized by the very fact that it is personal (Rich 1983), incomplete, variable from one part of the system to another, possibly in error on some points and in constant evolution. It plays a minor role in routine tasks but a major one in non-routine ones and during diagnosis of problems (Young 1981). In the latter cases, users will perform poorly in the absence of an adequate mental model. The challenge for interface designers is to design systems whose interaction with users will induce the latter to form mental models similar to the system’s conceptual model.

Learning

Analogy plays a large role in user learning (Rumelhart and Norman 1983). For this reason, the use of appropriate analogies or metaphors in the interface facilitates learning, by maximizing the transfer of knowledge from known situations or systems. Analogies and metaphors play a role in many parts of the interface, including the names of commands and menus, symbols, icons, codes (e.g., shape, colour) and messages. When pertinent, they greatly contribute to rendering interfaces natural and more transparent to users. On the other hand, when they are irrelevant, they can hinder users (Halasz and Moran 1982). To date, the two metaphors used in graphical interfaces are the desktop and, to a lesser extent, the room.

Users generally prefer to learn new software by using it immediately rather than by reading or taking a course; they prefer action-based learning in which they are cognitively active. This type of learning does, however, present a few problems for users (Carroll and Rosson 1988; Robert 1989). It demands an interface structure which is compatible, transparent, consistent, flexible, natural-appearing and fault tolerant, and a feature set which ensures usability, feedback, help systems, navigational aids and error handling (in this context, “errors” refer to actions that users wish to undo). Effective interfaces give users some autonomy during exploration.

Developing knowledge

User knowledge develops with increasing experience, but tends to plateau rapidly. This means that interfaces must be flexible and capable of responding simultaneously to the needs of users with different levels of knowledge. Ideally, they should also be context sensitive and provide personalized help. The EdCoach system, developed by Desmarais, Giroux and Larochelle (1993) is such an interface. Classification of users into beginner, intermediate and expert categories is inadequate for the purpose of interface design, since these definitions are too static and do not account for individual variations. Information technology capable of responding to the needs of different types of users is now available, albeit at the research, rather than commercial, level (Egan 1988). The current rage for performance-support systems suggests intense development of these systems in coming years.

Unavoidable errors

Finally, it should be recognized that users make mistakes when using systems, regardless of their skill level or the quality of the system. A recent German study by Broadbeck et al. (1993) revealed that at least 10% of the time spent by white-collar workers working on computers is related to error management. One of the causes of errors is users’ reliance on correction rather than prevention strategies (Reed 1982). Users prefer acting rapidly and incurring errors that they must subsequently correct, to working more slowly and avoiding errors. It is essential that these considerations be taken into account when designing human-computer interfaces. In addition, systems should be fault tolerant and should incorporate effective error management (Lewis and Norman 1986).

Needs Analysis

Needs analysis is an explicit part of Robert and Fiset’s development cycle (1992); it corresponds to Nielsen’s functional analysis and is integrated into other stages (task, user or needs analysis) described by other authors. It consists of the identification, analysis and organization of all the needs that the computer system can satisfy. Identification of features to be added to the system occurs during this process. Task and user analysis, presented above, should help define many of the needs, but may prove inadequate for the definition of new needs resulting from the introduction of new technologies or new regulations (e.g., safety). Needs analysis fills this void.

Needs analysis is performed in the same way as functional analysis of products. It requires the participation of a group of people interested in the product and possessing complementary training, occupations or work experience. This can include future users of the system, supervisors, domain experts and, as required, specialists in training, work organization and safety. Review of the scientific and technical literature in the relevant field of application may also be performed, in order to establish the current state of the art. Competitive systems used in similar or related fields can also be studied. The different needs identified by this analysis are then classified, weighted and presented in a format appropriate for use throughout the development cycle.
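The final step, classifying and weighting the identified needs, can be illustrated with a minimal Python sketch. Everything specific in it is an assumption made for the example: the `Need` record, the category names and the 1-to-5 weight scale are hypothetical and are not prescribed by the authors cited above.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Need:
    description: str
    category: str   # hypothetical classification, e.g. "safety" or "task"
    weight: int     # hypothetical scale: 1 (minor) to 5 (essential)


def classify_and_rank(needs: Iterable[Need]) -> Dict[str, List[Need]]:
    """Group needs by category, then order each group by descending weight."""
    grouped: Dict[str, List[Need]] = defaultdict(list)
    for need in needs:
        grouped[need.category].append(need)
    return {
        category: sorted(items, key=lambda n: n.weight, reverse=True)
        for category, items in sorted(grouped.items())
    }


def example_needs() -> List[Need]:
    """Invented needs, for illustration only."""
    return [
        Need("Undo the last action", "task", 4),
        Need("Warn before deleting records", "safety", 5),
        Need("Keyboard short-cuts for frequent commands", "task", 2),
        Need("Log changes for later audit", "safety", 3),
    ]


if __name__ == "__main__":
    for category, items in classify_and_rank(example_needs()).items():
        print(category)
        for need in items:
            print(f"  [{need.weight}] {need.description}")
```

A ranked list per category is one possible "format appropriate for use throughout the development cycle"; in practice the weights would come from the group of participants rather than from a single analyst.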

Prototyping

Prototyping is part of the development cycle of most interfaces and consists of the production of a preliminary paper or electronic model (or prototype) of the interface. Several books on the role of prototyping in human-computer interaction are available (Wilson and Rosenberg 1988; Hartson and Smith 1991; Preece et al. 1994).

Prototyping is almost indispensable because:

- Users have difficulty evaluating interfaces on the basis of functional specifications—the description of the interface is too distant from the real interface, and evaluation too abstract. Prototypes are useful because they allow users to see and use the interface and directly evaluate its usefulness and usability.

- It is practically impossible to construct an adequate interface on the first try. Interfaces must be tested by users and modified, often repeatedly. To overcome this problem, paper or interactive prototypes that can be tested, modified or rejected are produced and refined until a satisfactory version is obtained. This process is considerably less expensive than working on real interfaces.

From the point of view of the development team, prototyping has several advantages. Prototypes allow the integration and visualization of interface elements early on in the design cycle, rapid identification of detailed problems, production of a concrete and common object of discussion in the development team and during discussions with clients, and simple illustration of alternative solutions for the purposes of comparison and internal evaluation of the interface. The most important advantage is, however, the possibility of having users evaluate prototypes.

Inexpensive and very powerful software tools for the production of prototypes are commercially available for a variety of platforms, including microcomputers (e.g., Visual Basic and Visual C++ (™Microsoft Corp.), UIM/X (™Visual Edge Software), HyperCard (™Apple Computer), SVT (™SVT Soft Inc.)). Readily available and relatively easy to learn, they are becoming widespread among system developers and evaluators.

The integration of prototyping completely changed the interface development process. Given the rapidity and flexibility with which prototypes can be produced, developers now tend to reduce their initial analyses of task, users and needs, and compensate for these analytical deficiencies by adopting longer evaluation cycles. This assumes that usability testing will identify problems and that it is more economical to prolong evaluation than to spend time on preliminary analysis.

Evaluation of Interfaces

User evaluation of interfaces is an indispensable and effective way to improve interfaces’ usefulness and usability (Nielsen 1993). The interface is almost always evaluated in electronic form, although paper prototypes may also be tested. Evaluation is an iterative process and is part of the prototype evaluation-modification cycle which continues until the interface is judged acceptable. Several cycles of evaluation may be necessary. Evaluation may be performed in the workplace or in usability laboratories (see the special edition of Behaviour and Information Technology (1994) for a description of several usability laboratories).

Some interface evaluation methods do not involve users; they may be used as a complement to user evaluation (Karat 1988; Nielsen 1993; Nielsen and Mack 1994). A relatively common example of such methods consists of the use of criteria such as compatibility, consistency, visual clarity, explicit control, flexibility, mental workload, quality of feedback, quality of help and error handling systems. For a detailed definition of these criteria, see Bastien and Scapin (1993); they also form the basis of an ergonomic questionnaire on interfaces (Shneiderman 1987; Ravden and Johnson 1989).

Following evaluation, solutions must be found to problems that have been identified, modifications discussed and implemented, and decisions made concerning whether a new prototype is necessary.
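The evaluation-modification cycle can be summarized as a simple loop. The Python sketch below is illustrative only: `evaluate` and `revise` are hypothetical stand-ins for user testing and redesign, and the limit on the number of cycles is an assumption introduced so that the loop always terminates.

```python
from typing import Callable, List, Tuple, TypeVar

P = TypeVar("P")  # whatever represents a prototype in a given project


def iterate_design(
    prototype: P,
    evaluate: Callable[[P], List[str]],
    revise: Callable[[P, List[str]], P],
    max_cycles: int = 5,
) -> Tuple[P, List[List[str]]]:
    """Evaluate and revise a prototype until no problems are reported.

    Returns the last prototype and the problems found in each cycle.
    """
    history: List[List[str]] = []
    for _ in range(max_cycles):
        problems = evaluate(prototype)   # e.g. results of a usability test
        history.append(problems)
        if not problems:                 # judged acceptable: stop iterating
            break
        prototype = revise(prototype, problems)
    return prototype, history


# Toy stand-ins, invented for illustration: a prototype is a set of features,
# and a "problem" is a required feature that is still missing.
REQUIRED = ["undo", "help"]


def toy_evaluate(features: frozenset) -> List[str]:
    return [f for f in REQUIRED if f not in features]


def toy_revise(features: frozenset, problems: List[str]) -> frozenset:
    return features | {problems[0]}      # fix one problem per cycle


if __name__ == "__main__":
    final, history = iterate_design(frozenset(), toy_evaluate, toy_revise)
    print(sorted(final), history)
```

The per-cycle history is kept because the decision on whether a new prototype is necessary usually rests on how the list of problems changes from one cycle to the next.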

Conclusion

This discussion of interface development has highlighted the major stakes and broad trends in the field of human-computer interaction. In summary, (a) task, user, and needs analysis play an essential role in understanding system requirements and, by extension, necessary interface features; and (b) prototyping and user evaluation are indispensable for the determination of interface usability. An impressive body of knowledge, composed of principles, guidelines and design standards, exists on human-computer interactions. Nevertheless, it is currently impossible to produce an adequate interface on the first try. This constitutes a major challenge for the coming years. More explicit, direct and formal links must be established between analysis (task, users, needs, context) and interface design. Means must also be developed to apply current ergonomic knowledge more directly and more simply to the design of interfaces.

Psychosocial Aspects of VDU Work

Introduction

Computers provide efficiency, competitive advantages and the ability to carry out work processes that would not be possible without their use. Areas such as manufacturing process control, inventory management, records management, complex systems control and office automation have all benefited from automation. Computerization requires substantial infrastructure support in order to function properly. In addition to architectural and electrical changes needed to accommodate the machines themselves, the introduction of computerization requires changes in employee knowledge and skills, and application of new methods of managing work. The demands placed on jobs which use computers can be very different from those of traditional jobs. Often computerized jobs are more sedentary and may require more thinking and mental attention to tasks, while at the same time requiring less physical energy expenditure. Production demands can be high, with constant work pressure and little room for decision-making.

The economic advantages of computers at work have overshadowed associated potential health, safety and social problems for workers, such as job loss, cumulative trauma disorders and increased mental stress. The transition from more traditional forms of work to computerization has been difficult in many workplaces, and has resulted in significant psychosocial and sociotechnical problems for the workforce.

Psychosocial Problems Specific to VDUs

Research studies (for example, Bradley 1983 and 1989; Bikson 1987; Westlander 1989; Westlander and Aberg 1992; Johansson and Aronsson 1984; Stellman et al. 1987b; Smith et al. 1981 and 1992a) have documented how the introduction of computers into the workplace has brought substantial changes in the process of work, in social relationships, in management style and in the nature and content of job tasks. In the 1980s, the implementation of the technological changeover to computerization was most often a “top-down” process in which employees had no input into the decisions regarding the new technology or the new work structures. As a result, many industrial relations, physical and mental health problems arose.

Experts disagree on the success of changes that are occurring in offices, with some arguing that computer technology improves the quality of work and enhances productivity (Strassmann 1985), while others compare computers to earlier forms of technology, such as assembly-line production, which also made working conditions worse and increased job stress (Moshowitz 1986; Zuboff 1988). We believe that visual display unit (VDU) technology does affect work in various ways, but technology is only one element of a larger work system that includes the individual, tasks, environment and organizational factors.

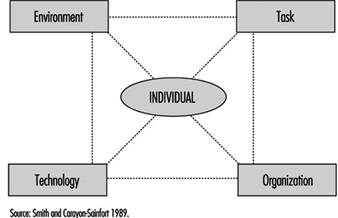

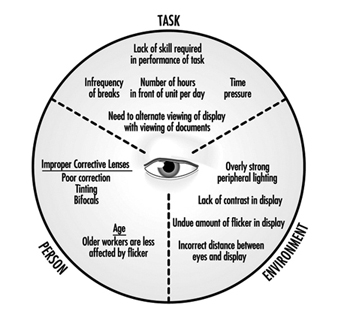

Conceptualizing Computerized Job Design

Many working conditions jointly influence the VDU user. The authors have proposed a comprehensive job design model which illustrates the various facets of working conditions which can interact and accumulate to produce stress (Smith and Carayon-Sainfort 1989). Figure 1 illustrates this conceptual model for the various elements of a work system that can exert loads on workers and may result in stress. At the centre of this model is the individual with his/her unique physical characteristics, perceptions, personality and behaviour. The individual uses technologies to perform specific job tasks. The nature of the technologies, to a large extent, determines performance and the skills and knowledge needed by the worker to use the technology effectively. The requirements of the task also affect the levels of skill and knowledge needed. Both the tasks and technologies affect the job content and the mental and physical demands. The model also shows that the tasks and technologies are placed within the context of a work setting that comprises the physical and the social environment. The overall environment itself can affect comfort, psychological moods and attitudes. Finally, the organizational structure of work defines the nature and level of individual involvement, worker interactions, and levels of control. Supervision and standards of performance are all affected by the nature of the organization.

Figure 1. Model of working conditions and their impact on the individual

This model helps to explain relationships between job requirements, psychological and physical loads and resulting health strains. It represents a systems concept in which any one element can influence any other element, and in which all elements interact to determine the way in which work is accomplished and the effectiveness of the work in achieving individual and organizational needs and goals. The application of the model to the VDU workplace is described below.

Environment

Physical environmental factors have been implicated as job stressors in the office and elsewhere. General air quality and housekeeping contribute, for example, to sick building syndrome and other stress responses (Stellman et al. 1985; Hedge, Erickson and Rubin 1992). Noise is a well-known environmental stressor which can cause increases in arousal, blood pressure, and negative psychological mood (Cohen and Weinstein 1981). Other examples are environmental conditions that produce sensory disruption and make it more difficult to carry out tasks, thereby increasing the level of worker stress and emotional irritation (Smith et al. 1981; Sauter et al. 1983b).

Task

With the introduction of computer technology, expectations regarding performance increase. Additional pressure on workers is created because they are expected to perform at a higher level all the time. Excessive workload and work pressure are significant stressors for computer users (Smith et al. 1981; Piotrkowski, Cohen and Coray 1992; Sainfort 1990). New types of work demands are appearing with the increasing use of computers. For instance, cognitive demands are likely to be sources of increased stress for VDU users (Frese 1987). These are all facets of job demands.

Electronic Monitoring of Employee Performance

The use of electronic methods to monitor employee work performance has increased substantially with the widespread use of personal computers which make such monitoring quick and easy. Monitoring provides information which can be used by employers to better manage technological and human resources. With electronic monitoring it is possible to pinpoint bottlenecks, production delays and below average (or below standard) performance of employees in real time. New electronic communication technologies have the capability of tracking the performance of individual elements of a communication system and of pinpointing individual worker inputs. Such work elements as data entry into computer terminals, telephone conversations, and electronic mail messages can all be examined through the use of electronic surveillance.

Electronic monitoring increases management control over the workforce, and may lead to organisational management approaches that are stressful. This raises important issues about the accuracy of the monitoring system and how well it represents worker contributions to the employer’s success, the invasion of worker privacy, worker versus technology control over job tasks, and the implications of management styles that use monitored information to direct worker behaviour on the job (Smith and Amick 1989; Amick and Smith 1992; Carayon 1993b). Monitoring can bring about increased production, but it may also produce job stress, absences from work, turnover in the workforce and sabotage. When electronic monitoring is combined with incentive systems for increased production, work-related stress can also be increased (OTA 1987; Smith et al. 1992a). In addition, such electronic performance monitoring raises issues of worker privacy (ILO 1991) and several countries have banned the use of individual performance monitoring.

A basic requirement of electronic monitoring is that work tasks be broken up into activities that can easily be quantified and measured. This usually results in a job design approach that reduces the content of the tasks by removing complexity and thinking, which are replaced by repetitive action. The underlying philosophy is similar to a basic principle of “Scientific Management” (Taylor 1911) that calls for work “simplification.”

In one company, for example, a telephone monitoring capability was included with a new telephone system for customer service operators. The monitoring system distributed incoming telephone calls from customers, timed the calls and allowed for supervisor eavesdropping on employee telephone conversations. This system was instituted under the guise of a work flow scheduling tool for identifying the peak periods for telephone calls, and thus when extra operators would be needed. Instead of using the monitoring system solely for that purpose, management also used the data to establish work performance standards (seconds per transaction) and to bring disciplinary action against employees with “below average performance.” This electronic monitoring system introduced a pressure to perform above average because of fear of reprimand. Research has shown that such work pressure is not conducive to good performance but rather can bring about adverse health consequences (Cooper and Marshall 1976; Smith 1987). In fact, the monitoring system described was found to have increased employee stress and lowered the quality of production (Smith et al. 1992a).

Electronic monitoring can influence worker self-image and feelings of self-worth. In some cases, monitoring could enhance feelings of self-worth if the worker gets positive feedback. The fact that management has taken an interest in the worker as a valuable resource is another possible positive outcome. However, both effects may be perceived differently by workers, particularly if poor performance leads to punishment or reprimand. Fear of negative evaluation can produce anxiety and may damage self-esteem and self-image. Indeed, electronic monitoring can create known adverse working conditions, such as paced work, lack of worker involvement, reduced task variety and task clarity, reduced peer social support, reduced supervisory support, fear of job loss, routine work activities and lack of control over tasks (Amick and Smith 1992; Carayon 1993).

Michael J. Smith

Positive aspects also exist since computers are able to do many of the simple, repetitive tasks that were previously done manually, which can reduce the repetitiveness of the job, increase the content of the job and make it more meaningful. This is not universally true, however, since many new computer jobs, such as data entry, are still repetitive and boring. Computers can also provide performance feedback that is not available with other technologies (Kalimo and Leppanen 1985), which can reduce ambiguity.

Some aspects of computerized work have been linked to decreased control, which has been identified as a major source of stress for clerical computer users. Uncertainty regarding the duration of computer-related problems, such as breakdown and slowdown, can be a source of stress (Johansson and Aronsson 1984; Carayon-Sainfort 1992). Computer-related problems can be particularly stressful if workers, such as airline reservation clerks, are highly dependent on the technology to perform their job.

Technology

The technology being used by the worker often defines his or her ability to accomplish tasks and the extent of physiological and psychological load. If the technology produces either too much or too little workload, increased stress and adverse physical health outcomes can occur (Smith et al. 1981; Johansson and Aronsson 1984; Ostberg and Nilsson 1985). Technology is changing at a rapid pace, forcing workers to adjust their skills and knowledge continuously to keep up. In addition, today’s skills can quickly become obsolete. Technological obsolescence may be due to job de-skilling and impoverished job content or to inadequate skills and training. Workers who do not have the time or resources to keep up with the technology may feel threatened by the technology and may worry about losing their job. Thus, workers’ fears of having inadequate skills to use the new technology are one of the main adverse influences of technology, which training, of course, can help to offset. Another effect of the introduction of technology is the fear of job loss due to increased efficiency of technology (Ostberg and Nilsson 1985; Smith, Carayon and Miezio 1987).

Intensive, repetitive, long sessions at the VDU can also contribute to increased ergonomic stress and strain (Stammerjohn, Smith and Cohen 1981; Sauter et al. 1983b; Smith et al. 1992b) and can create visual or musculoskeletal discomfort and disorders, as described elsewhere in the chapter.

Organizational factors

The organizational context of work can influence worker stress and health. When technology requires new skills, the way in which workers are introduced to the new technology and the organizational support they receive, such as appropriate training and time to acclimatize, has been related to the levels of stress and emotional disturbances experienced (Smith, Carayon and Miezio 1987). The opportunity for growth and promotion in a job (career development) is also related to stress (Smith et al. 1981). Job future uncertainty is a major source of stress for computer users (Sauter et al. 1983b; Carayon 1993a) and the possibility of job loss also creates stress (Smith et al. 1981; Kasl 1978).

Work scheduling, such as shift work and overtime, has been shown to have negative mental and physical health consequences (Monk and Tepas 1985; Breslow and Buell 1960). Shift work is increasingly used by companies that want or need to keep computers running continuously. Overtime is often needed to ensure that workers keep up with the workload, especially when work remains incomplete as a result of delays due to computer breakdown or malfunction.

Computers provide management with the capability to continuously monitor employee performance electronically, which has the potential to create stressful working conditions, such as by increasing work pressure (see the box “Electronic Monitoring”). Negative employee-supervisor relationships and feelings of lack of control can increase in electronically supervised workplaces.

The introduction of VDU technology has affected social relationships at work. Social isolation has been identified as a major source of stress for computer users (Lindström 1991; Yang and Carayon 1993), since the increased time spent working on computers reduces the time that workers have to socialize and receive or give social support. The need for supportive supervisors and co-workers has been well documented (House 1981). Social support can moderate the impact of other stressors on worker stress. Thus, support from colleagues, supervisors or computer staff becomes important for the worker who is experiencing computer-related problems, but the computer work environment may, ironically, reduce the level of such social support available.

The individual

A number of personal factors such as personality, physical health status, skills and abilities, physical conditioning, prior experiences and learning, motives, goals and needs determine the physical and psychological effects just described (Levi 1972).

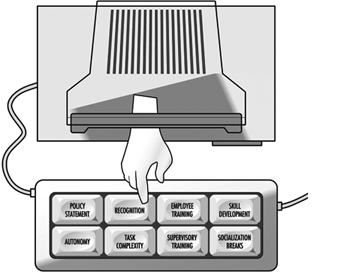

Improving the Psychosocial Characteristics of VDU Work

The first step in making VDU work less stressful is to identify work organization and job design features that can promote psychosocial problems so that they can be modified. It should always be borne in mind that VDU problems which can lead to job stress are seldom the result of single aspects of the organization or of job design, but rather are a combination of many aspects of improper work design. Thus, solutions for reducing or eliminating job stress must be comprehensive and deal with many improper work design factors simultaneously. Solutions that focus on only one or two factors will not succeed. (See figure 2.)

Figure 2. Keys to reducing isolation and stress

Improvements in job design should start with the work organization providing a supportive environment for employees. Such an environment enhances employee motivation to work and feelings of security, and it reduces feelings of stress (House 1981). A policy statement that defines the importance of employees within an organization and is explicit on how the organization will provide a supportive environment is a good first step. One very effective means for providing support to employees is to provide supervisors and managers with specific training in methods for being supportive. Supportive supervisors can serve as buffers that “protect” employees from unnecessary organizational or technological stresses.

The content of job tasks has long been recognized as important for employee motivation and productivity (Herzberg 1974; Hackman and Oldham 1976). More recently the relationship between job content and job stress reactions has been elucidated (Cooper and Marshall 1976; Smith 1987). Three main aspects of job content that are of specific relevance to VDU work are task complexity, employee skills and career opportunities. In some respects, these are all related to the concept of developing the motivational climate for employee job satisfaction and psychological growth, which deals with the improvement of employees’ intellectual capabilities and skills, increased ego enhancement or self-image and increased social group recognition of individual achievement.

The primary means for enhancing job content is to increase the skill level for performing job tasks, which typically means enlarging the scope of job tasks, as well as enriching the elements of each specific task (Herzberg 1974). Enlarging the number of tasks increases the repertoire of skills needed for successful task performance, and also increases the number of employee decisions made while defining task sequences and activities. An increase in the skill level of the job content promotes employee self-image of personal worth and of value to the organization. It also enhances the positive image of the individual in his or her social work group within the organization.

Increasing the complexity of the tasks, which means increasing the amount of thinking and decision-making involved, is a logical next step that can be achieved by combining simple tasks into sets of related activities that have to be coordinated, or by adding mental tasks that require additional knowledge and computational skills. Specifically, when computerized technology is introduced, new tasks in general will have requirements that exceed the current knowledge and skills of the employees who are to perform them. Thus there is a need to train employees in the new aspects of the tasks so that they will have the skills to perform the tasks adequately. Such training has more than one benefit, since it not only may improve employee knowledge and skills, and thus enhance performance, but may also enhance employee self-esteem and confidence. Providing training also shows the employee that the employer is willing to invest in his or her skill enhancement, and thus promotes confidence in employment stability and job future.

The amount of control that an employee has over the job has a powerful psychosocial influence (Karasek et al. 1981; Sauter, Cooper and Hurrell 1989). Important aspects of control can be defined by the answers to the questions, “What, how and when?” The nature of the tasks to be undertaken, the need for coordination among employees, the methods to be used to carry out the tasks and the scheduling of the tasks can all be defined by answers to these questions. Control can be designed into jobs at the levels of the task, the work unit and the organization (Sainfort 1991; Gardell 1971). At the task level, the employee can be given autonomy in the methods and procedures used in completing the task.

At the work-unit level, groups of employees can self-manage several interrelated tasks and the group itself can decide on who will perform particular tasks, the scheduling of tasks, coordination of tasks and production standards to meet organizational goals. At the organization level, employees can participate in structured activities that provide input to management about employee opinions or quality improvement suggestions. When the levels of control available are limited, it is better to introduce autonomy at the task level and then work up the organizational structure, insofar as possible (Gardell 1971).

One natural result of computer automation appears to be an increased workload, since the purpose of the automation is to enhance the quantity and quality of work output. Many organizations believe that such an increase is necessary in order to pay for the investment in the automation. However, establishing the appropriate workload is problematic. Scientific methods have been developed by industrial engineers for determining appropriate work methods and workloads (the performance requirements of jobs). Such methods have been used successfully in manufacturing industries for decades, but have had little application in office settings, even after office computerization. The use of scientific means, such as those described by Kanawaty (1979) and Salvendy (1992), to establish workloads for VDU operators should be a high priority for every organization, since such methods set reasonable production standards or work output requirements, help to protect employees from excessive workloads and help to ensure the quality of products.

The demand that is associated with the high levels of concentration required for computerized tasks can diminish the amount of social interaction during work, leading to social isolation of employees. To counter this effect, opportunities for socialization for employees not engaged in computerized tasks, and for employees who are on rest breaks, should be provided. Non-computerized tasks which do not require extensive concentration could be organized in such a way that employees can work in close proximity to one another and thus have the opportunity to talk among themselves. Such socialization provides social support, which is known to be an essential modifying factor in reducing adverse mental health effects and physical disorders such as cardiovascular diseases (House 1981). Socialization naturally also reduces social isolation and thus promotes improved mental health.

Since poor ergonomic conditions can also lead to psychosocial problems for VDU users, proper ergonomic conditions are an essential element of complete job design. This is covered in some detail in other articles in this chapter and elsewhere in the Encyclopaedia.

Finding Balance

Since there are no “perfect” jobs or “perfect” workplaces free from all psychosocial and ergonomic stressors, we must often compromise when making improvements at the workplace. Redesigning processes generally involves “trade-offs” between excellent working conditions and the need to have acceptable productivity. This requires us to think about how to achieve the best “balance” between positive benefits for employee health and productivity. Unfortunately, since so many factors can produce adverse psychosocial conditions that lead to stress, and since these factors are interrelated, modifications in one factor may not be beneficial if concomitant changes are not made in other related factors. In general, two aspects of balance should be addressed: the balance of the total system and compensatory balance.

System balance is based on the idea that a workplace or process or job is more than the sum of the individual components of the system. The interplay among the various components produces results that are greater (or less) than the sum of the individual parts and determines the potential for the system to produce positive results. Thus, job improvements must take account of and accommodate the entire work system. If an organization concentrates solely on the technological component of the system, there will be an imbalance because personal and psychosocial factors will have been neglected. The model given in figure 1 of the work system can be used to identify and understand the relationships between job demands, job design factors, and stress which must be balanced.

Since it is seldom possible to eliminate all psychosocial factors that cause stress, either because of financial considerations, or because it is impossible to change inherent aspects of job tasks, compensatory balance techniques are employed. Compensatory balance seeks to reduce psychological stress by changing aspects of work that can be altered in a positive direction to compensate for those aspects that cannot be changed. Five elements of the work system—physical loads, work cycles, job content, control, and socialization—function in concert to provide the resources for achieving individual and organizational goals through compensatory balance. While we have described some of the potential negative attributes of these elements in terms of job stress, each also has positive aspects that can counteract the negative influences. For instance, inadequate skill to use new technology can be offset by employee training. Low job content that creates repetition and boredom can be balanced by an organizational supervisory structure that promotes employee involvement and control over tasks, and job enlargement that introduces task variety. The social conditions of VDU work could be improved by balancing the loads that are potentially stressful and by considering all of the work elements and their potential for promoting or reducing stress. The organizational structure itself could be adapted to accommodate enriched jobs in order to provide support to the individual. Increased staffing levels, increasing the levels of shared responsibilities or increasing the financial resources put toward worker well-being are other possible solutions.

Skin Problems

The first reports of skin complaints among people working with or near VDUs came from Norway as early as 1981. A few cases have also been reported from the United Kingdom, the United States and Japan. Sweden, however, has provided many case reports, and public discussion on the health effects of exposure to VDUs intensified when one case of skin disease in a VDU worker was accepted as an occupational disease by the Swedish National Insurance Board in late 1985. The acceptance of this case for compensation coincided with a marked increase in the number of cases of skin disease that were suspected to be related to work with VDUs. At the Department of Occupational Dermatology at Karolinska Hospital, Stockholm, the caseload increased from seven cases referred between 1979 and 1985, to 100 new referrals from November 1985 to May 1986.

Despite the relatively large number of people who sought medical treatment for what they believed to be VDU-related skin problems, no conclusive evidence is available which shows that the VDUs themselves lead to the development of occupational skin disease. The occurrence of skin disease in VDU-exposed people appears to be coincidental or possibly related to other workplace factors. Evidence for this conclusion is strengthened by the observation that the increased incidence of skin complaints made by Swedish VDU workers has not been observed in other countries, where the mass media debate on the issue has not been as intense. Further, scientific data collected from provocation studies, in which patients have been purposely exposed to VDU-related electromagnetic fields to determine whether a skin effect could be induced, have not produced any meaningful data demonstrating a possible mechanism for development of skin problems which could be related to the fields surrounding a VDU.

Case Studies: Skin Problems and VDUs

Sweden: 450 patients were referred and examined for skin problems which they attributed to work at VDUs. Only common facial dermatoses were found and no patients had specific dermatoses that could be related to work with VDUs. While most patients felt that they had pronounced symptoms, their visible skin lesions were, in fact, mild according to standard medical definitions and most of the patients reported improvement without drug therapy even though they continued to work with VDUs. Many of the patients were suffering from identifiable contact allergies, which explained their skin symptoms. Epidemiological studies comparing the VDU-work patients to a non-exposed control population with a similar skin status showed no relationship between skin status and VDU work. Finally, a provocation study did not yield any relation between the patient symptoms and electrostatic or magnetic fields from the VDUs (Wahlberg and Lidén 1988; Berg 1988; Lidén 1990; Berg, Hedblad and Erhardt 1990; Swanbeck and Bleeker 1989).

In contrast to a few early nonconclusive epidemiological studies (Murray et al. 1981; Frank 1983; Lidén and Wahlberg 1985), a large-scale epidemiological study (Berg, Lidén, and Axelson 1990; Berg 1989) of 3,745 randomly selected office employees, of whom 809 persons were medically examined, showed that while the VDU-exposed employees reported significantly more skin problems than a nonexposed control population of office employees, upon examination they were not actually found to have more visible signs or more skin disease.

Wales (UK): A questionnaire study found no difference between reports of skin problems in VDU workers and a control population (Carmichael and Roberts 1992).

Singapore: A control population of teachers reported significantly more skin complaints than did the VDU users (Koh et al. 1991).

It is, however, possible that work-related stress could be an important factor that can explain VDU-associated skin complaints. For example, follow-up studies in the office environment of a subgroup of the VDU-exposed office employees being studied for skin problems showed that significantly more people in the group with skin symptoms experienced extreme occupational stress than people without the skin symptoms. A correlation between levels of the stress-sensitive hormones testosterone, prolactin and thyroxin and skin symptoms was observed during work, but not during days off. Thus, one possible explanation for VDU-associated facial skin sensations could be the effects of thyroxin, which causes the blood vessels to dilate (Berg et al. 1992).

Musculoskeletal Disorders

Introduction

VDU operators commonly report musculoskeletal problems in the neck, shoulders and upper limbs. These problems are not unique to VDU operators and are also reported by other workers performing tasks which are repetitive or which involve holding the body in a fixed posture (static load). Tasks which involve force are also commonly associated with musculoskeletal problems, but such tasks are not generally an important health and safety consideration for VDU operators.

Among clerical workers, whose jobs are generally sedentary and not commonly associated with physical stress, the introduction into workplaces of VDUs caused work-related musculoskeletal problems to gain in recognition and prominence. Indeed, an epidemic-like increase in reporting of problems in Australia in the mid 1980s and, to a lesser extent, in the United States and the United Kingdom in the early 1990s, has led to a debate about whether or not the symptoms have a physiological basis and whether or not they are work-related.

Those who dispute that musculoskeletal problems associated with VDU (and other) work have a physiological basis generally put forward one of four alternative views: workers are malingering; workers are unconsciously motivated by various possible secondary gains, such as workers’ compensation payments or the psychological benefits of being sick, known as compensation neurosis; workers are converting unresolved psychological conflict or emotional disturbance into physical symptoms, that is, conversion disorders; and finally, that normal fatigue is being blown out of proportion by a social process which labels such fatigue as a problem, termed social iatrogenesis. Rigorous examination of the evidence for these alternative explanations shows that they are not as well supported as explanations which posit a physiological basis for these disorders (Bammer and Martin 1988). Despite the growing evidence that there is a physiological basis for musculoskeletal complaints, the exact nature of the complaints is not well understood (Quintner and Elvey 1990; Cohen et al. 1992; Fry 1992; Helme, LeVasseur and Gibson 1992).

Symptom Prevalence

A large number of studies have documented the prevalence of musculoskeletal problems among VDU operators and these have been predominantly conducted in western industrialized countries. There is also growing interest in these problems in the rapidly industrializing nations of Asia and Latin America. There is considerable inter-country variation in how musculoskeletal disorders are described and in the types of studies carried out. Most studies have relied on symptoms reported by workers, rather than on the results of medical examinations. The studies can be usefully divided into three groups: those which have examined what can be called composite problems, those which have looked at specific disorders and those which have concentrated on problems in a single area or small group of areas.

Composite problems

Composite problems are a mixture of problems, which can include pain, loss of strength and sensory disturbance, in various parts of the upper body. They are treated as a single entity, which in Australia and the United Kingdom is referred to as repetitive strain injuries (RSI), in the United States as cumulative trauma disorders (CTD) and in Japan as occupational cervicobrachial disorders (OCD). A 1990 review (Bammer 1990) of problems among office workers (75% of the studies were of office workers who used VDUs) found that 70 studies had examined composite problems and 25 had found them to occur in a frequency range of between 10 and 29% of the workers studied. At the extremes, three studies had found no problems, while three found that 80% of workers suffer from musculoskeletal complaints. Half of the studies also reported on severe or frequent problems, with 19 finding a prevalence between 10 and 19%. One study found no problems and one found problems in 59%. The highest prevalences were found in Australia and Japan.

Specific disorders

Specific disorders cover relatively well-defined problems such as epicondylitis and carpal tunnel syndrome. Specific disorders have been less frequently studied and found to occur less frequently. Of 43 studies, 20 found them to occur in between 0.2 and 4% of workers. Five studies found no evidence of specific disorders and one found them in between 40 and 49% of workers.

Particular body parts

Other studies focus on particular areas of the body, such as the neck or the wrists. Neck problems are the most common and have been examined in 72 studies, with 15 finding them to occur in between 40 and 49% of workers. Three studies found them to occur in between 5 and 9% of workers and one found them in more than 80% of workers. Just under half the studies examined severe problems and they were commonly found in frequencies that ranged between 5% and 39%. Such high levels of neck problems have been found internationally, including Australia, Finland, France, Germany, Japan, Norway, Singapore, Sweden, Switzerland, the United Kingdom and the United States. In contrast, only 18 studies examined wrist problems, and seven found them to occur in between 10% and 19% of workers. One found them to occur in between 0.5 and 4% of workers and one in between 40% and 49%.

Causes

It is generally agreed that the introduction of VDUs is often associated with increased repetitive movements and increased static load through increased keystroke rates and (compared with typewriting) reduction in non-keying tasks such as changing paper, waiting for the carriage return and use of correction tape or fluid. The need to watch a screen can also lead to increased static load, and poor placement of the screen, keyboard or function keys can lead to postures which may contribute to problems. There is also evidence that the introduction of VDUs can be associated with reductions in staff numbers and increased workloads. It can also lead to changes in the psychosocial aspects of work, including social and power relationships, workers’ responsibilities, career prospects and mental workload. In some workplaces such changes have been in directions which are beneficial to workers.

In other workplaces they have led to reduced worker control over the job, lack of social support on the job, “de-skilling”, lack of career opportunities, role ambiguity, mental stress and electronic monitoring (see review by Bammer 1987b and also WHO 1989 for a report on a World Health Organization meeting). The association between some of these psychosocial changes and musculoskeletal problems is outlined below. It also seems that the introduction of VDUs helped stimulate a social movement in Australia which led to the recognition and prominence of these problems (Bammer and Martin 1992).

Causes can therefore be examined at individual, workplace and social levels. At the individual level, the possible causes of these disorders can be divided into three categories: factors not related to work, biomechanical factors and work organization factors (see table 1). Various approaches have been used to study causes but the overall results are similar to those obtained in empirical field studies which have used multivariate analyses (Bammer 1990). The results of these studies are summarized in table 1 and table 2. More recent studies also support these general findings.

Table 1. Summary of empirical fieldwork studies which have used multivariate analyses to study the causes of musculoskeletal problems among office workers

| Study | No./% VDU | Non-work factors | Biomechanical factors | Work organization factors |
|---|---|---|---|---|
| Blignault (1985) | 146/90% | ο | ο | ● |
| South Australian Health Commission Epidemiology Branch (1984) | 456/81% | ● | ● | ● |
| Ryan, Mullerworth and Pimble (1984) | 52/100% | ● | ● | ● |
| Ryan and | 143 | | | |
| Ellinger et al. (1982) | 280 | ● | ● | ● |
| Pot, Padmos and | 222/100% | not studied | ● | ● |
| Sauter et al. (1983b) | 251/74% | ο | ● | ● |
| Stellman et al. (1987a) | 1,032/42% | not studied | ● | ● |

ο = non-factor; ● = factor.

Source: Adapted from Bammer 1990.

Table 2. Summary of studies showing involvement of factors thought to cause musculoskeletal problems among office workers

|

Non-work |

Biomechanical |

Work organization |

|||||||||||||

|

Country |

No./% VDU |

Age |

Biol. |

Neuroticism |

Joint |

Furn. |

Furn. |

Visual |

Visual |

Years |

Pressure |

Autonomy |

Peer |

Variety |

Key- |

|

Australia |

146/ |

Ø |

Ø |

Ø |

Ø |

Ο |

● |

● |

● |

Ø |

|||||

|

Australia |

456/ |

● |

Ο |

❚ |

Ø |

Ο |

● |

Ο |

|||||||

|

Australia |

52/143/ |

▲ |

❚ |

❚ |

Ο |

Ο |

● |

Ο |

|||||||

|

Germany |

280 |

Ο |

Ο |

❚ |

Ø |

❚ |

Ο |

Ο |

● |

● |

Ο |

||||

|

Netherlands |

222/ |

❚ |

❚ |

Ø |

Ø |

Ο |

● |

(Ø) |

Ο |

||||||

|

United States |

251/ |

Ø |

Ø |

❚ |

❚ |

Ο |

● |

(Ø) |

●

|

||||||

|

United States |

1,032/ |

Ø |

❚ |

❚ |

Ο |

● |

● |

||||||||

Ο = positive association, statistically significant. ● = negative association, statistically significant. ❚ = statistically significant association. Ø = no statistically significant association. (Ø) = no variability in the factor in this study. ▲ = the youngest and the oldest had more symptoms.

Empty box implies that the factor was not included in this study.

1 Matches references in table 52.7.

Source: adapted from Bammer 1990.

Factors not related to work

There is very little evidence that factors not related to work are important causes of these disorders, although there is some evidence that people with a previous injury to the relevant area or with problems in another part of the body may be more likely to develop problems. There is no clear evidence for involvement of age and the one study which examined neuroticism found that it was not related.

Biomechanical factors

There is some evidence that working with certain joints of the body at extreme angles is associated with musculoskeletal problems. The effects of other biomechanical factors are less clear-cut, with some studies finding them to be important and others not. These factors are: assessment of the adequacy of the furniture and/or equipment by the investigators; assessment of the adequacy of the furniture and/or equipment by the workers; visual factors in the workplace, such as glare; personal visual factors, such as the use of spectacles; and years on the job or as an office worker (table 2).

Organizational factors

A number of factors related to work organization are clearly associated with musculoskeletal problems and are discussed more fully elsewhere in this chapter. These factors include high work pressure, low autonomy (i.e., low levels of control over work), low peer cohesion (i.e., low levels of support from other workers, which may mean that other workers cannot or do not help out in times of pressure) and low task variety.

The only factor studied for which results were mixed was hours spent using a keyboard (table 2). Overall, the causes of musculoskeletal problems at the individual level are multifactorial. Work-related factors, particularly work organization but also biomechanical factors, have a clear role. The specific factors of importance may vary from workplace to workplace and person to person, depending on individual circumstances. For example, the large-scale introduction of wrist rests into a workplace where high pressure and low task variety are hallmarks is unlikely to be a successful strategy. Conversely, a worker with satisfactory delineation and variety of tasks may still develop problems if the VDU screen is placed at an awkward angle.

The Australian experience, where there was a decline in prevalence of reporting of musculoskeletal problems in the late 1980s, is instructive in indicating how the causes of these problems can be dealt with. Although this has not been documented or researched in detail, it is likely that a number of factors were associated with the decline in prevalence. One is the widespread introduction into workplaces of “ergonomically” designed furniture and equipment. There were also improved work practices including multiskilling and restructuring to reduce pressure and increase autonomy and variety. These often occurred in conjunction with the implementation of equal employment opportunity and industrial democracy strategies. There was also widespread implementation of prevention and early intervention strategies. Less positively, some workplaces seem to have increased their reliance on casual contract workers for repetitive keyboard work. This means that any problems would not be linked to the employer, but would be solely the worker’s responsibility.

In addition, the intensity of the controversy surrounding these problems led to their stigmatization, so that many workers have become more reluctant to report and claim compensation when they develop symptoms. This was further exacerbated when workers lost cases brought against employers in well-publicized legal proceedings. A decrease in research funding, cessation in publication of incidence and prevalence statistics and of research papers about these disorders, as well as greatly reduced media attention to the problem all helped shape a perception that the problem had gone away.

Conclusion

Work-related musculoskeletal disorders are a significant problem throughout the world and impose enormous costs at the individual and social levels. There are no internationally accepted criteria for these disorders, and an international system of classification is needed. Prevention and early intervention need to be emphasized, and both need to be multifactorial. Ergonomics should be taught at all levels from elementary school to university, and there need to be guidelines and laws based on minimum requirements. Implementation requires commitment from employers and active participation from employees (Hagberg et al. 1993).

Despite the many recorded cases of people with severe and chronic problems, there is little available evidence of successful treatments. There is also little evidence of how rehabilitation back into the workforce of workers with these disorders can be most successfully undertaken. This highlights that prevention and early intervention strategies are paramount to the control of work-related musculoskeletal problems.

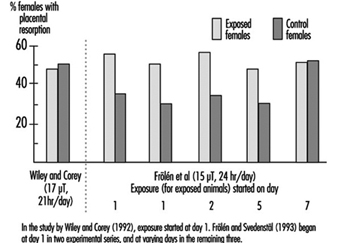

Reproductive Effects - Human Evidence

The safety of visual display units (VDUs) in terms of reproductive outcomes has been questioned since the widespread introduction of VDUs in the work environment during the 1970s. Concern for adverse pregnancy outcomes was first raised as a result of numerous reports of apparent clusters of spontaneous abortion or congenital malformations among pregnant VDU operators (Blackwell and Chang 1988). While these reported clusters were determined to be no more than what could be expected by chance, given the widespread use of VDUs in the modern workplace (Bergqvist 1986), epidemiologic studies were undertaken to explore this question further.

From the published studies reviewed here, a safe conclusion would be that, in general, working with VDUs does not appear to be associated with an excess risk of adverse pregnancy outcomes. However, this generalized conclusion applies to VDUs as they are typically found and used in offices by female workers. If, for some technical reason, a small proportion of VDUs did induce a strong magnetic field, this general conclusion of safety could not be applied to that special situation, since the published studies are unlikely to have had the statistical power to detect such an effect. To support generalizable statements of safety, future studies of the risk of adverse pregnancy outcomes associated with VDUs need to use more refined exposure measures.
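The point about a small proportion of high-field VDUs can be illustrated with simple arithmetic. The sketch below is not drawn from any of the reviewed studies: the function name, the share of high-field units and the relative risk of 3.0 are all hypothetical values chosen for illustration, and it assumes that users of ordinary units carry no excess risk. Under those assumptions, the relative risk seen when all VDU users are analysed as a single group is a weighted average that stays close to 1.

```python
def pooled_relative_risk(high_field_share: float, high_field_rr: float) -> float:
    """Relative risk observed when all VDU users are pooled into one group.

    Assumptions (illustrative only): users of ordinary units have no excess
    risk (RR = 1), and a share `high_field_share` of users work at units
    with relative risk `high_field_rr`.
    """
    if not 0.0 <= high_field_share <= 1.0:
        raise ValueError("high_field_share must be between 0 and 1")
    # Weighted average of RR = 1 (ordinary units) and high_field_rr.
    return 1.0 + high_field_share * (high_field_rr - 1.0)


# Hypothetical shares of high-field units and a hypothetical RR of 3.0.
for share in (0.01, 0.05, 0.10):
    rr = pooled_relative_risk(share, 3.0)
    print(f"{share:.0%} high-field units: pooled RR {rr:.2f}")
```

With these made-up inputs the pooled relative risk stays between 1.02 and 1.20, which is the kind of small departure from 1 that a study classifying exposure only as "VDU use" would struggle to distinguish from chance.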

The most frequently studied reproductive outcomes have been:

- Spontaneous abortion (10 studies): usually defined as a hospitalized unintentional cessation of pregnancy occurring before 20 weeks of gestation.

- Congenital malformation (8 studies): many different types were assessed, but in general, they were diagnosed at birth.

- Other outcomes (8 studies) such as low birthweight (under 2,500 g), very low birthweight (under 1,500 g), and fecundability (time to pregnancy from cessation of birth control use) have also been assessed. See table 1.
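Most results in table 1 are reported as an odds ratio (OR) or relative risk (RR) with a 95% confidence interval (CI). As a reminder of how such a figure is read, the sketch below computes a crude odds ratio and a log-based (Woolf) interval from a 2×2 table. The function name and the counts are hypothetical and are not taken from any of the studies; individual studies may have used adjusted estimates or other interval methods.

```python
import math


def odds_ratio_ci(exposed_cases, unexposed_cases,
                  exposed_controls, unexposed_controls, z=1.96):
    """Crude odds ratio with a Woolf (log-based) confidence interval.

    z = 1.96 gives an approximate 95% interval.
    """
    a, b, c, d = exposed_cases, unexposed_cases, exposed_controls, unexposed_controls
    if min(a, b, c, d) <= 0:
        raise ValueError("all four cell counts must be positive")
    odds_ratio = (a * d) / (b * c)
    # Standard error of log(OR) from the four cell counts.
    se_log_or = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(odds_ratio) - z * se_log_or)
    upper = math.exp(math.log(odds_ratio) + z * se_log_or)
    return odds_ratio, lower, upper


# Hypothetical counts: 60 of 200 cases and 100 of 400 controls used a VDU.
or_, lo, hi = odds_ratio_ci(60, 140, 100, 300)
print(f"OR {or_:.2f} (95% CI {lo:.2f}-{hi:.2f})")
print("interval includes 1" if lo < 1 < hi else "interval excludes 1")
```

An interval that includes 1, as in this made-up example, is what the table's conclusions describe as no statistically significant association.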

Table 1. VDU use as a factor in adverse pregnancy outcomes

| Objectives | | Methods | | | | Results | |
|---|---|---|---|---|---|---|---|
| **Study** | **Outcome** | **Design** | **Cases** | **Controls** | **Exposure** | **OR/RR (95% CI)** | **Conclusion** |
| Kurppa et al. | Congenital malformation | Case-control | 1,475 | 1,475 same age, same delivery date | Job titles, | 235 cases, | No evidence of increased risk among women who reported exposure to VDU or among women whose job titles indicated possible exposure. |
| Ericson and Källén (1986) | Spontaneous abortion, | Case-case | 412 | 1,032 similar age and from same registry | Job titles | 1.2 (0.6-2.3) | The effect of VDU use was not statistically significant. |
| Westerholm and Ericson | Stillbirth, | Cohort | 7 | 4,117 | Job titles | 1.1 (0.8-1.4) | No excesses were found for any of the studied outcomes. |
| Bjerkedal and Egenaes (1986) | Stillbirth, | Cohort | 17 | 1,820 | Employment records | NR(NS) | The study concluded that there was no indication that introduction of VDUs in the centre has led to any increase in the rate of adverse pregnancy outcomes. |
| Goldhaber, Polen and Hiatt | Spontaneous abortion, | Case-control | 460 | 1,123 20% of all normal births, same region, same time | Postal questionnaire | 1.8 (1.2-2.8) | Statistically significant increased risk for spontaneous abortions for VDU exposure. No excess risk for congenital malformations associated with VDU exposure. |
| McDonald et al. (1988) | Spontaneous abortion, | Cohort | 776 | | Face-to-face interviews | 1.19 (1.09-1.38) | No increase in risk was found among women exposed to VDUs. |
| Nurminen and Kurppa (1988) | Threatened abortion, | Cohort | 239 | | Face-to-face interviews | 0.9 | The crude and adjusted rate ratios did not show statistically significant effects for working with VDUs. |
| Bryant and Love (1989) | Spontaneous abortion | Case-control | 344 | 647 | Face-to-face interviews | 1.14 (p = 0.47) prenatal | VDU use was similar between the cases and both the prenatal controls and postnatal controls. |
| Windham et al. (1990) | Spontaneous abortion, | Case-control | 626 | 1,308 same age, same last menstrual period | Telephone interviews | 1.2 (0.88-1.6) | Crude odds ratios for spontaneous abortion were 1.2 (95% CI 0.88-1.6) for VDU use of less than 20 hours per week and 1.3 (95% CI 0.87-1.5) for a minimum of 20 hours per week. Risks for low birthweight and intra-uterine growth retardation were not significantly elevated. |
| Brandt and | Congenital malformation | Case-control | 421 | 1,365; 9.2% of all pregnancies, same registry | Postal questionnaire | 0.96 (0.76-1.20) | Use of VDUs during pregnancy was not associated with a risk of congenital malformations. |
| Nielsen and | Spontaneous abortion | Case-control | 1,371 | 1,699 9.2% | Postal questionnaire | 0.94 (0.77-1.14) | No statistically significant risk for spontaneous abortion with VDU exposure. |
| Tikkanen and Heinonen | Cardiovascular malformations | Case-control | 573 | 1,055 same time, hospital delivery | Face-to-face interviews | Cases 6.0%, controls 5.0% | No statistically significant association between VDU use and cardiovascular malformation. |
| Schnorr et al. | Spontaneous abortion | Cohort | 136 | 746 | Company records measurement of magnetic field | 0.93 (0.63-1.38) | No excess risk for women who used VDUs during first trimester and no apparent |
| Brandt and | Time to pregnancy | Cohort | 188 | | Postal questionnaire | 1.61 (1.09-2.38) | For a time to pregnancy of greater than 13 months, there was an increased relative risk for the group with at least 21 hours of weekly VDU use. |
| Nielsen and | Low birthweight, | Cohort | 434 | | Postal questionnaire | 0.88 (0.67-1.66) | No increase in risk was found among women exposed to VDUs. |
| Roman et al. | Spontaneous abortion | Case-control | 150 | 297 nulliparous hospital | Face-to-face interviews | 0.9 (0.6-1.4) | No relation to time spent using VDUs. |
| Lindbohm | Spontaneous abortion | Case-control | 191 | 394 medical registers | Employment records field measurement | 1.1 (0.7-1.6), | Comparing workers with exposure to high magnetic field strengths to those with undetectable levels, the ratio was 3.4 (95% CI 1.4-8.6). |